Port STP change designated→disabled; Port status change old: 1Gfdx, new: down


Yesterday at 15:10, one of our Meraki MS220 switches disabled all the ports connected to our MR34 Wi-Fi access points, with the result that all of the APs were powered off.

In the logs I can see the following for every port on the switch:

```
Port STP change Port x designated→disabled
Port status change port: x, old: 1Gfdx, new: down
```

Why would STP disable all the ports on the switch at the same time? No other switches are affected, just this one.

I rebooted the switch and the ports were enabled again.

This switch has been working fine for 5 years.

Meraki support say:

"Once a device has been rebooted, it loses its logs... we need to see the switch in its failing state to identify the cause."

That isn't much help when you have 1,000 students and 200 staff all relying on the Wi-Fi.

Any ideas?

Thanks,

Richard.

---

Did it only disable the ports connected to APs or all of the ports?

What are the port configurations of the failing ports? Access/trunk? (R)STP on/off? Any STP guard functionality enabled?

Have you tested just cycling one of the failing ports instead of rebooting the whole switch?

What's your PoE budget like?

---

Great questions!

This only happened to the ports connected to APs. The switch is only used for APs, but none of the unused ports were disabled.

All ports are configured as trunk ports:

- RSTP: enabled
- STP guard: disabled
- PoE: enabled
- Link: autonegotiate
- Schedule: unscheduled
- Isolation: disabled
- Type: trunk
- Native VLAN: 201
- Allowed VLANs: all
- Trusted: disabled
- UDLD: alert only

No, I didn't think to power cycle one port. I will do that if it happens again.

PoE budget:

- Consumption: 161.4 W / 370 W
- Budgeted: 480 W / 370 W

---

There were issues in the past where the power supply was misidentified by the switch. I think they resolved it via firmware upgrades.

Check your switches' event logs for "PoE budget changes".

Something like: "PoE budget change PoE budget changed from 740W to 0W".

---

Yes. Which firmware version are you running?

---

Indeed, @Ben is probably on to something. See this post with someone seemingly experiencing the same issue as you: https://community.spiceworks.com/topic/453087-meraki-switches-randomly-dropping-poe-budget-to-0?page...

This was @merakisimon's response back then, though it dates back to December 2016. What's the software version on your switch?

Also, I noticed that you are over the PoE budget on your switch. Strictly speaking, your MR34s use 18 W max. That means a maximum of 20 APs per switch on a 370 W switch. It seems like you have 26 connected.
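
For reference, the arithmetic behind that estimate can be sketched as follows, assuming (as the post does) that every MR34 is reserved at its 18 W datasheet maximum. The function name is illustrative only.

```python
import math


def max_devices(budget_w: float, per_device_w: float) -> int:
    """How many devices fit in a PoE budget if each is reserved at per_device_w."""
    return math.floor(budget_w / per_device_w)


# 370 W switch budget, 18 W assumed per MR34
print(max_devices(370, 18))  # 20
# Number of 18 W devices implied by the 480 W "Budgeted" figure
print(max_devices(480, 18))  # 26
```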

---

We have 16 MR34 APs connected to our MS220 switch. Most are using 10.x W, for a total of 184 W according to my math.

---

There's nothing wrong with your math. But the maximum expected power usage of the AP is 18 W, as per the datasheet. That's also why the dashboard shows that 480 W of the available 370 W has been budgeted (reserved). As long as the actual usage doesn't exceed 370 W, you'll likely be fine.
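
A quick check with the figures posted in this thread suggests another possible reading of the 480 W number. This assumes the 16-AP count applies to the switch whose dashboard showed 480 W budgeted. On that assumption, 16 × 18 W is only 288 W, whereas 16 × 30 W is exactly 480 W. 30 W is the per-port allocation at the switch (PSE) end for an 802.3at Class 4 device, so one plausible but unconfirmed explanation is that the dashboard reserves power by PoE class rather than by datasheet maximum. The sketch below only does that arithmetic. The class-based model is an assumption, not documented Meraki behavior.

```python
def reservation(num_devices: int, per_device_w: float) -> float:
    """Total watts reserved if every device is allocated per_device_w."""
    return num_devices * per_device_w


APS = 16             # MR34 count reported in the thread
AVAILABLE_W = 370.0  # PoE budget shown on the dashboard
CONSUMED_W = 161.4   # actual draw shown on the dashboard

datasheet_model = reservation(APS, 18.0)  # 18 W MR34 datasheet maximum
class4_model = reservation(APS, 30.0)     # assumption: 802.3at Class 4 allocation

print(datasheet_model)                    # 288.0
print(class4_model)                       # 480.0
print(round(AVAILABLE_W - CONSUMED_W, 1))  # 208.6 W headroom on actual draw
```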

I don't think it's related to this problem, though. If it were, the behavior would be different: it would just turn off APs, starting from those connected to the highest port number, until it is within budget.

Note: If the PoE budget is exceeded, PoE power will be disbursed based on port count. In the event the budget is exceeded, PoE priority favors lower port numbers first (e.g. with all 24 ports in use on an MS22P, if the budget is exceeded and a device requests 6W of power on port 1, PoE on port 24 will be disabled).
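
The priority rule quoted above can be modeled as follows: lower port numbers win, so PoE is cut from the highest-numbered port down until the load fits. This is a simplified sketch. It treats each port's draw as fixed and ignores how the switch actually measures or reserves power. The function name and the wattages in the example are hypothetical.

```python
def shed_poe(draw_by_port: dict[int, float], budget_w: float) -> list[int]:
    """Return ports whose PoE is cut, highest port first, until the load fits the budget."""
    powered = dict(draw_by_port)
    disabled: list[int] = []
    while powered and sum(powered.values()) > budget_w:
        highest = max(powered)  # lowest priority = highest port number
        disabled.append(highest)
        del powered[highest]
    return disabled


# Hypothetical: 24 ports at 15 W each, then port 1 asks for 6 W more
draws = {port: 15.0 for port in range(1, 25)}
draws[1] += 6.0
print(shed_poe(draws, budget_w=365.0))  # [24]
```

In this model, ports drop one at a time from the top. For every port to go dark together, the budget itself would have to collapse, as in the "740W to 0W" budget-change event mentioned earlier in the thread.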

---

Thanks for the explanation BrechtSchamp, that's more than I could find in Meraki's documentation!

I suspect a bug in the firmware. We will wait and see what happens after a firmware upgrade...

Richard.

---

Hi,

I am currently seeing the same issue on my MS220-8P.

I have a very simple setup: router -> switch -> AP.

Apart from this, I have 2 end devices connected to the switch.

```
Apr 9 22:57:34 Port STP change Port 8 disabled→designated
Apr 9 22:57:34 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:56:11 Port STP change Port 8 designated→disabled
Apr 9 22:56:11 Port status change port: 8, old: 100fdx, new: down
Apr 9 22:56:08 Port STP change Port 8 disabled→designated
Apr 9 22:56:08 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:56:07 Port STP change Port 8 designated→disabled
Apr 9 22:56:07 Port status change port: 8, old: 100fdx, new: down
Apr 9 22:55:51 Port STP change Port 8 disabled→designated
Apr 9 22:55:51 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:55:26 Port STP change Port 7 disabled→designated
Apr 9 22:55:26 Port status change port: 7, old: down, new: 100fdx
Apr 9 22:55:17 Port STP change Port 7 designated→disabled
Apr 9 22:55:16 Port status change port: 7, old: 100fdx, new: down
Apr 9 22:55:10 Port STP change Port 7 disabled→designated
Apr 9 22:55:10 Port status change port: 7, old: down, new: 100fdx
Apr 9 22:51:39 Port STP change Port 8 designated→disabled
Apr 9 22:51:39 Port status change port: 8, old: 100fdx, new: down
```

One endpoint was recently connected to port 7, the AP is on port 1, and another endpoint is on port 6. Port 8 is connected to the router that connects to the rest of the world.
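
To see how long each port was actually down, you can pair the status-change lines in this log. Each `new: down` is matched with the next link-up on the same port. This is a minimal sketch that assumes the log format shown in this post. The function name is illustrative.

```python
import re
from datetime import datetime

# e.g. "Apr 9 22:56:11 Port status change port: 8, old: 100fdx, new: down"
STATUS = re.compile(
    r"^(\w{3}\s+\d{1,2} \d{2}:\d{2}:\d{2}) Port status change "
    r"port: (\d+), old: ([^,]+), new: (\S+)$"
)


def outage_durations(lines):
    """Map port -> list of link-down durations in seconds, oldest first."""
    events = []
    for line in lines:
        m = STATUS.match(line.strip())
        if m:
            # The log has no year; pin one so strptime is unambiguous
            ts = datetime.strptime("2000 " + m[1], "%Y %b %d %H:%M:%S")
            events.append((ts, int(m[2]), m[4]))
    events.sort()  # the dashboard lists newest first; replay oldest first
    went_down = {}
    outages = {}
    for ts, port, new in events:
        if new == "down":
            went_down[port] = ts
        elif port in went_down:  # a link-up that ends a known outage
            seconds = int((ts - went_down.pop(port)).total_seconds())
            outages.setdefault(port, []).append(seconds)
    return outages


SAMPLE = """
Apr 9 22:57:34 Port STP change Port 8 disabled→designated
Apr 9 22:57:34 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:56:11 Port status change port: 8, old: 100fdx, new: down
Apr 9 22:56:08 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:56:07 Port status change port: 8, old: 100fdx, new: down
Apr 9 22:55:51 Port status change port: 8, old: down, new: 100fdx
Apr 9 22:55:26 Port status change port: 7, old: down, new: 100fdx
Apr 9 22:55:16 Port status change port: 7, old: 100fdx, new: down
Apr 9 22:55:10 Port status change port: 7, old: down, new: 100fdx
Apr 9 22:51:39 Port status change port: 8, old: 100fdx, new: down
"""

print(outage_durations(SAMPLE.splitlines()))
# {7: [10], 8: [252, 1, 83]}
```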

Let me know if you need more information from me.

Kind Regards,

Emanuil Svetlinski

---

Are you also using firmware version 10.35, or are you on the latest version?

I suspect it's a bug in the firmware.

Since I upgraded, I haven't had this problem anymore (but then I only had it on one switch in the past five years anyway) 😜

---

@Anonymous I am using MS 10.45 for the switch and MR 25.13 for the AP.

What versions are you using?

---

@svetlinski I am running the same firmware and having the same issue. Do you know if anyone has determined the cause and developed a fix or work around?

---

I haven't had this issue again, since upgrading the firmware.

This just confirms my suspicion: if you want reliability, don't choose Meraki. Go with traditional Cisco.

If you want cool new features and cloud management, choose Meraki. But be aware that they are not an enterprise-suitable company.

---

It appears the bug is in the MS 11.17 firmware as well. We updated all our switches this past weekend, and since then I have had ports randomly disabled by LACP. I was able to fix that by selecting the down port and the one next to it, aggregating them, and then splitting those ports back out.

Yesterday and today, two switches became unreachable, and both were logging "Port STP change designated→disabled".

I had to reboot both of those switches in order to get the ports back up. So it's obvious Meraki hasn't fixed the problem from the previous versions of the firmware.

---

I have the same code version (10.45) on an MS225 switch but still see these messages in the event log.

---

Same issue on two MS220s and two MS225s. I contacted support, and they hadn't seen anything like it before. At Meraki's request, I updated to 10.45, checked the power supplies, isolated ports, and disabled CDP/LLDP. It's still occurring on all PoE devices. At least I know I'm not the only one.

---

You can refer them to my case if that helps:

Case 03656213

---

Thanks, I'll add that to my case, and hopefully it will be resolved.

---

Thanks. Curious: what was the resolution in your case?

---

No resolution to the case as of yet. The port STP changes are still occurring. The PCs on the switches are fine, but it's killing our PoE devices.

---

Interesting reading through this issue. I have been seeing some similar issues, but across a fiber connection rather than with clients or PoE devices. Meraki is stumped.

I looked through the known bugs and fixes for the firmware version you mentioned, but I don't see this issue listed. How are you sure this is a firmware problem?

Also, what was the resolution to your Case 03656213?

Thanks in advance

---

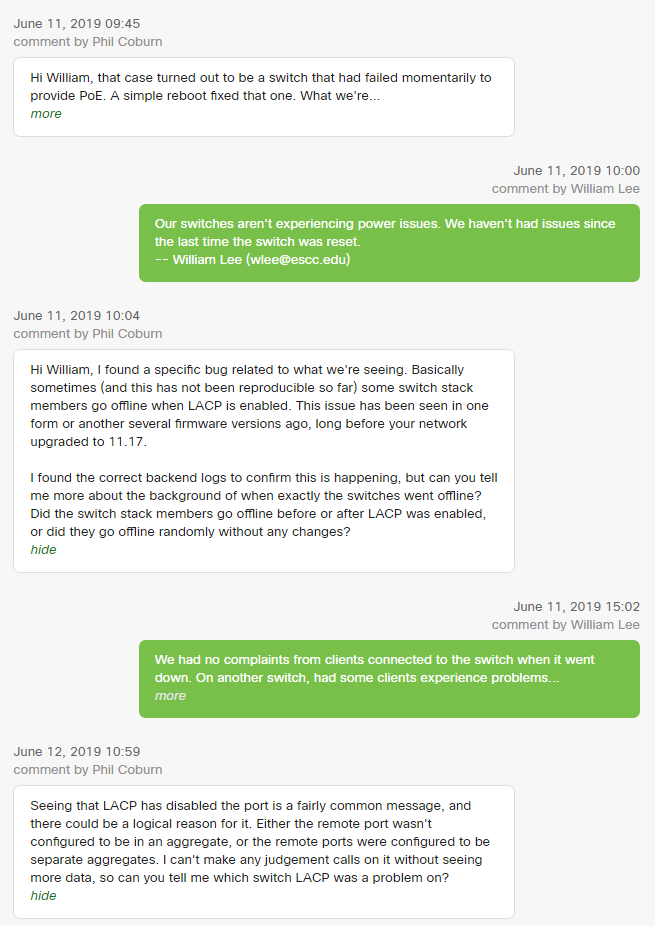

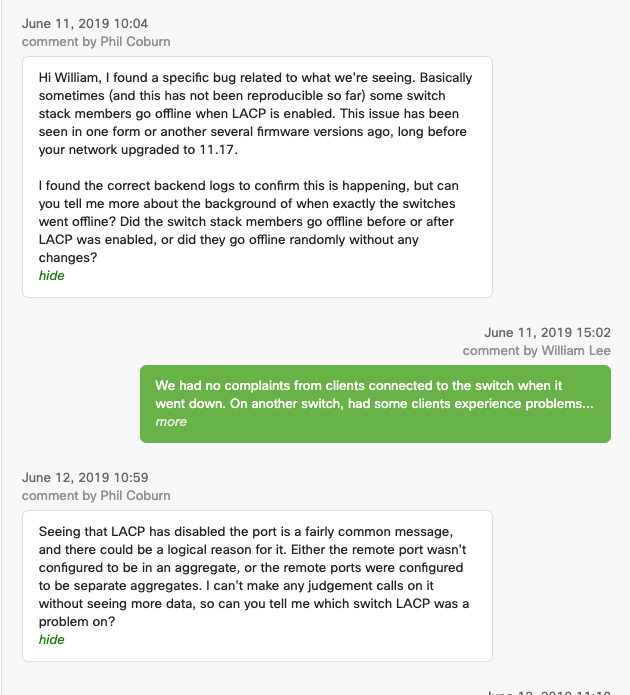

My most recent conversation about this issue. Obviously, it's something they can't figure out.

---

Right now, the only thing we have been able to figure out is that our PoE phones have LLDP turned on and the switches also have LLDP turned on. This could possibly cause a mismatch and make the devices reboot. We're really digging deep on this one.

---

Vince, did Meraki ever get back to you with a firmware fix for this? We are now experiencing similar behaviour with our IP phones (Cisco 8851s). We are using MS250s with 11.22 firmware. I am considering disabling LLDP on the phones per your post to see if this helps, but I wanted to know whether you are still doing this and whether it is still resolving the issue.

---

Ours just stopped doing it two weeks ago. Nothing has changed on our network. So, either Meraki fixed it on their end without telling me or it fixed itself.

---

Can you tell me the software version your Meraki switches are on?

---

We are currently running MS 11.22

---

Hello @Enterprise_IT,

Has the issue stopped completely with the new firmware? Do you have any stacks running L3 in the environment? I would really appreciate the feedback.

---

We do have several stacks running L3, however it seems the problem resolved itself. We are still running the same firmware and haven't done any network changes.

---

I can't find any POE Budget Changes in the logs.

We are using firmware version 10.35. I don't see any bug fixes after 10.35 for PoE.

I will schedule a firmware upgrade to the latest stable version at the weekend, just to be sure.

Thanks for your suggestions!

Richard.

---

I have an 8-port MS220 connected to an MR18 powered by PoE and have been experiencing the same things you described for the past week. I updated everything last weekend and had good service for about a week, but had more issues this morning. The logs show the STP message that you shared. It seems very strange that Meraki isn't able to identify the issue.

I only have the single MR18 PoE device on my MS220. Other devices are on dedicated wired ports. This is my home network.

Please let me know if any resolution is identified.

Thank you

---

I would like to clarify the distinction between the STP event and the link event to remove any confusion.

When a switch port experiences a loss of PHY (link goes down), it is at this point that STP also "disables" the port. In other words, the meaning of these events is not that the port is being disabled by STP. The meaning is that as a result of the port going down, the STP state changes to disabled.

Even if the event log ordering is reversed, this is still the case. This doesn't explain why a port goes down; it only clarifies that the port isn't going down because of any STP state. The STP change is itself a result of the loss of link status.

This does not explain all cases, but also be aware that many devices have various power-saving features and optimizations on the Ethernet side. These may downgrade the link speed or bring a link down for periods of time.
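
Andrew's point is that the STP role change is a consequence of the link event, not its cause. A tiny model can illustrate this by deriving both log lines from nothing but link up/down transitions. This sketch is for intuition only. It assumes, as the logs in this thread show, that the port returns straight to the designated role on link-up. The function name is hypothetical.

```python
def events_for_link_changes(port: int, link_up: list[bool], speed: str = "1Gfdx") -> list[str]:
    """Emit the status and STP log lines that a series of link transitions would produce."""
    status, role = "down", "disabled"  # start with the link down
    lines = []
    for up in link_up:
        new_status = speed if up else "down"
        if new_status == status:
            continue  # no PHY change, so no STP change either
        new_role = "designated" if up else "disabled"
        lines.append(f"Port status change port: {port}, old: {status}, new: {new_status}")
        lines.append(f"Port STP change Port {port} {role}→{new_role}")
        status, role = new_status, new_role
    return lines


for line in events_for_link_changes(3, [True, False]):
    print(line)
```

In this model, every `designated→disabled` line is paired with a `new: down` line. Nothing lets STP take a port down on its own.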

---

Thanks Andrew. This still doesn't explain why all the ports on one switch would be disabled at the same time, especially since all the other switches in the building (6 x MS220) were unaffected, even though they are also connected to MR34s.

---

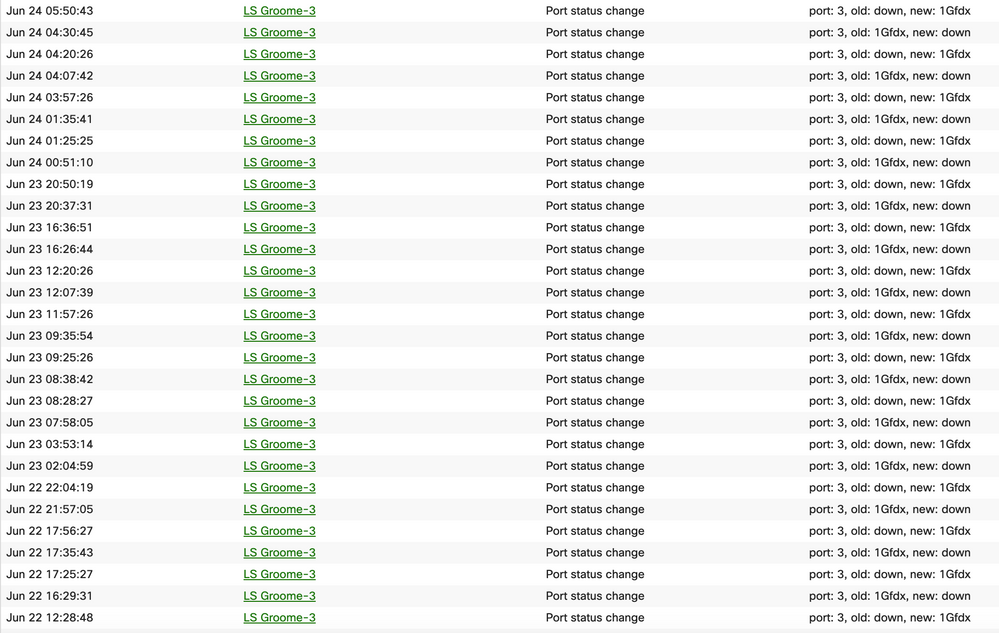

I'm experiencing the same issue on my MS350-48LP. A single port on the switch keeps changing status (attached image). We have a copier plugged into this port. Not sure if anyone has found a solution. Please advise.

My switch is updated to MS 10.45.

This is the port config:

---

@SchofieldT wrote:

> I'm experiencing the same issue on my MS350-48LP. A single port on the switch keeps changing status (attached image). We have a copier plugged into this port. Not sure if anyone has found a solution. Please advise.
>
> My switch is updated to MS 10.45.
>
> This is the port config:
>
> | Setting | Value |
> |---|---|
> | Switchport | LS Groome-3 / 3 |
> | Name | Copier |
> | Port enabled | Enabled |
> | PoE | Enabled |
> | Type | Access |
> | Access policy | Open |
> | VLAN | 1 |
> | Voice VLAN | 500 |
> | Link | Auto negotiate |
> | RSTP | Enabled |
> | STP guard | Disabled |
> | Port schedule | Unscheduled |
> | Port isolation | Disabled |
> | Trusted | Disabled |
> | Unidirectional link detection (UDLD) | Alert only (alerts will be generated if UDLD detects an error, but the port will not be shut down) |

It's not a classic case of the cleaning lady unplugging it for her vacuum, is it?

---

I'm experiencing essentially the same issue on an MS320-48 (firmware is up to date):

| Setting | Value |
|---|---|
| Description | Access port on VLAN 10 |
| Tags | OFC |
| Access policy | Open |
| Link negotiation | Auto negotiate (10 Mbps) |
| RSTP | Forwarding |
| Port schedule | Unscheduled |
| Isolation | Disabled |
| UDLD | Alert only |
| Port mirroring | Not mirroring traffic |

| Setting | Value |
|---|---|
| Description | Access port on VLAN 1 |
| Tags | OFC |
| Access policy | Open |
| Link negotiation | Auto negotiate (100 Mbps) |
| RSTP | Forwarding |
| Port schedule | Unscheduled |
| Isolation | Disabled |
| UDLD | Alert only |
| Port mirroring | Not mirroring traffic |

| Time | Switch | Event | Details |
|---|---|---|---|
| Jun 25 07:28:01 | 48-Port Main Switch | Port STP change | Port 9 disabled→designated |
| Jun 25 07:28:01 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 25 07:27:54 | 48-Port Main Switch | Port STP change | Port 9 designated→disabled |
| Jun 25 07:27:54 | 48-Port Main Switch | Port status change | port: 9, old: 10fdx, new: down |
| Jun 25 07:27:54 | 48-Port Main Switch | Cable test | ports: 9, disrupted_ports: 9 |
| Jun 25 07:27:39 | 48-Port Main Switch | Port STP change | Port 4 disabled→designated |
| Jun 25 07:27:39 | 48-Port Main Switch | Port status change | port: 4, old: down, new: 100fdx |
| Jun 25 07:27:21 | 48-Port Main Switch | Port STP change | Port 4 designated→disabled |
| Jun 25 07:27:21 | 48-Port Main Switch | Port status change | port: 4, old: 100fdx, new: down |
| Jun 25 07:27:21 | 48-Port Main Switch | Cable test | ports: 4, disrupted_ports: 4 |
| Jun 24 20:21:45 | 48-Port Main Switch | Port STP change | Port 9 disabled→designated |
| Jun 24 20:21:45 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 24 20:21:41 | 48-Port Main Switch | Port STP change | Port 9 designated→disabled |
| Jun 24 20:21:41 | 48-Port Main Switch | Port status change | port: 9, old: 10fdx, new: down |
| Jun 24 20:21:40 | 48-Port Main Switch | Port STP change | Port 9 disabled→designated |
| Jun 24 20:21:40 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 24 20:21:38 | 48-Port Main Switch | Port STP change | Port 9 designated→disabled |
| Jun 24 20:21:38 | 48-Port Main Switch | Port status change | port: 9, old: 100fdx, new: down |
| Jun 24 17:21:43 | 48-Port Main Switch | Port STP change | Port 9 disabled→designated |
| Jun 24 17:21:43 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 100fdx |
| Jun 24 17:21:39 | 48-Port Main Switch | Port STP change | Port 9 designated→disabled |
| Jun 24 17:21:39 | 48-Port Main Switch | Port status change | port: 9, old: 100fdx, new: down |
| Jun 24 17:01:45 | 48-Port Main Switch | Port STP change | Port 8 designated→disabled |
| Jun 24 17:01:45 | 48-Port Main Switch | Port status change | port: 8, old: 100fdx, new: down |
| Jun 24 14:37:17 | 48-Port Main Switch | Port STP change | Port 24 designated→disabled |
| Jun 24 14:37:17 | 48-Port Main Switch | Port status change | port: 24, old: 1Gfdx, new: down |
| Jun 24 12:29:58 | 48-Port Main Switch | Port STP change | Port 5 disabled→designated |
| Jun 24 12:29:58 | 48-Port Main Switch | Port status change | port: 5, old: down, new: 10fdx |
| Jun 24 12:29:56 | 48-Port Main Switch | Port STP change | Port 5 designated→disabled |
| Jun 24 12:29:56 | 48-Port Main Switch | Port status change | port: 5, old: 100fdx, new: down |
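
Earlier in the thread it was noted that links can come back at a lower speed. You can check for this in an event-log export like the one above. This is a minimal sketch that assumes rows in a `| time | switch | event | details |` layout and uses only the "Port status change" rows. The function name, speed map, and the subset of rows in the example are illustrative.

```python
import re
from datetime import datetime

# Matches the details cell of a "Port status change" row
DETAILS = re.compile(r"port: (\d+), old: ([^,]+), new: (\S+)")
# Full-duplex speeds seen in this log; anything else (e.g. "down") is ignored
SPEED_MBPS = {"10fdx": 10, "100fdx": 100, "1Gfdx": 1000}


def speed_downgrades(rows):
    """Return (time, port, previous_mbps, new_mbps) for links that came back up slower."""
    events = []
    for row in rows:
        cells = [c.strip() for c in row.strip().strip("|").split("|")]
        if len(cells) < 4 or cells[2] != "Port status change":
            continue  # header, separator, STP and cable-test rows
        m = DETAILS.search(cells[3])
        if m:
            # The export has no year; pin one so strptime is unambiguous
            ts = datetime.strptime("2000 " + cells[0], "%Y %b %d %H:%M:%S")
            events.append((ts, cells[0], int(m[1]), m[2], m[3]))
    events.sort()  # the dashboard lists newest first; replay oldest first
    last_speed = {}
    found = []
    for _, when, port, old, new in events:
        if old in SPEED_MBPS:
            last_speed[port] = SPEED_MBPS[old]
        if new in SPEED_MBPS:
            prev = last_speed.get(port)
            if prev is not None and SPEED_MBPS[new] < prev:
                found.append((when, port, prev, SPEED_MBPS[new]))
            last_speed[port] = SPEED_MBPS[new]
    return found


ROWS = """
| Jun 25 07:28:01 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 25 07:27:54 | 48-Port Main Switch | Port status change | port: 9, old: 10fdx, new: down |
| Jun 25 07:27:39 | 48-Port Main Switch | Port status change | port: 4, old: down, new: 100fdx |
| Jun 25 07:27:21 | 48-Port Main Switch | Port status change | port: 4, old: 100fdx, new: down |
| Jun 24 20:21:45 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 24 20:21:41 | 48-Port Main Switch | Port status change | port: 9, old: 10fdx, new: down |
| Jun 24 20:21:40 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 10fdx |
| Jun 24 20:21:38 | 48-Port Main Switch | Port status change | port: 9, old: 100fdx, new: down |
| Jun 24 17:21:43 | 48-Port Main Switch | Port status change | port: 9, old: down, new: 100fdx |
| Jun 24 17:21:39 | 48-Port Main Switch | Port status change | port: 9, old: 100fdx, new: down |
| Jun 24 12:29:58 | 48-Port Main Switch | Port status change | port: 5, old: down, new: 10fdx |
| Jun 24 12:29:56 | 48-Port Main Switch | Port status change | port: 5, old: 100fdx, new: down |
""".strip().splitlines()

print(speed_downgrades(ROWS))
# [('Jun 24 12:29:58', 5, 100, 10), ('Jun 24 20:21:40', 9, 100, 10)]
```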

---

I wonder if this is related to:

Have you guys got support tickets open?

---

So far, what seems to be working is turning off LLDP on the devices. Our PoE phones had LLDP turned on, and the switch also has LLDP turned on. It's causing a wattage conflict, and the phones reboot. We are letting the switch handle all LLDP requests, and so far so good. Hopefully this is the fix! Fingers crossed.
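
For context on the "wattage conflict" theory: with LLDP-MED, a powered device such as a phone advertises the power it wants in an Extended Power-via-MDI TLV. The switch can then allocate PoE based on that request. The sketch below builds that TLV according to my understanding of the ANSI/TIA-1057 layout (TLV type 127, OUI 00-12-BB, subtype 4, power in 0.1 W units). Verify the field encoding against the standard before relying on it. The sketch shows what is being negotiated, not what the Meraki switches or these phones actually send. The function name and default field codes are assumptions.

```python
import struct

LLDP_MED_OUI = bytes.fromhex("0012bb")
EXT_POWER_VIA_MDI = 4  # LLDP-MED subtype (assumed per ANSI/TIA-1057)


def power_via_mdi_tlv(watts: float, power_type: int = 1, source: int = 1, priority: int = 2) -> bytes:
    """Build an LLDP-MED Extended Power-via-MDI TLV (field codes are assumptions).

    power_type 1 = PD (powered device), source 1 = PSE, priority 2 = high.
    """
    deciwatts = round(watts * 10)  # power value is carried in 0.1 W units
    if not 0 <= deciwatts <= 1023:
        raise ValueError("power value must fit 0-102.3 W")
    flags = (power_type << 6) | (source << 4) | priority
    body = LLDP_MED_OUI + bytes([EXT_POWER_VIA_MDI, flags]) + struct.pack("!H", deciwatts)
    header = struct.pack("!H", (127 << 9) | len(body))  # 7-bit type, 9-bit length
    return header + body


print(power_via_mdi_tlv(12.5).hex())  # fe070012bb0452007d
```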

---

Another change we made was in the port configuration: we removed the voice VLAN setting. Before this change, our PoE devices were running at about 2.7 W; after the change, they are up to 4 W. The voice VLAN setting seems to have been preventing the PoE devices from getting the proper amount of power.

---

Did you make the port change and the LLDP changes simultaneously and then see improved PoE behavior, or did you make them at separate times? I'm curious so I can keep an eye out for this at my clients.

---

We did them simultaneously to minimize disruption to the end user, since changing the VLAN on the switch cuts the connection to the device momentarily. We are still testing this and have found it won't work on daisy-chained devices (phone > computer). You can still change the device's settings without affecting anything else; just don't change the switch.

---

We have got MS225-48LP / MS120-8P / MS220-48LP switches with Firmware 10.45.

There are a lot of Avaya IP phones and Cisco and Meraki access points connected.

None of them has any problems with PoE or LLDP.

All phones are running in a voice VLAN, and PCs connected to the phones are running in a client VLAN.

It's working well.

Only some printers and Apple Macs are changing the link speed all the time. The users can work without any issues.

---

Hey, just wondering if this fixed your issue. We have a few IP phones in the network that are having disconnect issues. I am planning to turn off LLDP on the phones, but I cannot take the voice VLAN off as you did, because it is in use.

---

After talking with our provider, Mitel, it was suggested that if a port scanner that is multicasting (such as IGMP) is turned on, the phones will perceive this as an error and reboot to regain the signal. Look at your port packets in the dashboard: multicast/broadcast messages on ours are above 10 million a day. This is just one of many suggestions we have gotten to fight this thing.
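
For scale, the sketch below converts the figure of 10 million multicast/broadcast packets a day into an average packet rate. It is a simple average; real traffic will be burstier.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_pps(packets_per_day: int) -> float:
    """Average packets per second for a daily packet count."""
    return packets_per_day / SECONDS_PER_DAY


print(round(average_pps(10_000_000), 1))  # 115.7
```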

---

[edit] I really think this is related to the MAC flap issue, or the MAC flap issue is related to this. I suspect these STP messages are just a product of the port going down for whatever reason, not the actual cause.

---

Just to keep this thread alive: I'm having this same problem on an MS220-8P with a Cat6 uplink port to an MX64 8 feet away. My (home) network is flat, so I don't understand why STP changes occur. The cable is fine.

MS220 firmware is 11.30

MX64 is 15.20

I didn't see if this was resolved.

---

Same here, my case is 04772658. So far the Meraki support rep has done nothing but say that these things happen when the port or cable is bad, but there is no way 16 ports on 3 different switches went bad at the same time. This started for me when I updated to MS 11.22. Since then we have been seeing short, sporadic outages, enough to trigger external alerts from Pingdom. When these alerts come in, I always see a bunch of UDLD alerts, ports flip-flopping between down and up, and STP going from designated to disabled. The UDLD alerts only happen on the ports that connect the switches to each other: 4 switches with redundant connections (so STP is needed, and it seems to be correctly disabling the redundant links). But why do these 16 ports, which happen to be in aggregate groups of 4-8 ports connecting the switches to each other, keep flapping? No useful answer from Meraki 5 days into the ticket.

Example:

| Time | Switch | Event | Details |
|---|---|---|---|
| Jan 27 13:15:40 | SW2B | UDLD alert | port: 41, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:15:25 | SW2A | UDLD alert | port: 42, state: bidirectional, port_blocked: false |
| Jan 27 13:15:19 | SW2B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:14:47 | SW2A | UDLD alert | port: 42, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:14:46 | SW1B | UDLD alert | port: 44, state: bidirectional, port_blocked: false |
| Jan 27 13:14:16 | SW2A | UDLD alert | port: 43, state: bidirectional, port_blocked: false |
| Jan 27 13:14:10 | SW1B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:13:39 | SW2A | UDLD alert | port: 43, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:13:19 | SW1B | UDLD alert | port: 41, state: bidirectional, port_blocked: false |
| Jan 27 13:12:43 | SW1B | UDLD alert | port: 41, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:12:30 | SW2B | UDLD alert | port: 42, state: bidirectional, port_blocked: false |
| Jan 27 13:12:26 | SW2B | UDLD alert | port: 44, state: bidirectional, port_blocked: false |
| Jan 27 13:11:52 | SW2B | UDLD alert | port: 42, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:11:50 | SW2B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:11:18 | SW2B | Port STP change | Port AGGR/1 designated→alternate |
| Jan 27 13:11:18 | SW1B | Port STP change | Port AGGR/1 designated→alternate |
| Jan 27 13:11:17 | SW2A | Port STP change | Port AGGR/3 designated→root |
| Jan 27 13:11:17 | SW2A | Port STP change | Port AGGR/2 alternate→designated |
| Jan 27 13:11:17 | SW2A | Port STP change | Port AGGR/1 root→designated |
| Jan 27 13:11:17 | SW2A | Port STP change | Port AGGR/2 designated→alternate |
| Jan 27 13:11:17 | SW2A | Port STP change | Port AGGR/1 designated→root |
| Jan 27 13:11:16 | SW2B | Port STP change | Port AGGR/1 alternate→designated |
| Jan 27 13:11:16 | SW1B | Port STP change | Port AGGR/1 alternate→designated |
| Jan 27 13:11:15 | SW2A | Port STP change | Port AGGR/3 root→designated |
| Jan 27 13:11:14 | SW2B | Port STP change | Port AGGR/1 designated→alternate |
| Jan 27 13:11:14 | SW1B | Port STP change | Port AGGR/1 designated→alternate |
| Jan 27 13:11:13 | SW2A | Port STP change | Port AGGR/1 alternate→designated |
| Jan 27 13:11:13 | SW2A | Port STP change | Port AGGR/1 designated→alternate |
| Jan 27 13:11:12 | SW2B | Port STP change | Port AGGR/1 alternate→designated |
| Jan 27 13:11:12 | SW1B | Port STP change | Port AGGR/1 alternate→designated |
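
To see which inter-switch links report faults most often, you can tally the UDLD rows per switch and port. This is a minimal sketch that assumes the `| time | switch | event | details |` row layout of the excerpt above. The function name is illustrative.

```python
import re
from collections import Counter

UDLD_UNI = re.compile(r"port: (\d+), state: unidirectional")


def unidirectional_counts(rows):
    """Count UDLD 'unidirectional' alerts per (switch, port)."""
    counts = Counter()
    for row in rows:
        cells = [c.strip() for c in row.strip().strip("|").split("|")]
        if len(cells) >= 4 and cells[2] == "UDLD alert":
            m = UDLD_UNI.search(cells[3])
            if m:
                counts[(cells[1], int(m[1]))] += 1
    return counts


ROWS = """
| Jan 27 13:15:40 | SW2B | UDLD alert | port: 41, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:15:25 | SW2A | UDLD alert | port: 42, state: bidirectional, port_blocked: false |
| Jan 27 13:15:19 | SW2B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:14:47 | SW2A | UDLD alert | port: 42, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:14:46 | SW1B | UDLD alert | port: 44, state: bidirectional, port_blocked: false |
| Jan 27 13:14:16 | SW2A | UDLD alert | port: 43, state: bidirectional, port_blocked: false |
| Jan 27 13:14:10 | SW1B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:13:39 | SW2A | UDLD alert | port: 43, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:13:19 | SW1B | UDLD alert | port: 41, state: bidirectional, port_blocked: false |
| Jan 27 13:12:43 | SW1B | UDLD alert | port: 41, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:12:30 | SW2B | UDLD alert | port: 42, state: bidirectional, port_blocked: false |
| Jan 27 13:12:26 | SW2B | UDLD alert | port: 44, state: bidirectional, port_blocked: false |
| Jan 27 13:11:52 | SW2B | UDLD alert | port: 42, state: unidirectional (outbound fault), port_blocked: false |
| Jan 27 13:11:50 | SW2B | UDLD alert | port: 44, state: unidirectional (outbound fault), port_blocked: false |
""".strip().splitlines()

counts = unidirectional_counts(ROWS)
print(counts.most_common(1))  # [(('SW2B', 44), 2)]
```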

---

Similar issue: when the port STP changes occur on my uplink port to the WAN, it takes the whole network down. Eventually it triggers a power supply removed/inserted error, as if the switch had been rebooted (it physically wasn't).

It's happening at 3 different locations, so a real hardware issue is highly doubtful, and power has been verified to be good.

---

That must be a bug. Did you already open a case with support?

---

I will keep an eye on this thread.

We see these A LOT (like a zillion times a day), and I would be very surprised if all the cables/plugs everywhere in the installation were faulty.

Any news?

(SW: MS 11.30)

---

I have the same issue on different LANs with MS225 switches running MS 11.30, but only on client ports, not on uplink ports. At first I thought it was a power-saving issue on the clients. Apple Macs have the most issues.

regards

---

Hi @redsector,

I only have APs connected to the ports doing this.

Like you, I see no issues on uplink ports.

It will be interesting to hear what the engineers say.

---

Access points from Cisco (classic) and Cisco Meraki (MR32, MR33, MR34, MR42, MR42E, MR52, MR45) are connected (trunk) to my Meraki network ports (software MS 11.30) and are stable. The uplink ports (to Cisco classic and to Meraki) are also stable.

Only clients (access) from Dell and Apple are having this issue.

---

This really sounds the same as the MAC flap issue on 11.x.

Or the MAC flap issue is the same as this.

If it's the MAC flap issue then the STP messages are just a byproduct of the port going down. If this is a separate and distinct issue with the BPDUs as someone mentioned then the STP messages are relevant.

I can say that, when I went to 11.22 my Cisco IP phones were going offline like crazy. I have Cisco 3905s and 8811s, also ATA-190s for analog bridge.

The MR34 and MR52 access points that I have were stable throughout the issues.

---

No issues with Avaya IP-phones.

---

I had to call support by phone to get someone to move the case forward. The phone tech said that he noticed 97% cpu usage on my core switch. I wish they expose that info in the portal, all I can see as far as health for the switch is a nice green "Healthy", meanwhile CPU is at 97% and ports are flapping in the logs. He thinks the CPU might be causing ports to go down and then generating the UDLD and STP alerts. He asked me to update to the latest (11.30) which I did this morning, but still see the same flapping in the logs, and I am waiting to a support tech to tell me what the CPU looks like now. He also cannot explain what he is seeing and thought it might be a performance bug (hence he asked me to upgrade).

To clarify: in the logs, the ports that are flapping are the 16 ports that connect 3 switches to my root switch, and they only alert from the perspective of those 3 switches. The root switch sees no issues on its end of those 16 connections (its own 16 ports). Another 8 ports connect 2 of those switches to the secondary root switch, but those are disabled (correctly) by STP and are not seen flapping in the logs. These ports are aggregated. What I usually see in the logs is that a few of the individual member ports alert about becoming unidirectional (UDLD in alert-only mode, no enforcement; unidirectional means frames are only passing in one direction) and then go back to bidirectional right away. Then I see STP cycle the entire aggregate port between root, alternate and disabled. This happens constantly in the logs, but I only see a measurable outage when lots of UDLD alerts appear together.
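If you want to check the "outage only when many UDLD alerts arrive together" pattern against an exported log, grouping alert timestamps into bursts is one way to do it. A minimal Python sketch, assuming the UDLD alert times have already been extracted into `datetime` objects; the function names, the 60-second gap and the burst-size threshold are illustrative choices, not Meraki values:

```python
from datetime import datetime, timedelta

def group_bursts(timestamps, gap=timedelta(seconds=60)):
    """Group alert timestamps into bursts.

    A new burst starts whenever the time since the previous alert
    exceeds `gap`. The 60-second default is an illustrative guess.
    """
    bursts = []
    for ts in sorted(timestamps):
        if bursts and ts - bursts[-1][-1] <= gap:
            bursts[-1].append(ts)  # close to the previous alert: same burst
        else:
            bursts.append([ts])  # gap too large: start a new burst
    return bursts

def large_bursts(timestamps, min_size=5, gap=timedelta(seconds=60)):
    """Keep only bursts with at least `min_size` alerts (hypothetical threshold)."""
    return [b for b in group_bursts(timestamps, gap) if len(b) >= min_size]

# Hypothetical UDLD alert times: three close together, one isolated.
t0 = datetime(2020, 1, 1, 12, 0, 0)
alerts = [t0, t0 + timedelta(seconds=10), t0 + timedelta(seconds=30),
          t0 + timedelta(minutes=10)]
for burst in large_bursts(alerts, min_size=3):
    print(burst[0], "->", len(burst), "alerts")
```

The burst start times can then be lined up against the times users reported blips.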

^

I'd be interested in seeing whether that high CPU continues; it does seem like it could cause the port flap issue.

I wish we could see that in the dashboard.

According to the tech, CPU was still high, so he disabled DNS inspection:

"I did notice a spike in the CPU after the update as well. I have disabled DNS and MDNS inspection on the core switch "SW1A" since we had noticed an unusual amount of DNS traffic during the pcaps taken on the switch."

I am seeing fewer UDLD alerts, but the 3-4 UDLD alerts from the last few days coincided with network blips and outage alerts. I still see a ton of STP alerts about the aggregate ports that interconnect the 4 switches changing state, something like 4-25 times per hour, with no alerts about network issues.
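To put a number like "4-25 times per hour" on a longer stretch of log, you can bucket the STP change timestamps by clock hour. A small Python sketch, assuming the timestamps are already parsed into `datetime` objects; `changes_per_hour` and `hourly_range` are hypothetical helper names:

```python
from collections import Counter
from datetime import datetime

def changes_per_hour(timestamps):
    """Count events per clock hour by truncating each timestamp to the hour."""
    return Counter(ts.replace(minute=0, second=0, microsecond=0) for ts in timestamps)

def hourly_range(timestamps):
    """Return (min, max) events per hour. Assumes at least one event.

    Only hours with at least one event are counted, so quiet hours
    do not pull the minimum down to zero.
    """
    counts = changes_per_hour(timestamps)
    return min(counts.values()), max(counts.values())

# Hypothetical STP change times
stp = [datetime(2020, 1, 1, 12, 5), datetime(2020, 1, 1, 12, 40),
       datetime(2020, 1, 1, 13, 10)]
print(hourly_range(stp))
```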

I also noticed that there is already a new version, 11.31, though it doesn't list this particular issue. Here are the release notes:

Alerts

- MS210/225/250 switches cannot forward IPv6 Router Advertisement and Neighbor Solicitation packets when flood unknown multicast is disabled (has always been true)

- MS 11.31 is identical to MS 11.30 for all models EXCEPT the MS390

Additions

- Support for MS125, MS355, and MS450 Series switches

- Support for MS390 Series Switches

- Support port bounce AVP for RADIUS CoA (Cisco:Avpair="subscriber:command=bounce-host-port")

- Support MLD Snooping (currently enabled/disabled based on IGMP Snooping configuration)

- Event logging for errors with ECMP routes

- Support use of alternate interface to source DHCP Relay, RADIUS, SNMP and Syslog traffic

- Improve stability during high inbound PIM Register load on MS350/355/410/425/450 series switches

- STP BPDU inter-arrival monitoring

- CoPP hardening for DNS, MDNS snooping on MS210/225/250/350/355/410/420/425/450 series switches

Bug fixes

- MS390 switches do not support port mirroring

Known issues

- MS390 series switches Max MTU is limited to 9198 bytes

- MS390 series switches do not display stackport connectivity status in dashboard

- MS390 series switches will not display packet details in the DHCP Servers page

- MS390 series switches do not support the 'next-server' or 'bootfile' parameters in DHCP messages

- Rebooting a single switch in an MS390 stack will reboot the entire stack

- LLDP frames from MS390 series switches will use a single system name instead of the Dashboard given name

- MS390 series switches will not display the advertising router ID for OSPF

- MS390 series switches with OSPF enabled will default to an IP MTU of 1500 bytes on every OSPF enabled interface

- MS390 series switches do not support the multicast routing livetool

- Stacked MS390 series switches do not report traffic/client analytics correctly in all cases

- MS390 series switches do not support tagged traffic bypass for voice on ports with a Multi-Auth access policy

- Dynamic VLAN assignment from a RADIUS server on MS390 series switches must already be an allowed VLAN on the port for it to function

- MS390 series switches may not display link state on links at mGig speeds (2.5G, 5G)

- MS390 series switches do not currently support the following features: VRRP, SM Sentry, Syslog server, SNMP, Traceroute, IPv6 connectivity to dashboard, Meraki Auth, URL Redirection, MAC Whitelisting, RADIUS testing, RADIUS Accounting Change of Authorization, QoS, Power Supply State, PoE power status/usage

- ARP entry on L3 switch can expire despite still being in use (predates MS 10.x)

- SM Sentry does not support Windows based clients (predates MS 10.x)

- MS425 series switches do not forward frames larger than 9416 bytes (predates MS 10.x)

- MS350-24X and MS355 series switches do not negotiate UPoE over LLDP correctly (predates MS 10.x)

Have you updated to 11.31? Have you noticed any change if so?

Any chance you have found a solution with Meraki yet? We seem to be having the same issue within our organization as well. Meraki Support is telling us we have bad cables, but we have already ruled that out as a possibility.

Our next step is going to be replacing it with a regular Cisco switch to see if the problem persists.

----------------------------------------------------------------

I did update to 11.31 this morning, but the STP flapping continues. I noticed this upgrade did not reboot the switches and did not show a progress bar on the local GUI of each switch as it usually does. There was also nothing in the logs about the firmware upgrade. I really wish Meraki would make the logs more useful.

The Meraki tech suggested the following:

- Disable bonded NICs on our Citrix XenServers. We had about 4 hosts with bonded NICs (active-active) going to multiple switches. This causes a VM's MAC address to flap quickly between those NICs when the host rebalances traffic: the bond pins MAC address hashes to each NIC so that each VM normally stays on the same NIC, but if one NIC carries too much traffic, it rebalances all connections every 30 minutes. I don't see why this would cause STP flapping, since none of these VMs or hosts speak STP, and the ports that are STP-flapping are not the ports where the bonded NICs were. But Citrix does warn that bonded NICs should go to the same switch, because some switches cannot tolerate the MAC flapping during rebalancing. So I changed the bond type to active-passive, where only 1 NIC is used, and disabled the switch ports for all but 1 NIC on each of those servers. The STP flapping continued, though I haven't seen any more UDLD alerts (for the last 3 days).

- Re-create the aggregate groups. My 4 switches are not stacked; they are connected to each other with 4-8 cables each, which are aggregated. Those aggregate ports are what I currently see being flapped by STP, about every hour or so. The tech wants me to remove the aggregate groups and create them again. I have not tried this yet.
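The Citrix warning above comes down to MAC learning: with an active-active bond split across two switches, each rebalance makes an upstream switch see the same VM MAC arriving on a different port, which is what switches report as MAC flapping. The toy Python model below counts those moves from an ordered list of (MAC, port) observations. It is only an illustration of the idea, not how Meraki firmware detects flaps; the names and the threshold are hypothetical:

```python
from collections import defaultdict

def mac_moves(events):
    """Count how often each MAC address is re-learned on a different port.

    `events` is an ordered sequence of (mac, port) pairs, i.e. the port
    on which the switch saw each frame from that MAC.
    """
    last_port = {}
    moves = defaultdict(int)
    for mac, port in events:
        prev = last_port.get(mac)
        if prev is not None and prev != port:
            moves[mac] += 1  # same MAC now arriving on another port
        last_port[mac] = port
    return dict(moves)

def flapping_macs(events, threshold=3):
    """MACs that moved at least `threshold` times (threshold is hypothetical)."""
    return {mac: n for mac, n in mac_moves(events).items() if n >= threshold}

# A VM MAC rebalanced back and forth between two uplinks, vs. a stable host.
events = [("vm-a", 1), ("vm-a", 2), ("vm-a", 1), ("vm-a", 2),
          ("host-b", 3), ("host-b", 3)]
print(flapping_macs(events))
```

Switching the bond to active-passive, as described above, means the MAC should only move on failover, so the move count drops to near zero.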

Hi @Matttt -.-

We still see lots of these ..

We also tried the new switch software, but reverted since it did not help.

It seems that uplinks/trunks and workstation links are both affected; it is not selective 😉

All switch types are affected.

Each occurrence produces a burst of 10-15 entries in the log.

We have MS220 and MS225 switches with MS 11.31 software. No uplinks are affected (to Cisco "classic" and Meraki). Only HP inkjets, Apple computers and Dell notebooks with docking stations are affected.

Agree..

Seems "workstations" only ..

This is the response we received when I was having the issue back in the summer. The issue eventually stopped without me making any changes to our network or the firmware. It seems to me they have yet to find the bug that's causing all the problems everyone is experiencing.

Jumping in here.

I've got a similar issue.

I have 2 MS350-48FP where this is happening on any port with a specific Polycom Group Series 500 codec connected to it.

I have a number of other Polycom Group Series 500 codecs connected to these two switches and others that are not suffering the same issue.

LLDP is not enabled on the devices.

They are not PoE (I've tried disabling PoE).

I've got a ticket open with Polycom and they are looking into it but I'm not sure what the common denominator is yet.

I have a very similar issue. I have an MS220 (non-PoE) with an MR42 powered by an external PoE injector. The exact same problem is occurring and it's driving me crazy. The AP starts to reboot randomly, and when I check the event logs I see:

| Event type | Details |
|---|---|
| Port status change | port: 7, old: down, new: 1Gfdx |
| Port STP change | Port 7 designated→disabled |
| Port status change | port: 7, old: 1Gfdx, new: down |

Extremely frustrating.

AP Firmware is 26.6.1

Switch Firmware is 11.31

We manage a lot of Meraki networks and I see this in every network. It doesn't seem to have any negative impact; it's just annoying as heck. However, you folks are saying the APs randomly reboot, which I've not seen beyond firmware upgrades and forced restarts to clear a beacon freeze.

The MR16s have a known beaconing-freeze issue. I was told a fix was being written, but months have passed and the product is EOL, so I don't expect it to happen. They just want you to replace the older APs.

The MR18s I've seen have a similar beacon-freeze issue as well, but it's not considered a bug???

The MR32/33's processor gets overwhelmed under any real client density.

The MR42s have been very stable for us.

The MR52 is a great AP, no issues.

The MR53s have had pretty serious PoE issues for us. Almost all of them have been replaced with 52s. The 53s have PoE power-supply overheating issues in the AP: they randomly shut down due to the thermals and eventually come back online when they've cooled down.

Outdoor APs: no issues, other than the metric weatherproof thread connections. If you need to run flex conduit to them, order fittings online from Europe, because you're not going to find metric flex fittings around here.

I'm always happy to hear about bug fixes, so keep up the board posts

Thanks for your feedback, BNS. Really interesting to read. We also have issues where our MR32s are placed: slow internet or not responding. Meraki will not admit it's a problem with the MR32, but has offered us a "cheap" upgrade to the MR52.

Places where we have MR52's do not have issues.

We discovered that it was only the MR70s (running 26.0 software) that had the issue.

All MRs are now on 26.6.1 and there is no noise in the logs 😀

The remaining "noisemakers" are only workstations, as others in this forum have seen.

I should clarify, this actually drops the codec off the network.

Any traction on this?

@LeeVG It depends 😀

We saw this quite a lot, but have now upgraded the switch and AP software.

Now the entries only appear in association with workstations and cabled endpoint devices like printers or legacy Ethernet equipment.

We no longer regard it as an issue, since we receive no transmission-problem tickets originating from these devices/ports.

If the people in this forum with cameras/video streams get this on their device ports, I can see the problem.

Would love to hear if that is the case, since we are brewing up some streaming stuff 😁

Update: I now have a ticket open with Meraki support, as this is affecting more ports and devices.

None of the devices currently affected are PoE.

Only the codecs are actually going down on the switch.

What happened to me:

After planned power maintenance, more than ten Meraki MS220-8P switches (firmware 11.20) in the same network never came back in terms of management. The whole network was unstable. We have mostly Meraki but also Cisco Small Business switches there. To stabilize the network I had to power off one stack member (Meraki MS410-16); we are running L3 on this stack. After this we could finally access the Cisco SB switches via SSH. The Meraki MS220-8P switches are red in the Meraki dashboard but seem to work as switches; however, every 5 minutes the devices connected to them experience 20-60% packet loss. Powering them down and up didn't help. Meraki's advice: perform a factory reset on all MS220-8P switches.

Topology: 12xMS220---2xMS410---CiscoSmallBusiness---WAN-Router

Hello

I have the same problem (only on one port).

As a test, I'm trying to switch the port speed from AUTO to a fixed speed.

Best regards

Christian

LOG

| Time | Event type | Details |
|---|---|---|
| Sep 29 11:42:52 | Port STP change | Port 24 disabled→designated |
| Sep 29 11:42:52 | Port status change | port: 24, old: down, new: 1Gfdx |
| Sep 29 11:42:48 | Port STP change | Port 24 designated→disabled |
| Sep 29 11:42:48 | Port status change | port: 24, old: 1Gfdx, new: down |
| Sep 29 11:20:43 | Port STP change | Port 24 disabled→designated |
| Sep 29 11:20:43 | Port status change | port: 24, old: down, new: 1Gfdx |
| Sep 29 11:19:33 | Port STP change | Port 24 designated→disabled |
| Sep 29 11:19:33 | Port status change | port: 24, old: 1Gfdx, new: down |
| Sep 27 23:24:40 | Port STP change | Port 24 disabled→designated |
| Sep 27 23:24:40 | Port status change | port: 24, old: down, new: 1Gfdx |
| Sep 27 23:23:29 | Port STP change | Port 24 designated→disabled |
| Sep 27 23:23:29 | Port status change | port: 24, old: 1Gfdx, new: down |
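Note that every STP change in this log happens at the same second as a link change, which fits the earlier point that the STP messages are a byproduct of the port going down. To see how long each drop lasted, you can pair each "new: down" row with the next link-up on the same port. A minimal Python sketch, assuming the rows are pasted as text in the pipe format above; the year is not in the log, so `year=2020` is an assumption, and `outages` is a hypothetical name:

```python
import re
from datetime import datetime

# Matches "Port status change" rows as pasted from the dashboard event log.
STATUS_RE = re.compile(
    r"\|\s*(?P<ts>\w{3} +\d+ \d{2}:\d{2}:\d{2})\s*\|\s*Port status change\s*\|"
    r"\s*port: (?P<port>\d+), old: (?P<old>\w+), new: (?P<new>\w+)"
)

def outages(log_text, year=2020):
    """Return (port, went_down, came_up, seconds) for each down->up pair.

    The pasted log has no year, so `year` is an assumption. The dashboard
    lists newest entries first, so events are sorted oldest-first.
    """
    events = []
    for m in STATUS_RE.finditer(log_text):
        ts = datetime.strptime(f"{year} {m['ts']}", "%Y %b %d %H:%M:%S")
        events.append((ts, int(m["port"]), m["old"], m["new"]))
    events.sort()
    down_since = {}
    result = []
    for ts, port, old, new in events:
        if new == "down":
            down_since[port] = ts  # link lost: remember when
        elif old == "down" and port in down_since:
            start = down_since.pop(port)  # link restored: close the outage
            result.append((port, start, ts, (ts - start).total_seconds()))
    return result

christian_log = """
| Sep 29 11:42:52 | Port status change | port: 24, old: down, new: 1Gfdx | |
| Sep 29 11:42:48 | Port status change | port: 24, old: 1Gfdx, new: down | |
| Sep 29 11:20:43 | Port status change | port: 24, old: down, new: 1Gfdx | |
| Sep 29 11:19:33 | Port status change | port: 24, old: 1Gfdx, new: down | |
| Sep 27 23:24:40 | Port status change | port: 24, old: down, new: 1Gfdx | |
| Sep 27 23:23:29 | Port status change | port: 24, old: 1Gfdx, new: down |
"""
for port, start, end, secs in outages(christian_log):
    print(f"port {port}: down at {start:%b %d %H:%M:%S} for {secs:.0f} s")
```

On this log it gives drops of 71 s, 70 s and 4 s on port 24, oldest first.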

Did anyone ever get a permanent solution to this?

I'm on a wild goose chase with an issue and keep seeing entries like this in the logs of many of our switches (MS225-48FP, firmware 12.28). I'm having a hard time not blaming the switch. Our issue is related to a Mitel phone system and Mitel-brand phones.

| Time | Event type | Details |
|---|---|---|
| Jan 19 12:46:40 | Port status change | port: 2, old: down, new: 1Gfdx |
| Jan 19 12:46:34 | Port status change | port: 2, old: 10fdx, new: down |
| Jan 19 12:46:29 | Port status change | port: 2, old: down, new: 10fdx |
| Jan 19 12:46:26 | Port status change | port: 2, old: 1Gfdx, new: down |
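In this log port 2 drops, comes back at 10 Mbps for a few seconds, then renegotiates at 1 Gbps. A similar 1G/10M alternation is reported further down the thread. A quick Python sketch for spotting such downshifts in a longer exported log follows. The token format (`1Gfdx`, `100fdx`, `10fdx`) is inferred from the excerpts in this thread rather than from Meraki documentation, and the 1000 Mbps expectation assumes gigabit endpoints:

```python
import re

# Token format (e.g. "1Gfdx", "100fdx", "10fdx") is inferred from the
# dashboard log excerpts in this thread, not from Meraki documentation.
LINK_UP_RE = re.compile(
    r"port: (?P<port>\d+), old: down, new: (?P<num>\d+)(?P<giga>G?)(?P<duplex>[fh])dx"
)

def link_ups(log_text):
    """Yield (port, speed_mbps, full_duplex) for every link-up entry."""
    for m in LINK_UP_RE.finditer(log_text):
        speed = int(m["num"]) * (1000 if m["giga"] else 1)
        yield int(m["port"]), speed, m["duplex"] == "f"

def downshifts(log_text, expected_mbps=1000):
    """Link-ups that negotiated below the expected speed (1 Gbps assumed)."""
    return [event for event in link_ups(log_text) if event[1] < expected_mbps]

mitel_log = """
| Jan 19 12:46:40 | Port status change | port: 2, old: down, new: 1Gfdx | |
| Jan 19 12:46:34 | Port status change | port: 2, old: 10fdx, new: down | |
| Jan 19 12:46:29 | Port status change | port: 2, old: down, new: 10fdx | |
| Jan 19 12:46:26 | Port status change | port: 2, old: 1Gfdx, new: down |
"""
print(downshifts(mitel_log))
```

For this excerpt it flags the 10 Mbps link-up on port 2. A downshift on its own does not identify the cause: cabling, the endpoint's NIC power management, or autonegotiation trouble are all possible.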

I would be curious if there was a fix too. Our MS350-48 seems to only do it on 3 ports. The odd thing is that one of them has nothing plugged into it. Another just goes down and up for a couple of seconds in bursts throughout the day and night. The third also goes down and up, but at times comes back at 1Gfdx and at other times at 10fdx. I changed its network cable and will see what happens. I am leaning toward power management on the endpoint's network port, but we have about 20 of the same systems with the same setup, and only two of them have issues.

Looks like the last post about this was in 2021, but it's still happening to us in 2022. We use Avaya VoIP phones with PoE, and occasionally they'll just "die" and start boot-looping. If I plug a (working) spare phone into the same port, it starts boot-looping too. If we leave a "dead" phone alone long enough (like, days) before plugging it back into the same port or a different one, it starts working again. So the pattern seems to be something like this:

- port freaks out

- phone boot loops

- port stays freaking out for hours or days, "bricking" any phones that come in contact, so that the same phone can't be used anywhere on the network

- after some hours or days of being inactive, all affected switch ports and phones recover and work normally again

I've tried a ton of different settings on the phones, on switch ports, on the IP phone system... to no effect. I expected it was something related to STP so that was the first thing I turned off, but it didn't care about that. I'll keep trying things over time, but so far the only solution is to put everything in quarantine for a couple days whenever it happens.

I can only suggest making a support case with Meraki, or did you already?

@sas-brockett @Anonymous

Well, we see it A LOT too, and I was hoping that people would share any info or experience they have had (e.g. with support cases) or any remedy that helped.

We have a feeling that ports serving e.g. phones, or devices with built-in switching such as docks, tend to do this, but would like to hear other opinions.

That seems to be right. Our phones can daisy-chain an extra Ethernet port, making them effectively a 2-port switch. It would make perfect sense if someone plugged the same cable into both ports and created a loop that way, but that's not happening.

We use IP phones with a 2 port switch in, but they are Cisco ones and we don't get any issues with them. We also use a lot of docking stations now and they are okay. What firmwares are you running?

Hi 🙂

Docks: a merry mix of Lenovo and Dell docks with different firmware.

Switches: 14.33.1

Seems like I'm having a similar issue. My firmware is MS 15.21.1. Does anyone know if this is still an ongoing issue?

Below is the error I'm getting:

| Time | Category | Event type | Details |
|---|---|---|---|
| 9/19/23 16:42 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled |
| 9/19/23 16:42 | Switch port | Port status change | port: 23, old: 1Gfdx, new: down |
| 9/19/23 16:42 | Switch port | Port cycle | ports: 23, downtime: 5 |
| 9/19/23 16:42 | Switch port | Cable test | ports: 23 |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: down, new: 1Gfdx |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: 1Gfdx, new: down |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: down, new: 1Gfdx |
| 9/19/23 16:30 | Switch port | Port cycle | ports: 23, downtime: 5 |
| 9/19/23 15:20 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled |
| 9/19/23 15:20 | Switch port | Port status change | port: 23, old: 100fdx, new: down |
| 9/19/23 15:20 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated |
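This log mixes spontaneous drops with "Port cycle" and "Cable test" entries, which presumably come from someone running the Dashboard's cycle-port and cable-test tools while troubleshooting (an inference; the post does not say so). One rough way to separate the expected drops from the spontaneous ones is to ignore any link-down that follows a "Port cycle" entry within a short window. A minimal Python sketch, assuming the four-column pipe format above; the timestamps only have minute resolution, and the 2-minute window and the name `unexplained_downs` are arbitrary illustrative choices:

```python
import re
from datetime import datetime, timedelta

# One row of a four-column event log: | time | category | event type | details |
ROW_RE = re.compile(
    r"\|\s*(?P<ts>\d+/\d+/\d+ \d+:\d+)\s*\|\s*(?P<cat>[^|]+?)\s*\|"
    r"\s*(?P<evt>[^|]+?)\s*\|\s*(?P<details>[^|]+?)\s*\|"
)

def unexplained_downs(log_text, window=timedelta(minutes=2)):
    """Link-down times NOT preceded by a "Port cycle" entry within `window`.

    Timestamps only have minute resolution, so the window is deliberately
    loose; the 2-minute default is an arbitrary illustrative choice.
    """
    rows = [(datetime.strptime(m["ts"], "%m/%d/%y %H:%M"), m["evt"], m["details"])
            for m in ROW_RE.finditer(log_text)]
    cycles = [ts for ts, evt, _ in rows if evt == "Port cycle"]
    downs = [ts for ts, evt, details in rows
             if evt == "Port status change" and details.endswith("new: down")]
    return sorted(
        t for t in downs
        if not any(timedelta(0) <= t - c <= window for c in cycles)
    )

ms15_log = """
| 9/19/23 16:42 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled | |
| 9/19/23 16:42 | Switch port | Port status change | port: 23, old: 1Gfdx, new: down | |
| 9/19/23 16:42 | Switch port | Port cycle | ports: 23, downtime: 5 | |
| 9/19/23 16:42 | Switch port | Cable test | ports: 23 | |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated | |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: down, new: 1Gfdx | |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled | |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: 1Gfdx, new: down | |
| 9/19/23 16:31 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated | |
| 9/19/23 16:31 | Switch port | Port status change | port: 23, old: down, new: 1Gfdx | |
| 9/19/23 16:30 | Switch port | Port cycle | ports: 23, downtime: 5 | |
| 9/19/23 15:20 | Spanning Tree | Port RSTP role change | Port 23 designated→disabled | |
| 9/19/23 15:20 | Switch port | Port status change | port: 23, old: 100fdx, new: down | |
| 9/19/23 15:20 | Spanning Tree | Port RSTP role change | Port 23 disabled→designated |
"""
print(unexplained_downs(ms15_log))
```

On this excerpt only the 15:20 drop (from 100fdx) is left unexplained, so that is the one worth chasing.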

It's still happening. We see it on various switch models and software versions, including the recommended ones.

Our tentative conclusion is that it is most often triggered by workstations/docks or printers.

Seems like I'm having a similar issue. My firmware is MS 16.9. Is there any solution for this problem now? It seems to have been going on for a long time, and still neither the community nor Cisco Meraki has been able to find a fix.

Below is the error I'm getting:

Funny thing that this still persists across all the switch software trains.