Firewall Simultaneous Connections


We had an issue today where an Airprint server (Presto) spawned like 400 processes on a server. Each process, apparently, reaches out to Collobos for telemetry purposes / licensing / who knows what.

Over the past 24 hours, only around 4GB has been sent to Collobos, but Meraki reports about 1,200,000 flows to Collobos. I've never seen anything even close to that.

The impact on network throughput was negligible, but what alerted us to the issue was that we started seeing random packet loss and high latency. I'm assuming this is because we were hitting the "simultaneous connection" limit on the MX84.

I'm trying to answer three questions:

- How can I see how many connections the firewall is servicing? (the actual device utilization on the summary report was less than 25%)

- Is there a way that I can be alerted when the performance of the MX is suffering?

- How does a "flow" equate to a "connection" (in other words, is there a relationship)?

Thanks!
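For a sense of scale, the figures above work out to only a few kilobytes per flow and roughly fourteen new flows per second on average. A quick sketch of that arithmetic, assuming "4GB" means 4 × 10^9 bytes and that the 1,200,000 flows were spread evenly over the full 24 hours (in practice they were probably burstier):

```python
# Figures from the post; the decimal-gigabyte reading is an assumption.
bytes_sent = 4 * 10**9
flows = 1_200_000
seconds = 24 * 60 * 60

bytes_per_flow = bytes_sent / flows
flows_per_second = flows / seconds

print(f"{bytes_per_flow:.0f} bytes per flow")
print(f"{flows_per_second:.1f} flows per second")
```

Lots of very small flows like this would explain why throughput looked fine while the flow count was far out of the ordinary.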


Hey Guys,

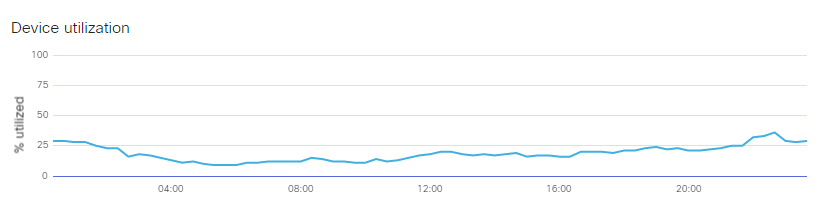

I realize this isn't exactly what you're after, but it's something anyway. Meraki added "Utilization" data to the Summary Reports page for MXes. The way I understand it is it's basically a composite metric of CPU and memory. It looks like so:

It looks like this data is also available via the API is you wanted to create a little utilization grapher script...

https://documentation.meraki.com/MX-Z/Monitoring_and_Reporting/Load_Monitoring


I've asked a similar question and only support has visibility into the MX CPU utilization etc. Seems like an obvious thing to include in the dashboard with device uptime etc...

Regarding the connections, the only real thing they've ever pointed me to is the number of clients, although that is a crude estimate of the amount of traffic, since one client could be generating millions of requests and another could be silent.

In summary, we have little to no visibility into how hard the MX is working. At one of our sites, I had to do a trial of an MX100 to finally diagnose that the MX84 was overtaxed.


@Adam, thanks. So support wasn't even able to provide input on your MX84 "overtaxed" issue?


@lpopejoy wrote: @Adam, thanks. So support wasn't even able to provide input on your MX84 "overtaxed" issue?

Sadly not really. They keyed in on the fact that our total clients were well over what the MX84 was rated for. We were getting occasional spikes in latency, random dropped pings, and choppiness on our Cisco VOIP calls. We spent a lot of time troubleshooting the circuit and figured it was worth the effort to test/try the larger model MX. And magically all the issues went away. A little visibility into the device CPU utilization etc would have made the whole process much easier to troubleshoot.


That's pretty frustrating. I'm hopeful that this feature in 14.29 will give more visibility at some point:

New features

- Added firmware support for more detailed device utilization reporting. This is not yet available via the Dashboard UI


Interesting, I didn't know that was available via API. I guess that must be the same value that is inside the dashboard as "device utilization" that you referred to.


...and apparently there is some consideration here of traffic. According to the API doc you referenced it says: "Currently, the load value is calculated based upon the CPU utilization of the MX and it's traffic load. "


That summary report would be most useful for environments experiencing a consistently high load or long periods of load, so it's definitely worth looking at. In my case, we had intermittent spikes, which wouldn't show up in that style of averaged report, although they might be capturable via the API with a shorter timeframe. If they let the timeframe be dropped from 1 day to something smaller like 1 hour, it could become more interesting.
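To illustrate why a day-long average hides short spikes, here is a small sketch with made-up numbers: a hypothetical day of per-minute load values that sit at 20 except for one 10-minute burst at 95. None of these values come from a real MX.

```python
from statistics import mean

# Hypothetical per-minute load values for one day:
# 1430 quiet minutes plus a single 10-minute spike.
samples = [20] * 1430 + [95] * 10

daily_average = mean(samples)
worst_minute = max(samples)

print(f"daily average: {daily_average:.1f}")
print(f"worst minute:  {worst_minute}")
```

The average barely moves off the baseline while the worst minute is close to the top of the scale, which is why per-minute samples are more useful for this kind of problem.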


I thought I could maybe be clever and trick the dashboard into showing me just one hour by manipulating the URL string to just have a date range of one hour.

t0=1529971200&t1=1529971260

That's at the end of the URL, and it's just Unix time for start and end. But, Meraki saw me coming on this one and all I'm getting is spinning wheels 😞

Oh well.
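For anyone repeating the experiment: `t0` and `t1` are Unix timestamps in seconds, so a one-hour window needs them 3600 apart, whereas the two values quoted above are 60 apart. A minimal sketch for building the pair follows; `window_params` is a hypothetical helper name, and nothing here suggests the dashboard will accept a shorter range.

```python
from datetime import datetime, timedelta, timezone


def window_params(start, minutes):
    """Build the t0/t1 query-string fragment for a window of `minutes` minutes."""
    end = start + timedelta(minutes=minutes)
    return f"t0={int(start.timestamp())}&t1={int(end.timestamp())}"


# Same starting point as the URL above: 2018-06-26 00:00:00 UTC.
start = datetime(2018, 6, 26, 0, 0, tzinfo=timezone.utc)
print(window_params(start, 60))
```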


The documentation here (https://documentation.meraki.com/MX-Z/Monitoring_and_Reporting/Load_Monitoring) says that the value retrieved from the API will be averaged across the past 60 seconds. That's close enough to give pretty good information.


Yeah totally. 60 seconds is very reasonable. Just have to write something to grab it and display it.

That might be a great little exercise for me right now since I'm trying to learn some Python. If I do do it I'll post back here. No promises though 😛


Haha, I hear you!


I would be interested in what you create even if you consider it a hack job.

Also, regarding Python: I found this site good for learning another language. I came across it when I was looking for an IDE for Python.

http://knuth.luther.edu/~leekent/IntroToComputing/


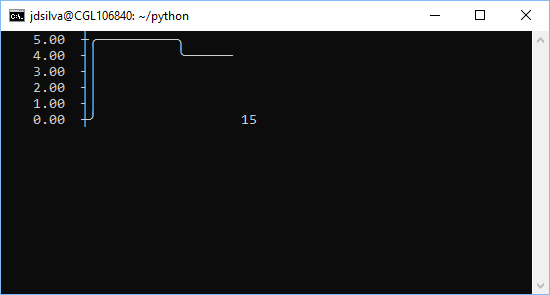

@Abuse_Tracker Don't say I didn't warn you... 😛

Super simple CLI MX load grapher. This requires the asciichartpy and meraki modules (you can install both via pip). It'll run for 60 minutes, pulling the load value once a minute and adding it to the graph.

    #!/usr/bin/python3
    from curses import wrapper
    import time

    from asciichartpy import plot
    from meraki import meraki

    print("Hello and welcome to the MX Utilization Grapher Utility - Curses Edition")
    api_key = input("Please enter your API key: ")
    network_id = input("Please enter the Network ID for the MX: ")
    serial_number = input("Please enter the serial number of the MX: ")

    mx_perf = [0]


    def main(stdscr):
        stdscr.addstr(0, 0, "Initializing.")
        stdscr.refresh()
        for _ in range(3):
            perf_score = meraki.getmxperf(api_key, network_id, serial_number, suppressprint=True)
            mx_perf.append(perf_score['perfScore'])
            time.sleep(1)
            stdscr.addstr(".")
            stdscr.refresh()

        for each in range(1, 60):  # Change 60 to however long, in minutes you want to run this
            perf_score = meraki.getmxperf(api_key, network_id, serial_number, suppressprint=True)
            mx_perf.append(perf_score['perfScore'])
            stdscr.clear()
            stdscr.addstr(0, 0, plot(mx_perf))
            # display a counter to show how many minutes the graph has been running
            stdscr.addstr(str(each))
            stdscr.refresh()
            # control how wide the displayed graph is
            if len(mx_perf) > 60:
                mx_perf.pop(1)
            # Sleep for 60 seconds
            # Perf Score is only updated once a minute anyway
            time.sleep(60)


    wrapper(main)
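On the original question about being alerted when the MX is struggling: the same once-a-minute samples could feed a simple threshold check. This is only a sketch. The function name `sustained_high_load`, the threshold of 80, and the three-sample run are all assumptions for illustration, not Meraki recommendations, and the right values would depend on what load scores your own MX shows when things go wrong.

```python
def sustained_high_load(samples, threshold=80, run_length=3):
    """Return True if the last `run_length` samples are all at or above `threshold`."""
    if len(samples) < run_length:
        return False
    return all(value >= threshold for value in samples[-run_length:])


# Example with made-up load values, one per minute.
history = [12, 15, 40, 85, 90, 88]
if sustained_high_load(history):
    print("MX load has been high for 3 samples in a row")
```

In the grapher above, something like this could be called on `mx_perf` after each new value is appended, and the `print` swapped for an email or chat notification. Requiring several high samples in a row avoids firing on a single noisy reading.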
