Packet loss from Azure vMX to spokes

I wonder if anyone else has run into this problem before.

I have a client using a vMX in Azure and they are reporting 4% packet loss to all the VMs in Azure from the spokes. It doesn't matter which VM is being pinged, and it doesn't matter which spoke the ping is being done from. Spokes can ping other spokes with zero packet loss.

The issue happens consistently 24x7. If you send about 100 pings, you will lose about 4 of the packets. The packet loss tends to be spread out.

If you do a ping within the Meraki dashboard there is zero packet loss between the vMX and any spoke that I test. There are no interesting events in the event viewer, and the status bar for the vMX and spokes is solid green.

The VMs in Azure can ping the vMX with zero packet loss. The issue only happens when traffic is routed through the vMX to a remote spoke (or from a remote spoke being routed through the vMX to the Azure VMs).

The vMX and all MX's are running 14.39. I have tried rebooting the vMX and a small selection of MX's. I have also had a small number of the Windows VMs rebooted. The VMs are running different versions of Windows Server as well (so there is nothing in common).

Nothing has made a difference.

Has anyone had issues with small amounts of packet loss to VMs in Azure connected via a vMX?
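
For anyone wanting to quantify a pattern like the one described above (roughly 4 lost out of 100, spread out rather than in bursts), here is a minimal Python sketch. It assumes you have already recorded each probe as replied or not; the `loss_stats` helper and the sample data are hypothetical and not taken from any Meraki or Azure tool.

```python
from typing import List, Tuple


def loss_stats(replies: List[bool]) -> Tuple[float, int]:
    """Return (loss percentage, longest run of consecutive lost probes)."""
    if not replies:
        raise ValueError("no probes recorded")
    lost = replies.count(False)
    longest = current = 0
    for ok in replies:
        # Reset the run on a reply, extend it on a loss.
        current = 0 if ok else current + 1
        longest = max(longest, current)
    return 100.0 * lost / len(replies), longest


# Hypothetical sample: 100 probes with 4 isolated losses.
sample = [True] * 100
for i in (7, 31, 58, 90):
    sample[i] = False

pct, burst = loss_stats(sample)
print(f"loss={pct:.1f}% longest_burst={burst}")
```

A longest burst of 1 alongside a steady loss percentage matches the "spread out" description; long bursts would point more toward a link or tunnel flap.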

Hey @PhilipDAth

Not sure if you have resolved this yet, but out of curiosity, do you see the same pattern of packet loss with other types of traffic, such as telnet or other TCP traffic?

Do the spokes have multiple uplinks? If so, create an SD-WAN policy to push traffic to the vMX via a specific uplink. Or test from a spoke that has a single uplink and see if there's any difference.

Cheers,

-Alex

Please mark it as a solution if it solved your issue so others can benefit from it 🙂

No, it is not resolved. I just did a packet capture on the VMX and it does not appear to be affecting TCP traffic. Only ICMP ping traffic.

All spokes have dual uplinks. The spokes are in more than one country and using more than one ISP.

We do have an SD-WAN policy applied.

To me it appears to be an issue with VMX running in Azure. If I promote some spokes to hubs, there is no loss between them. That is the only common point.

If you have a support case opened already for this one, I would recommend continuing to work with the support team. It sounds like this one might involve collaboration between Meraki and Azure support.

If you can PM me the case info (please don't post it publicly here), I'll be happy to follow-up with the support team for you.

Cheers,

-Alex

>If you have a support case opened already for this one,

I did have a support case open. We can ping between the public IP address of the VMX and the public IP address on the spokes with no packet loss. We can ping between VMX to the spokes over AutoVPN with no packet loss.

After that the support person said it is not a Meraki issue and closed the case.

I'll probably let it rest now. I was just wondering if others were having an issue.

If it becomes a bigger issue (e.g., if it affects more than just ICMP traffic), I'd say reopen the support case and go from there.

Cheers,

-Alex

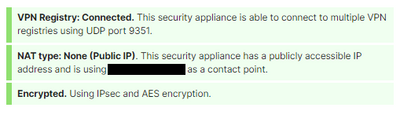

I am seeing the same results in our environment. To add a bit more: when testing from outside our network, I see the same packet loss when I ping the WAN IP of the vMX in Azure. Usually, when I run a continuous ping from my computer, the loss ranges from 3% to 9%.

I had a case open with Meraki, but I was told it's just a VM, it looks set up correctly, and I should open a case with MS. MS initial support wants to get logs from the Meraki, as they don't see a problem on their side. Me, I just want it to work so we can start moving production servers into the environment.

I have instances in Azure in both the US and Australia that are experiencing exactly the same behavior.

To add a bit more: when I'm on the vMX and ping my other MX devices, there is no packet loss, and when I ping external addresses, there is no packet loss. It's only inbound to the vMX that I see the packet loss.

I would love some help if others have seen this and resolved it. I have tried the latest 14.x and 15.x code to see what I can do. I did notice that in the Azure marketplace there is a v2 and a v3 of the Meraki device, but I can't really find any documents on the key differences or on whether I should choose one over the other.

When I have run into this problem (not often), the only way I have found to fix it is to delete and re-deploy the VMX. When it works, it is reliable. When it is broken, it stays broken.

Meraki and Azure support are no help in this area.

I have done lots of packet captures (using Meraki's own packet capture tool) for Meraki support showing packets coming in but not leaving the vMX.

On a separate note, I have also had issues running StrongSwan on Ubuntu in Amazon AWS on t2.* instances when using AES128 encryption. Same issue: it either works or there is a constant low level of packet loss. In Amazon, if I stop/start the instance (so it moves onto different physical hardware), it works again.

I spent quite a bit of time with Amazon support on this issue, and it looks like some of the Intel chips have a microcode issue (let's call it a microcode bug). It might be related to all the issues Intel ran into with the security vulnerabilities, solving one thing and breaking another.

With StrongSwan if I run on a different instance size everything works. If I use something other than AES128 it also works.

AutoVPN also uses AES128.

While working with MS support, I used the PsPing tool to test against the WAN side of the Meraki. When I test using TCP instead of a normal ICMP ping, I don't have packet loss. Reading through this board, it would appear you saw the same; is that true? Also, with only ICMP being dropped, did you find that your applications behind the Meraki had issues, or should I just not worry about it?

I will try the redeploy again, but I know I have already done that once or twice while trying to fix a routing issue.

I noticed when deploying in the MS portal that there was an option for v2 and v3. We chose v3, but I'm curious whether you know anything about the two versions and which one is best to choose in Azure. I can't find much detail on the difference between the two.
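
If PsPing is not available on the machine you are testing from, the TCP-versus-ICMP comparison discussed above can be roughly approximated with a short Python sketch that times repeated TCP connects. This is not how PsPing itself is implemented; `tcp_probe` and `loss_percent` are hypothetical helper names, and the target host and port are whatever TCP service you know is listening behind the vMX.

```python
import socket
import time
from typing import List, Optional


def tcp_probe(host: str, port: int, count: int = 20,
              timeout: float = 1.0) -> List[Optional[float]]:
    """Attempt `count` TCP connects; return the connect time in ms, or None on failure."""
    results: List[Optional[float]] = []
    for _ in range(count):
        start = time.perf_counter()
        try:
            # A completed three-way handshake counts as a successful probe.
            with socket.create_connection((host, port), timeout=timeout):
                results.append((time.perf_counter() - start) * 1000.0)
        except OSError:
            # Covers timeouts, refusals and unreachable hosts.
            results.append(None)
    return results


def loss_percent(results: List[Optional[float]]) -> float:
    """Percentage of probes that failed to connect."""
    return 100.0 * sum(r is None for r in results) / len(results)
```

For example, `loss_percent(tcp_probe("10.100.0.10", 3389))` would probe a hypothetical RDP server address. Keep in mind that TCP retransmits lost SYNs within the timeout, so a TCP connect test can hide loss that an ICMP ping shows; a clean TCP result does not by itself prove that no packets are being dropped.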

I experienced traffic loss of all traffic types. The remote sites connecting to this VMX had dual Internet links so you could look at the SD-WAN graphs in the Meraki Dashboard - and those graphs also showed the same packet loss.

I've only used the v3 CPU option. It's cheaper and faster.

Did you ever do anything to get this working without packet loss? We are trying to move workloads into Azure, but the packet loss scares us. We have moved a couple of servers out there, and the people who RDP to them experience drops and reconnects.

I have only come across two scenarios. Either it loses packets or it does not.

If you have the case where it has packet loss, I have only found one way to fix it: delete the VMX and redeploy. This usually fixes it permanently.

Thanks for bringing this up.

I know this is an old thread, but looking around, this is exactly my issue, and it's July 2021 and I still don't see any definitive answers. I'd hate to have to tear down this vMX and set it back up, but before I do, I'm just checking whether anyone here in 2021 has had, or is having, this issue, and whether there is any resolution.

I have two vMXs (Mediums) for two different regions, running about five to ten VMs in Azure and connecting as hubs, since there is a requirement for multi-site connectivity for RDs and such. Anyway, one vMX in the UK works fine with no issues; the other one, here in the US on the east coast, has the same issue with dropped packets as described here.

I know it's the vMX: if I PsPing multiple IPs, both public and private, I lose packets at the vMX IP and at all servers behind it, which show packet loss at the same times as I work my way back out from the VNet subnets, yet there are no drops on the MS public IP.

We are having that same issue in US East but not North Central. @CMTech1, I redeployed but no luck. FYI, don't upgrade the public IP SKU; I broke our environment by doing that. I have packet loss within the same VNet from a prod server to the Meraki internal interface (different subnet) and also over the Meraki via third-party VPN. I saw a thread on Reddit that mentioned removing the route table from the Meraki subnet fixed the packet loss. I'm going to try it off hours and see what happens.

@Agio-Networks, as of today we're still seeing the random drops. We see the issue at all three locations we have (US East 2, US West and UK South), so if I had to point a finger, though I really don't want to, I'd say it's a Meraki issue. Anyway, we have an open, ongoing case with Meraki, and the most recent change they made (one we don't have access to make ourselves) was to change the default MTU from 1500 to 1420, to allow more room for the Auto VPN header, they said. However, after another week of monitoring, it seems we are still dropping random packets, so that didn't resolve the issue and I have to reply back with the bad news. If I ever do get a resolution, I'll be sure to post it here. I'm just surprised others haven't seen this in their network monitors.
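
As a rough aid for checking whether an MTU change like the one above (1500 to 1420) is relevant on a given path, the arithmetic below works out the largest ping payload that fits in one unfragmented IPv4 packet. It assumes a 20-byte IPv4 header without options and an 8-byte ICMP echo header; it does not model the Auto VPN encapsulation overhead itself, which is not stated in this thread.

```python
IPV4_HEADER = 20  # bytes, IPv4 header without options
ICMP_HEADER = 8   # bytes, ICMP echo header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER - ICMP_HEADER


for mtu in (1500, 1420):
    print(f"MTU {mtu}: max ping payload {max_ping_payload(mtu)} bytes")
```

The resulting sizes (1472 and 1392 bytes) can be fed to a don't-fragment ping, for example `ping -f -l 1392 <host>` on Windows or `ping -M do -s 1392 <host>` on Linux. If larger payloads fail consistently while smaller ones pass, MTU is worth pursuing; random loss at every size, as reported here, suggests a different cause.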

I had the same issue: consistent packet loss (normally 1% - 2%, but sometimes spiking to 35%+) on both of my Azure VMXs in 2 different regions. When I placed a high load on the VMX's to/from Azure, the AutoVPN would drop. I tried everything noted above to no avail. I finally called Meraki Support today and spoke to Sanket who did a great job working this issue with me. He found a recent internal support article related to this issue. The VMX setup guide failed to mention this configuration item and has since been updated as of October 2021.

To fix the issue, log in to your Azure portal and open your Route Table(s). Select Subnets and disassociate the subnet that the Azure router and the VMX router share. In my case, it was subnet 10.100.0.0/24. The Azure default gateway was 10.100.0.1 and the VMX was 10.100.0.4. I'm assuming the two were fighting over the traffic and causing the packet loss.

After disassociating the subnet, the packet loss stopped, performance noticeably improved, and the AutoVPN drops stopped. I didn't experience any routing or connectivity issues. No downtime was incurred either when I applied the disassociation in Azure.

I hope this helps anyone who's been troubleshooting this for months like me.
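
If you have many route tables and are not sure which association covers the vMX, the check described above can be sketched in Python with the standard `ipaddress` module. This only inspects an inventory you supply; it does not call Azure. The route table names and the extra subnets are hypothetical, and only 10.100.0.0/24 and 10.100.0.4 come from the post above.

```python
import ipaddress
from typing import Dict, List


def subnets_to_review(vmx_ip: str, associations: Dict[str, List[str]]) -> List[str]:
    """Return 'route-table: subnet' entries whose associated subnet contains the vMX IP."""
    ip = ipaddress.ip_address(vmx_ip)
    hits = []
    for table, subnets in associations.items():
        for cidr in subnets:
            if ip in ipaddress.ip_network(cidr):
                hits.append(f"{table}: {cidr}")
    return hits


# Hypothetical inventory of route tables and the subnets associated with them.
associations = {
    "rt-hub": ["10.100.0.0/24", "10.100.1.0/24"],
    "rt-workloads": ["10.100.2.0/24"],
}
print(subnets_to_review("10.100.0.4", associations))
```

Any entry it prints is a candidate for the disassociation step; subnets that hold only workload VMs keep their route table so that traffic for the spokes is still sent to the vMX.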

Thank you CIO1!

We had this exact same issue. We were dropping packets, had very slow file transfers and ping responses in the 800-1000ms range.

We removed the subnet the vMX used in Azure from the Azure routing table and it fixed our issue immediately without an outage. 🤞 that it stays that way. CIO1, thank you for sharing!

Hi @CML_Todd ,

But how does the vMX appliance know where to send the packets without a route table associated with it?

I have the following scenario: my vMX appliance is running in VPN concentrator mode as a hub, where it has established many S2S VPN connections with spokes. The hub is advertising some networks whose traffic then needs to be sent to an NVA in Azure (in the same subnet as the vMX appliance) for further filtering and so on. Without a route table associated with its subnet, how does the vMX appliance know to send these packets to the NVA? Note that I am unable to set up static routes manually on the vMX since it is running in VPN concentrator mode. I tried removing the route table, and the connection from the spokes to Azure resources broke.

If you can reply, I would really appreciate it.

Thank you in advance!

Hi CIO1

But how does the vMX appliance know where to send the packets without a route table associated with it?

I have the following scenario: my vMX appliance is running in VPN concentrator mode as a hub, where it has established many S2S VPN connections with spokes. The hub is advertising some networks whose traffic then needs to be sent to an NVA in Azure (in the same subnet as the vMX appliance) for further filtering and so on. Without a route table associated with its subnet, how does the vMX appliance know to send these packets to the NVA? Note that I am unable to set up static routes manually on the vMX since it is running in VPN concentrator mode. I tried removing the route table, and the connection from the spokes to Azure resources broke.

If you can reply, I would really appreciate it.

Thank you in advance!

This was 100% our issue. Disassociating the Meraki Management subnet from the route table was an instant fix and there was no interruption to our traffic. Thank you, Thank you, Thank you!!! I have been fighting this packet loss for months. I had tickets with Meraki and Azure and nobody could help us.

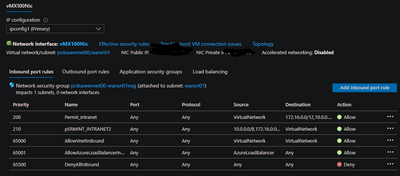

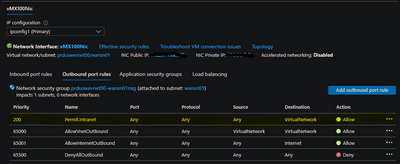

Well, I figured out what worked for us, and disassociating the subnet is key to it. First we had to add this rule to our inbound port rules.

Then we added this rule to our outbound rules.

Then we did the disassociation.

Meraki has a fix for this. It's related to their Auto VPN tunnel connections. They change one of the two registration connections to the new systems first, let it run for a couple of days, then do the other one, and voilà! Call them up, say you have issues with the S2S VPN with constant packet drops, and request a review of the VPN registration. Once they changed ours, no more issues.

Side note: anyone using Cisco AnyConnect on Meraki who has the same issue, where it drops a few times after initially connecting, should ask support to review the VPN client MTUs. We had them adjust the MTUs for this, and there have been no further issues with users either.

I am *very* interested in this @CMTech1 - could you give me a ticket number I could reference?

Due to the confidential info related to our company, I really can't provide the ticket number. However, as I mentioned, call Meraki and tell them exactly what I stated. It took me calling and working with five different techs until one said, yeah, I know how to fix that, and thankfully did.

The tech was Patrick Baah, and below is an excerpt of the message from when he updated the second registration:

Hi xxxx,

I have updated the second registry. Good to see the improved state of the registry connection. I'm glad I could assist. If there are ever any questions or concerns that you have please do not hesitate to reach out to Meraki Support!

Best, Patrick Baah Cisco Meraki Technical Support

Can confirm that on a vMX in Azure, the WAN interface and AnyConnect have separate MTUs. With Meraki support making the changes, we walked the AnyConnect server MTU down from the default to 1335 to get a stable connection.

Cool! We've had solid connections on both IPsec VPNs and client VPN with Cisco AnyConnect (currently v5.0.0.3) since August 2022, when, as posted, they updated our MTUs, so glad you're good too! Cheers!

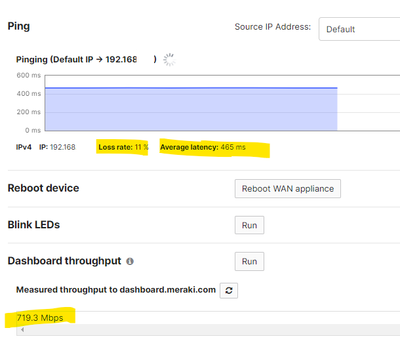

We are facing an issue where we see high latency from on-premises Hub-A to the vMX, while another hub, Hub-B (in the same region/country as Hub-A), has ideal latency. Intermittently, this behaviour flips from Hub-A to Hub-B.

The vMX is deployed in a separate subnet, and the routing table is properly attached to the hosts' subnet. Traffic and ping flow normally, but with high latency to one hub at a time. Something tells me hosts on the on-premises hub LAN are being routed via the second hub instead of directly from Hub-A to the vMX or from Hub-B to the vMX.

We have tried everything, including redeploying the vMX, and raised a case with Meraki support, but no luck!

Any help would be appreciated.

If one hub is working great and the other isn't - that suggests a connectivity problem with the physical hub having that issue.

What does the uplink monitoring look like when it is having an issue?

Thx for replying. Uplink utilization is not showing any odd behavior. Dashboard throughput seems normal.

Meraki support did change the MTUs for vMX as well as Hub-MX units but it didn't resolve the issue.

I understand cross-regional latency should be kept in mind but below latency is more than 3 times higher than that.

I have a very similar issue to the packet loss everyone is mentioning. The uplink data shows packet loss of up to 60% to 1.1.1.1 and 8.8.8.8, and 100% to an internal IP. Like someone mentioned above, when the loss increases, the AutoVPN drops, and we have other internal processes that are dropping or failing.

This is a snapshot of our loss.

Any thoughts? AutoVPN is using UDP 9357 & 9350.

Just wondering, have you tried using your ISP's DNS for the monitoring rather than 1.1.1.1 or 8.8.8.8, to see if you get the same results with packet drops? I've seen similar issues caused by high packet loss in the ISP network.

We've not used the ISP DNS for the vMX testing but we have used an IP that is internal and one in South Africa. No real change in the packet loss graph. While I'm typing this I did add a Lumen DNS IP and see the same loss.

I'd then call the ISP and explain the issue and request a review of the circuit. Normally for such issues they'll ask for a maintenance window to do a deep packet inspection.

This is an Azure deployed vMX, no real ISP to call. It's my HUB. We recently deployed a new one in a diff vnet and subnet, hoping this will clear everything up.

Got it. Either run a packet capture or call Meraki support to gather and review one. I did have one other weird issue with a vMX in Azure where it was dropping the IPsec tunnel constantly. I ended up pushing a redeploy/reapply within Azure (think vMotion/migrate, using the Azure tool under Help for the VM), and that fixed my issue. Maybe try that, in case it's a host cluster thing you have no control over. Just be mindful that the redeploy will reboot the VM.

Our cloud guys have deployed 3 vMX in 3 different subnets, we have seen the same problem in all three. We just built a new vNet and subnet and deployed a new vMX in it. We will be flipping all our sites to this new one tomorrow. Fingers crossed the issue will be cleared.

We finally have a solution. Final setup is as follows;

vMX in dedicated subnet off our hub VNet with no route table associated to the subnet and no NSG. This matches the latest Meraki install guide dated Jan-25.

This worked for us. Thanks for updating.
