Replacing Dell PowerConnect 6248 with MS250

Greetings,

I'm in the middle of migrating from a SonicWALL NSA E5500 + Dell PowerConnect 6248 core to an MX250 + MS250 core. My first attempt failed. Basically, I'm trying to replicate the current config, as it works and will allow for easier fallback if necessary (I already had to fall back once). The main concern from my first attempt was that the MS250 didn't communicate with my virtual environment. I'm using a 10 Gb fiber SFP+ transceiver (MA-SFP-10GB-SR) to communicate with the virtual environment. To make this work on the Dell, I have the following related entries:

vlan 10,20,30,59-60,100,128,202-204,252-253

vlan routing 252

interface vlan 252

name "Virtual Environment"

routing

ip address 10.88.121.18 255.255.255.248

bandwidth 10000

ip ospf area 0.0.0.0

ip ospf cost 10

ip mtu 1500

exit

interface ethernet 1/xg3

channel-group 2 mode auto

description "10Gb_Uplink"

exit

interface port-channel 2

description "Virtual IaaS Etherchannel"

switchport mode trunk

switchport trunk allowed vlan add 10,59-60,202-204,252

exit
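As a quick sanity check on the Dell entries above, the following minimal Python sketch expands the two VLAN lists and confirms that every VLAN allowed on port-channel 2 is also defined on the switch. The helper name `expand_vlans` is hypothetical and not part of any Dell or Meraki tool. If the MS250 port is meant to mirror the Dell trunk, it would presumably need to carry the same tagged VLANs rather than VLAN 252 alone, but that is an assumption about the intended design.

```python
def expand_vlans(spec):
    """Expand a VLAN list such as '10,59-60,252' into a set of VLAN IDs."""
    vlans = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            # A range like '59-60' is inclusive on both ends.
            low, high = part.split("-")
            vlans.update(range(int(low), int(high) + 1))
        else:
            vlans.add(int(part))
    return vlans


# VLANs defined on the Dell (from the 'vlan ...' line in the post).
defined = expand_vlans("10,20,30,59-60,100,128,202-204,252-253")

# VLANs allowed on port-channel 2 (from 'switchport trunk allowed vlan add ...').
trunk_allowed = expand_vlans("10,59-60,202-204,252")

# Any VLAN allowed on the trunk but never defined would indicate a config error.
missing = trunk_allowed - defined

print("Trunk VLANs to replicate:", sorted(trunk_allowed))
print("Allowed but undefined:", sorted(missing))
```

The sorted trunk list is the set of VLANs to compare against the allowed-VLAN setting of the corresponding MS250 port.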

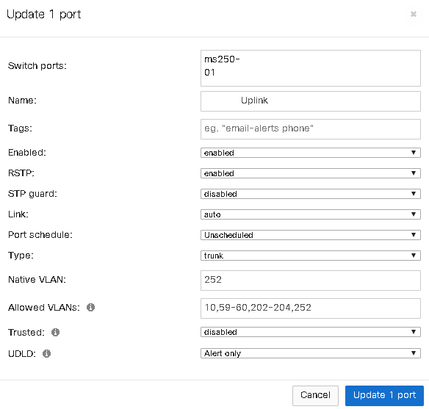

On the MS250, I have created the VLAN 252 and have assigned it to the SFP+ port with the 10G module in it. I then created the following:

Am I missing anything?

Also, I noticed that the port link speed can only be set to auto or 1Gb. How do I get it to be 10Gb?

Thanks for any assistance that you can provide.

Jeremy

Did the MS250 come online in the Meraki Dashboard? It needs to do that to get its config.

It sounds like you only had a single port in your port-channel in the VM environment. It should still work not having a port-channel configured on the Meraki side, but just something to consider.

I notice if I try to manually configure the port speed I only get 1Gb/s options as well.

Did the link light come on for the 10GbE SFP+? Is the server definitely using 10Gb-SR as opposed to LRM or something else?

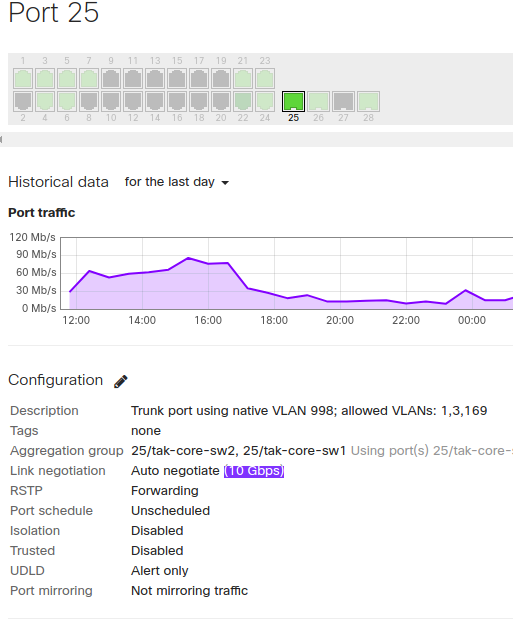

Below is an example of an MS250 that has a 10GbE SFP+ plugged into port 25 going to a VMware server environment. This is how it should look.

Yeah, everything is in the Dashboard. I've worked with a lot of MS120 units, but they are all at remote endpoints, so the demand is much less.

Currently, we have a single port (with another in the warm spare), but it is quite possible that we'll add another port.

Your screenshot helped a lot, as it does actually show me that my switch was seeing their switch at 10Gb.

Usage 66.3 MB (66.1 MB sent, 175.2 KB received)

Traffic -

CDP/LLDP

dungarvin-mendota-iaascore1 (Juniper Networks, Inc. ex4600-40f Ethernet Switch, kernel JUNOS 14.1X53-D45.3, Build date: 2017-07-28 01:31:44 UTC Copyright (c) 1996-2017 Juniper Networks, Inc.) raw

SFP module OEM - 10GBASE-SR: 10.3 Gbps (Part MASFP10GBSR)

So, it looks like I'll need to check with the IaaS vendor to see what they were seeing.

In theory, it looks like I have everything configured correctly for this port/interface. I'll see what the vendor says. Thanks for the feedback!

I just had a thought. Because you are plugging in a new "default gateway" (aka it is moving from the Dell to the Meraki) the MAC address of that gateway is changing.

Perhaps this might just require someone to clear the ARP table on the remote end.

Did you try pinging the individual hosts from the MS250 during the last cutover? I'd suggest running some pings now, before the next cutover, to verify that the devices actually respond to ping.
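To build the list of addresses worth pinging, the sketch below derives the subnet from the VLAN 252 interface address quoted in the original post (10.88.121.18 with mask 255.255.255.248) using Python's standard `ipaddress` module. Which of these addresses are actually assigned on the remote side is not stated in the thread, so treat the result as a list of candidates only.

```python
import ipaddress

# VLAN 252 interface address and mask, as configured on the Dell.
gateway = ipaddress.ip_interface("10.88.121.18/255.255.255.248")

# The /29 network that contains the gateway address.
subnet = gateway.network

# Every usable host address in the subnet except the gateway itself.
neighbors = [str(host) for host in subnet.hosts() if host != gateway.ip]

print("Subnet:", subnet)
print("Candidate addresses to ping:", neighbors)
```

A /29 leaves only six usable addresses, so at most five neighbors could hold a stale ARP entry for the gateway on this VLAN.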

I have received information from the vendor supporting the virtual environment. One of the primary issues appears to be that the Juniper was configured to expect an LACP bundle. As I was going with a single port initially, it failed. This raised a question on which I had made an assumption that appears to be wrong. If I want true redundancy, would I use a warm spare or a stack? Basically, if my primary has a critical failure, what is the best approach for a seamless recovery? It seems that I'd go with the warm spare, but I believe I need OSPF, which seems to suggest that I need a stack. But if I have a stack, how can I have seamless redundancy without IP conflicts?

I would think that this also impacts licensing, as I purchased only a single Enterprise subscription, per the sales vendor's recommendation.

I'd appreciate any clarity that can be offered.

Thanks,

Jeremy

I often struggle with this decision, and usually end up going for a stack.

When using a warm spare switch you cannot build an LACP channel across the two switches.

When using a stack you can build an LACP channel across the two switches.

When using a warm spare switch you can firmware upgrade one switch at a time, so at least one switch is always up.

When using a stack you can only upgrade the entire stack, and the entire stack will reboot - so a guaranteed outage.

Now that I have the primary MX250 and primary MS250 working correctly in my environment, I'll be moving forward with redundancy later in February. While I understand that you can't build an LACP channel across the two switches, I would be able to duplicate it with a couple more SFP+ modules. My concern with a stack is IP conflicts and possible looping. I've already had looping issues with my MS120 switches. I've been testing loop guard configs, but haven't implemented anything, as I didn't want to risk any loss of connectivity to my remote sites. That said, there have been a couple of firmware releases since then, too.

I must admit, the MS120s had a bad start with spanning tree issues. This was mostly back in the 9.x firmware days. The 10.x code is pretty solid.

I would run with a stack as your network core.

As @PhilipDAth mentioned, have you verified whether the fiber connection is single-mode or multi-mode and whether or not you've got link lights? -Edit- Just saw your response posted a couple of minutes before this one.

Our experience is that the Dell PC switches tend to have connectivity problems when the SFPs aren't identical on both ends of the link. I don't recall ever trying the Meraki SFPs in a Dell, but they may work just fine.

Something else to consider about the Dell PC62xx switches is that Trunk ports can't have a native VLAN, so any untagged traffic is dropped. From the looks of your setup, this shouldn't be a problem, but a quick packet capture would verify whether the traffic is being tagged correctly.

If you need to allow untagged traffic on the Dell, I believe you can change the port type to General and assign a PVID (same thing as a native VLAN on a Cisco Trunk port).

We've got a dormant PC6224F adjacent to an MS250, so could test the connectivity between them with our spare MA-SFP-10GB-SR's if that would be helpful.

Hope that helps 🙂