WAN Troubleshooting Ring Central Phone Provisioning (Long)

This is more of a general WAN and TLS troubleshooting question than a Meraki one, as I think we've mostly ruled out the Meraki MX67 as the cause. I haven't run into this kind of issue in some time, though, and perhaps an engineer here will have a thought on the best way to approach it.

The problem, first reported about a week ago, was that Polycom phones were failing to provision against RingCentral's provisioning server, pp.ringcentral.com (199.255.120.237). This was true for at least one branch office with a Meraki MX and possibly more. Also note that the MX could not ping the provisioning server, whereas most other sites could. All traffic is permitted outbound, and there was no content filtering or AMP blocking going on.

To make a long story short, TAC suspected that something in the path between WAN1 of the MX and the provisioning server was causing the issue. To test this, I upgraded the MX to v15.45, which gave the ability to select a network to source route via the tunnel to the data center/MX hub. Once I sent the provisioning traffic (TCP 443, TLS) that way, so that it egressed the firewall and was NATted to the data center IP block, the phone could register with no problem. And as the DC is close to the branch office, this should cause no voice quality issues.
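Since ping to the provisioning server fails while the phones actually need TCP 443 with TLS, it can help to test the two separately from a host behind the affected WAN link. The following is a minimal sketch, not a RingCentral or Meraki tool: `probe_tls` and `interpret` are hypothetical helper names, and the handshake uses the local system trust store, which may differ from what a Polycom phone trusts.

```python
import socket
import ssl


def probe_tls(host: str, port: int = 443, timeout: float = 5.0) -> dict:
    """Attempt a TCP connect, then a TLS handshake, and report how far we got."""
    result = {"tcp_ok": False, "tls_ok": False, "tls_version": None, "error": None}
    try:
        with socket.create_connection((host, port), timeout=timeout) as raw:
            result["tcp_ok"] = True
            # Assumption: verify against the system trust store; a phone may
            # carry its own CA bundle, so a certificate error here is not
            # proof that the phone would fail too.
            context = ssl.create_default_context()
            with context.wrap_socket(raw, server_hostname=host) as tls:
                result["tls_ok"] = True
                result["tls_version"] = tls.version()
    except OSError as exc:  # covers timeouts, DNS errors, and ssl.SSLError
        result["error"] = f"{type(exc).__name__}: {exc}"
    return result


def interpret(icmp_ok: bool, probe: dict) -> str:
    """Turn the ICMP result and the TLS probe into a rough first reading."""
    if probe["tls_ok"]:
        if icmp_ok:
            return "ICMP and TLS both work: the path looks healthy"
        return "TLS works although ICMP fails: the ping failure is likely a red herring"
    if probe["tcp_ok"]:
        return "TCP connects but TLS fails: suspect something interfering after the connect"
    return "TCP connect fails: suspect routing or filtering in the path"


if __name__ == "__main__":
    # pp.ringcentral.com is the provisioning host named in this thread.
    probe = probe_tls("pp.ringcentral.com")
    print(probe)
    # icmp_ok=False mirrors the failed pings reported from the Comcast link.
    print(interpret(False, probe))
```

Running it once from the branch and once from a site that provisions normally gives two results to compare, which is more useful to hand to an ISP or vendor than a failed ping alone.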

The ping and traceroute failure could be a red herring, or it could indicate an issue such as an asymmetric pathing problem to the provisioning server. I am dreading involving Comcast on this, as their folks give very basic support even on escalation. With RingCentral it is also difficult to get to an actual engineer who can look at the reverse path, look at the traffic, etc.

How would some of the engineers approach this issue? I took a look at RIPEstat https://stat.ripe.net/widget/bgplay#w.resource=26447 in hopes of seeing some BGP path changes that could be relevant, but it seems to look back only two days. Are there any other tools I could use to troubleshoot this myself without involving Comcast or RC? Or if I do reach out to one of them, any tips for getting to someone who could really dig into the issue? Sending all the VoIP traffic over the tunnel isn't ideal.

An MTR traceroute from the Comcast Business-connected MX67 is below. The last responding hop in the MTR is Comcast: https://mxtoolbox.com/SuperTool.aspx?action=arin%3a66.208.228.242&run=toolpage

| Host | Loss% | Snt | Last (ms) | Avg (ms) | Best (ms) | Wrst (ms) | StDev (ms) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1. x.y.68.137 | 0 | 10 | 0.8 | 0.8 | 0.6 | 1.1 | 0.2 |
| 2. 68.87.200.49 | 0 | 10 | 0.7 | 0.7 | 0.7 | 0.9 | 0.1 |
| 3. 68.87.200.53 | 0 | 10 | 0.9 | 0.9 | 0.7 | 5.0 | 1.7 |
| 4. 162.151.18.133 | 0 | 10 | 0.9 | 0.9 | 0.8 | 11.7 | 3.6 |
| 5. 96.110.41.109 | 0 | 10 | 4.5 | 4.5 | 4.3 | 4.9 | 0.2 |
| 6. 96.110.46.42 | 0 | 10 | 4.3 | 4.3 | 4.0 | 4.7 | 0.2 |
| 7. 96.110.37.178 | 0 | 10 | 5.0 | 5.0 | 4.8 | 6.4 | 0.8 |
| 8. 68.86.88.18 | 0 | 10 | 4.5 | 4.4 | 4.2 | 4.5 | 0.2 |
| 9. 66.208.228.242 | 0 | 10 | 4.0 | 4.0 | 3.9 | 4.1 | 0.1 |
| 10. ??? | 100 | 10 | 0 | 0 | 0 | 0 | 0 |
| 11. ??? | 100 | 10 | 0 | 0 | 0 | 0 | 0 |
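When comparing several of these reports (for example from the branch and from a working site), it can be handy to pull out the last hop that still answers. Below is a small sketch that parses rows in the pipe-separated layout shown above; `Hop`, `parse_mtr_rows`, and `last_responding_hop` are hypothetical names, and the column order is assumed to match this post. Keep in mind that hops showing 100% loss at the tail only prove that those routers do not return ICMP replies, not that forwarding stops there.

```python
from typing import NamedTuple, Optional


class Hop(NamedTuple):
    index: int
    host: str
    loss_pct: float
    avg_ms: float


def parse_mtr_rows(text: str) -> list[Hop]:
    """Parse 'N. host | Loss% | Snt | Last | Avg | Best | Wrst | StDev |' rows."""
    hops = []
    for line in text.splitlines():
        cells = [c.strip() for c in line.strip().strip("|").split("|")]
        if len(cells) < 8:
            continue  # blank line or group header
        index_str, dot, host = cells[0].partition(".")
        if not dot or not index_str.strip().isdigit():
            continue  # column header or separator row
        hops.append(Hop(int(index_str), host.strip(), float(cells[1]), float(cells[4])))
    return hops


def last_responding_hop(hops: list[Hop]) -> Optional[Hop]:
    """Return the furthest hop that answered at least one probe."""
    responding = [h for h in hops if h.loss_pct < 100.0]
    return responding[-1] if responding else None


# The last four rows of the report in this post.
SAMPLE = """
8. 68.86.88.18 | 0 | 10 | 4.5 | 4.4 | 4.2 | 4.5 | 0.2 |
9. 66.208.228.242 | 0 | 10 | 4.0 | 4.0 | 3.9 | 4.1 | 0.1 |
10. ??? | 100 | 10 | 0 | 0 | 0 | 0 | 0 |
11. ??? | 100 | 10 | 0 | 0 | 0 | 0 | 0 |
"""

if __name__ == "__main__":
    hop = last_responding_hop(parse_mtr_rows(SAMPLE))
    print(hop)
```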

What does your public IP look like on ping.pe? I recently had a Comcast issue that was new to me. It may not be related to yours at all, but I had a customer with intermittent connectivity: things would work perfectly and network tests would look great, and then suddenly there was packet loss out of nowhere. A few minutes later it was fine again.

A long, painful story made short: I discovered that the people who manage the old SonicWall, and who moved it from one city to another, had left the Comcast static IP info from the old location in place. It still sort of worked in the new city, even though that service was provisioned for DHCP with no static IP assigned. They kept telling the customer the firewall was old and couldn't handle the bandwidth (a 3-person law office with 150/25 Mbps broadband). Of course, when I swapped in a Meraki everything looked fine, because I left DHCP on the WAN port. This seemed to prove they were right, but it actually did not.

ping.pe helped me realize what was going on.

Thanks for the tip on ping.pe. That tool is awesome: it showed right away that a third or so of the ISPs it tests from can't ping RingCentral's provisioning server, while all of them can reach www.ringcentral.com.

Good find. I hadn't thought about putting their server there. If you open a case with RingCentral on this let me know the case number and I will piggyback on it to maybe help get some visibility. I am a RingCentral reseller too.

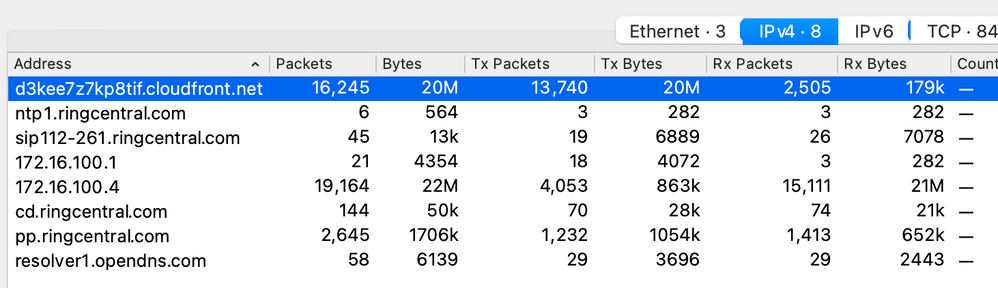

I happened to be provisioning a phone to RingCentral today and decided to run a packet capture. I would have expected the phone to communicate only with pp.ringcentral.com, but apparently it communicates with cloudfront.net as well. Based on the size (~20 MB), it seems that RingCentral uses CloudFront as a CDN to deliver firmware.

I wonder if this explains the trouble you saw? I could imagine the CDN being blocked by content filtering, and I could also imagine this happening only to a phone whose firmware did not match what RC wanted to see. Once a phone had been updated, say by taking it home to another network, it would register fine back at the Meraki site because it only needed to register and not download the current firmware package.
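One way to repeat that observation on another capture is to total the bytes per destination domain and see which endpoint carries the bulk of the transfer. The sketch below assumes the flows have already been exported from the capture tool as (server name, byte count) pairs; the function name `bytes_by_suffix` and the sample hostnames and numbers are purely illustrative, not values taken from the capture in this thread.

```python
from collections import defaultdict


def bytes_by_suffix(flows, suffixes):
    """Sum byte counts per domain suffix; unmatched names go under 'other'."""
    totals = defaultdict(int)
    for server_name, byte_count in flows:
        name = server_name.lower().rstrip(".")
        label = next(
            (s for s in suffixes if name == s or name.endswith("." + s)),
            "other",
        )
        totals[label] += byte_count
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical flow records, for illustration only.
    flows = [
        ("pp.ringcentral.com", 40_000),
        ("example-distribution.cloudfront.net", 20_000_000),
        ("time.example.net", 500),
    ]
    for label, total in bytes_by_suffix(flows, ("ringcentral.com", "cloudfront.net")).items():
        print(f"{label}: {total} bytes")
```

A large total under a CDN suffix alongside a small one for the provisioning host would be consistent with the firmware-download theory, though it does not by itself show what the payload is.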

Brilliant. I'll have a look.

url https://media.p-n.io/..., server 99.84.203.69:443, category Questionable

I do see where CloudFront was getting blocked, but this was from a PC and not a phone.

I had been hopeful that traffic to Google DNS was the culprit, but alas I'm not seeing it. Thank you for the idea, though!

| Content filtering blocked URL | url https://dns.google/..., server 8.8.8.8:443, category Proxy Avoidance and Anonymizers |
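To sift a longer list of these entries, for instance to keep only the ones touching a given server or category, the lines can be parsed into fields. This is a sketch that assumes the `url ..., server IP:port, category ...` layout of the two entries quoted in this post; an actual event log export may be formatted differently, and `parse_block_event` is a hypothetical helper name.

```python
import re
from typing import Optional

# Layout assumed from the two log lines quoted in this thread.
EVENT_RE = re.compile(
    r"url\s+(?P<url>\S+),\s*"
    r"server\s+(?P<ip>[\d.]+):(?P<port>\d+),\s*"
    r"category\s+(?P<category>.+)"
)


def parse_block_event(line: str) -> Optional[dict]:
    """Extract url, server ip, port, and category from one blocked-URL line."""
    match = EVENT_RE.search(line)
    if match is None:
        return None
    event = match.groupdict()
    event["port"] = int(event["port"])
    # Drop a trailing table pipe if the line was copied from a table row.
    event["category"] = event["category"].strip().rstrip("|").strip()
    return event


if __name__ == "__main__":
    lines = [
        "url https://media.p-n.io/..., server 99.84.203.69:443, category Questionable",
        "| Content filtering blocked URL | url https://dns.google/..., "
        "server 8.8.8.8:443, category Proxy Avoidance and Anonymizers |",
    ]
    for line in lines:
        print(parse_block_event(line))
```

Filtering the parsed events by client (if the export includes it) would help confirm whether any block came from a phone rather than a PC.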

The rest of the story: phones began provisioning from all sites on their own, with no change on our LAN or firewalls. It is unclear whether there was a change in peering between Comcast and its next hop or a change made by RingCentral. Pings and traceroutes from Comcast to pp.ringcentral.com continued to fail, so that aspect appears to have been a red herring, unrelated to the solution.

Good, but still a mystery I guess. I hate not knowing why, but sometimes that is all we get.

Thanks a million for helping me think it through. Even when the solution ends up totally out of your control, you still have to prove it's not your stuff. 🙂 I thought it wasn't ours from the start, as the Meraki MX had been in place for many months without any issue with RC Polycom provisioning. But still, the natives were getting restless.
