Azure: Using a Load Balancer to achieve high-availability and load-balancing

Hi,

I deployed 2 Meraki vMX in Azure following the official guide.

vMX Setup Guide for Microsoft Azure - Cisco Meraki

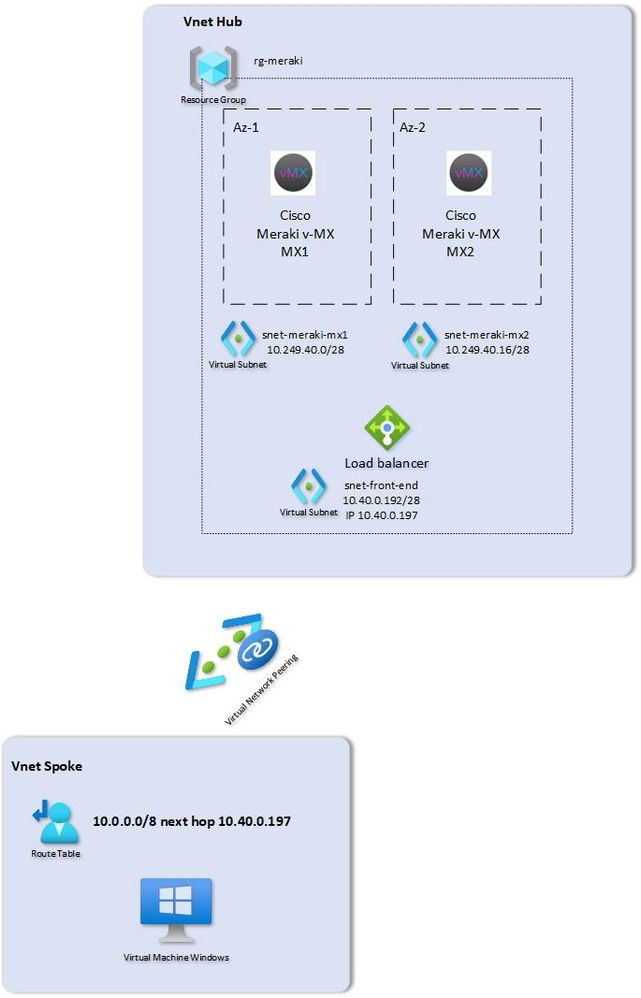

Each vMX is deployed in a different Azure availability zone. I use a hub-and-spoke model where the Merakis are deployed in the hub and the workloads in the spoke. There is a VNet peering between the hub and the spoke. A route table deployed in the spokes routes traffic to the load balancer, which sits in front of the Merakis. Both Merakis advertise the same Azure subnet ranges into AutoVPN.

To achieve high availability, there are two options I know of:

1) Implement an Azure function that tracks the health of the VMs and changes the routing table accordingly

Deploying Highly Available vMX in Azure - Cisco Meraki

2) Implement E-BGP

GitHub - MitchellGulledge/Azure_Route_Server_Meraki_vMX
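For readers unfamiliar with option 1, the decision logic behind a health-tracking function can be sketched roughly as below. This is only an illustration of the idea, not the code from the Meraki guide: the function names, the IP addresses, and the "fail back to the preferred vMX" behaviour are all assumptions, and the actual health check and route table update calls are left out.

```python
from typing import Dict, List, Optional


def pick_next_hop(health: Dict[str, bool], preferred_order: List[str]) -> Optional[str]:
    """Return the first healthy vMX IP in preference order, or None if all are down."""
    for ip in preferred_order:
        if health.get(ip, False):
            return ip
    return None


def plan_route_update(
    current_next_hop: str,
    health: Dict[str, bool],
    preferred_order: List[str],
) -> Optional[str]:
    """Return the new next hop if the route table should change, else None.

    Assumption: when every vMX is down, the route is left untouched.
    """
    target = pick_next_hop(health, preferred_order)
    if target is None or target == current_next_hop:
        return None
    return target


# Hypothetical private IPs of the two vMX instances in the hub.
vmx_order = ["10.0.0.4", "10.0.0.5"]
new_hop = plan_route_update("10.0.0.4", {"10.0.0.4": False, "10.0.0.5": True}, vmx_order)
print(new_hop)
```

In a real deployment the returned value would be written into the spoke route table, and how quickly that converges depends on how often the function runs.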

However, I tested another option that seems to work. I used an approach that is very common with third-party network virtual appliances in Azure: a load balancer.

I deployed an Azure load balancer in "NVA" mode. Basically, it load-balances all traffic it receives on its front-end IP to the backend VMs (the 2 Merakis). To check whether the Merakis are up and running, I configured a probe on the Azure load balancer that does an HTTP GET on TCP port 80 of the Meraki (the local status page). If it responds with a 200 OK, the Meraki is considered up and running. I tested this, and the local status page is only reachable on the private IP, not on the public IP.

Using traceroute on different VMs in the spokes, I can see that the traffic is load-balanced across the 2 Azure Merakis: one VM is routed through Meraki 1 while the other is routed through Meraki 2. I use source IP / port as the load-balancing scheme on the Azure load balancer.

When I shut down one of the Merakis, the HTTP probe fails and the load balancer stops forwarding traffic to the failed Meraki. Failover takes about 15 seconds. As soon as I start the Meraki again, the probe starts succeeding and the load balancer starts using it again.
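To make the behaviour above concrete, here is a small Python sketch of the idea: backends that do not answer the probe with 200 are taken out of the pool, and each flow is pinned to one of the remaining backends by hashing its source IP and port. It does not reproduce Azure's actual hashing algorithm; SHA-256 is just a stand-in, the probe is a plain callable rather than a real HTTP request, and all names and IP addresses are made up for illustration.

```python
import hashlib
from typing import Callable, List, Optional


def healthy_backends(backends: List[str], probe: Callable[[str], int]) -> List[str]:
    """Keep only the backends whose probe returns HTTP status 200."""
    return [b for b in backends if probe(b) == 200]


def choose_backend(src_ip: str, src_port: int, backends: List[str]) -> Optional[str]:
    """Pick a backend deterministically from the source IP and port.

    Stand-in hash: the same (src_ip, src_port) always maps to the same
    backend as long as the pool does not change.
    """
    if not backends:
        return None
    digest = hashlib.sha256(f"{src_ip}:{src_port}".encode()).digest()
    index = int.from_bytes(digest[:4], "big") % len(backends)
    return backends[index]


# Hypothetical private IPs of the two vMX instances.
vmx_pool = ["10.0.0.4", "10.0.0.5"]
probe_status = {"10.0.0.4": 200, "10.0.0.5": 200}

pool = healthy_backends(vmx_pool, lambda ip: probe_status[ip])
print(choose_backend("10.1.0.10", 50000, pool))

# Simulate shutting down the first vMX: its probe no longer returns 200.
probe_status["10.0.0.4"] = 0
pool = healthy_backends(vmx_pool, lambda ip: probe_status[ip])
print(choose_backend("10.1.0.10", 50000, pool))
```

After the simulated shutdown only the second vMX is left in the pool, so every flow lands on it, which matches what I saw during the failover test.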

Another advantage is that throughput can scale horizontally (by adding additional vMXs), at least outbound from Azure (Azure -> on-prem). Return traffic (on-prem -> Azure) will always use the Azure vMX that is configured as the preferred one (concentrator priority). As you may know, only the medium Azure vMX is supported for now.

Does anyone have experience with this setup? I would also like to get feedback from Meraki on whether this is a supported setup. It looks to be working fine, and I find it a better solution than the Azure Function solution. I think the E-BGP setup is still the best solution (I have not tested that one yet).

Regards,

Kurt

I like your thinking!

There is only one case I can think of where it won't work - and that is if AutoVPN firewall rules are defined. The firewall rules are stateful.

If a connection is initiated for a resource via the first vMX, the reply traffic has to come back via the same vMX. If the reply traffic comes back via the second vMX, it won't have an entry in its stateful firewall table to allow that traffic, and it will get blocked.

You may not be able to horizontally scale either. If you use this approach, you would have to commit to never being able to add an AutoVPN firewall rule to control traffic in and out of Azure.
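The asymmetric-flow problem can be shown with a toy model. This is a simplified sketch, not how the MX firewall is actually implemented: it assumes each firewall only allows replies to sessions it has seen itself, and the class name and addresses are invented for the example.

```python
from typing import Set, Tuple

# (src_ip, src_port, dst_ip, dst_port)
Flow = Tuple[str, int, str, int]


class StatefulFirewall:
    """Toy stateful firewall that only tracks sessions it saw being opened."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.sessions: Set[Flow] = set()

    def outbound(self, flow: Flow) -> None:
        """Record a connection initiated through this firewall."""
        self.sessions.add(flow)

    def allows_reply(self, reply: Flow) -> bool:
        """Allow a reply only if it mirrors a session recorded here."""
        src_ip, src_port, dst_ip, dst_port = reply
        return (dst_ip, dst_port, src_ip, src_port) in self.sessions


vmx1 = StatefulFirewall("vmx1")
vmx2 = StatefulFirewall("vmx2")

# A connection leaves through the first vMX (hypothetical addresses).
request: Flow = ("10.1.0.10", 50000, "192.168.1.20", 443)
vmx1.outbound(request)

# The reply has source and destination swapped.
reply: Flow = ("192.168.1.20", 443, "10.1.0.10", 50000)
print(vmx1.allows_reply(reply))  # same vMX: session is known
print(vmx2.allows_reply(reply))  # other vMX: no session entry
```

With a load balancer spreading outbound flows across both vMXs while return traffic always prefers one concentrator, some replies would hit the second case.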

I'm going to look into it further, especially the BGP approach. Using BGP, it should be possible to guarantee symmetric traffic flows (by always making one vMX the primary).

I also need to determine if the extra complexity added is worth it compared to the simplicity of a function.

But great thinking! Thanks for posting this.

Agreed. If you use firewall functionality on the Meraki (which is not the case for me), it will not work because of asymmetric flows. The BGP approach is the better solution then, as you can prefer one vMX over the other (AS prepending, ...). I have not tested the Azure Function yet, so I am not sure how fast it converges the routing. Unfortunately, the Azure load balancer does not support active/passive mode (yet). It will use all available hosts in the load-balancer pool.
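As a rough illustration of why AS prepending lets you prefer one vMX, here is a sketch that picks a route purely on AS-path length. Real BGP best-path selection has more steps before and after this one, and the ASN 65001 and the next-hop IPs are made-up example values.

```python
from typing import Dict, List


def best_path(advertisements: List[Dict]) -> str:
    """Return the next hop with the shortest AS path (first entry wins ties)."""
    return min(advertisements, key=lambda adv: len(adv["as_path"]))["next_hop"]


# Both vMXs advertise the same prefix; the second one prepends its AS twice.
routes = [
    {"next_hop": "10.0.0.4", "as_path": [65001]},
    {"next_hop": "10.0.0.5", "as_path": [65001, 65001, 65001]},
]
print(best_path(routes))

# If the first vMX withdraws its route, the prepended path is all that is left.
print(best_path(routes[1:]))
```

Because the same vMX wins in both directions for as long as it is up, the flows stay symmetric, and the second vMX only takes over when the first one disappears.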
