VPLS Speed Issue

Morning all. I'm hoping some folks have their ears on.

We were finally able to get our VPLS circuit working between two of our plants. It's been one hell of a learning curve for me, but last night I finally got everything working.

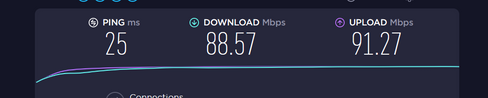

The only issue I am seeing is terrible speed over the circuit, but only in one direction: download. The circuit is 100 Mbps, but on iPerf, even with the window size increased enough to fill the pipe, we are only seeing speeds in the neighborhood of 4 or 5 Mbps. Speedtest.net shows the same, around 5 Mbps down and 85+ Mbps up.
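For anyone checking the iPerf window, the size needed to fill a link is roughly the bandwidth-delay product (link rate times round-trip time). Here is a rough Python sketch of that arithmetic. The 100 Mbps rate is from the post; the 10 ms round-trip time and the 64 KiB comparison window are assumed values for illustration, not measurements from this circuit.

```python
def bdp_bytes(link_mbps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the link."""
    bits_in_flight = link_mbps * 1_000_000 * (rtt_ms / 1000)
    return bits_in_flight / 8


def max_tcp_mbps(window_bytes: float, rtt_ms: float) -> float:
    """Upper bound on single-stream TCP throughput for a given window and RTT."""
    return window_bytes * 8 / (rtt_ms / 1000) / 1_000_000


LINK_MBPS = 100      # circuit speed from the post
RTT_MS = 10          # assumed round-trip time, not measured on this circuit
WINDOW = 64 * 1024   # assumed 64 KiB window, for comparison only

print(f"Window needed to fill the link: {bdp_bytes(LINK_MBPS, RTT_MS) / 1024:.0f} KiB")
print(f"Ceiling with a 64 KiB window:   {max_tcp_mbps(WINDOW, RTT_MS):.1f} Mbps")
```

If the window in use is already larger than the computed figure for the real round-trip time and throughput is still around 5 Mbps, the window is probably not the limiting factor.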

Traceroute shows traffic taking the correct path. Pings work perfectly. But the speed is terrible.

The connection path is as follows, from our south plant to our main plant (datacenter):

Meraki Switch-->Cisco 2901-->VPLS Circuit-->Cisco 2901-->Meraki Switch Stack-->MX100-->Internet

Routing is being handled via OSPF with Layer 3 Switching on the stack. I have default routes on the Cisco 2901s and the stack has a default as well. OSPF neighbor relationships are up and correct. The route tables are correct.

South Plant default route is 0.0.0.0 0.0.0.0 172.16.50.1 (IP of the 2901 VPLS interface at Main Plant)

Main Plant default route is 0.0.0.0 0.0.0.0 10.0.0.2 (Gateway/SVI interface of the 10.0.0.0/22 network)

Stack default route points to the MX IP of the Transit VLAN.

Main Plant 2901 has the following config:

Gig 0/0 172.16.50.1/24

Gig 0/1 10.0.0.3/22
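As a quick sanity check on the static routes, a small Python sketch can confirm whether a next hop sits on a subnet directly connected to the router. The interface addresses are the ones listed above; the `192.168.255.1` Transit VLAN address is purely hypothetical, since the post does not give that subnet. A next hop outside every connected subnet is not necessarily wrong, but it depends on a recursive lookup through some other route.

```python
import ipaddress
from typing import Dict, Optional


def next_hop_is_connected(next_hop: str, interfaces: Dict[str, str]) -> Optional[str]:
    """Return the name of the interface whose subnet contains next_hop, else None."""
    hop = ipaddress.ip_address(next_hop)
    for name, cidr in interfaces.items():
        if hop in ipaddress.ip_interface(cidr).network:
            return name
    return None


# Main Plant 2901 interfaces, as listed in the post
main_2901 = {"Gig 0/0": "172.16.50.1/24", "Gig 0/1": "10.0.0.3/22"}

# SVI currently used as the default route next hop
print(next_hop_is_connected("10.0.0.2", main_2901))       # Gig 0/1
# Hypothetical Transit VLAN address (the real subnet is not given in the post)
print(next_hop_is_connected("192.168.255.1", main_2901))  # None
```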

We have full connectivity over the VPLS between both plants (finally!), but the speed is bad. The 2901s have their VPLS interfaces set to 100/Full.

I know there are quirks with Meraki when it comes to reachability of SVI interfaces, especially in terms of ping, traceroute, etc., but they still work correctly.

What I am wondering is this: since the default route of the Main Plant 2901 points to the SVI of the 10.0.0.0/22 network, would this cause issues with speed since SVIs are notoriously unreachable? I tried pointing the default route to the IPs of the Transit VLAN instead, both on the stack and on the MX, but that brought the Internet connection for south plant down completely.

I'm guessing that since routing works fine despite the ping issue with the SVI, pointing a default route to the SVI should work fine also? The default route on the 2901 certainly does its job correctly. I just can't figure out why the speed is so bloody awful.

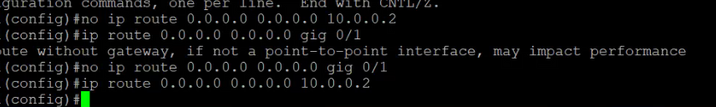

I attempted to point the Main Plant 2901 default route to the exit interface, but received the following message about the route not having a gateway, and this also took down the South Plant internet connection:

Has anyone else experienced this issue with circuit speed when a default route points to an SVI? Or a speed issue in general when connecting Meraki gear to traditional Cisco routers?

I have a ticket in with the circuit provider.

Thanks!!

Twitch

@Twitch we ran VPLS networks with 28xx, 29xx and 43xx IOS routers for years with no issues, we now have MXs and 3850s terminating the VPLS tails. At the sites with MXs (that used to have IOS routers) all devices have SVIs as their default gateways so I don't think that is the issue.

Any chance you can plug a PC in at either end and do a performance check? If that is good, then move to the LAN side of the 2901s, and so on.

@cmr - Good to know that your setup works and is similar to ours.

I can try your suggestion, but not until the day shift stops working later this afternoon.

A few weeks ago with nothing but the two 2901s connected to the VPLS and laptops connected to the internal LAN port (Gig 0/1), iPerf was running at ~95 Mbps consistently.

Thanks for the reply!

@Twitch so it looks like the circuit was at least initially proven to be working as hoped!

If that test works again, then I'd plug the south plant switch back in and test from a PC there.

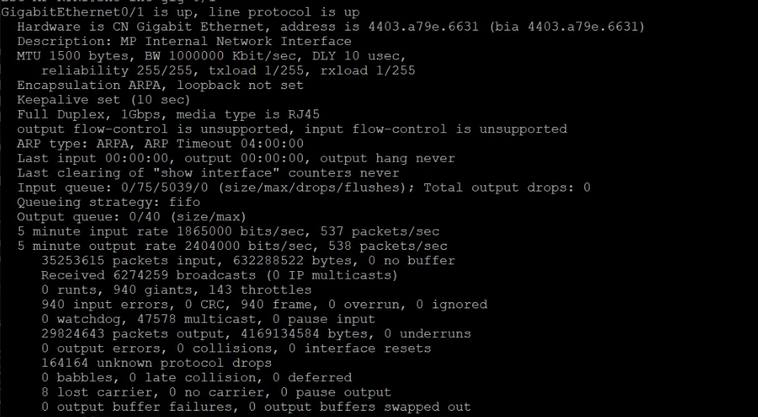

@cmr @KarstenI - This is interesting. Screenshot from the main plant 2901 interface Gig 0/1, which connects to the switch stack. Take note of the interface errors, particularly the Input Errors and the Unknown Protocol Drops. I wonder if something is not talking correctly between the stack and this interface?

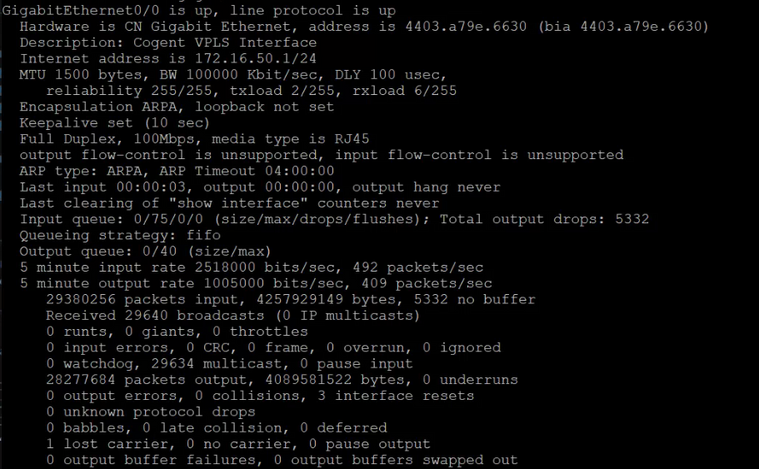

In contrast, the Gig 0/0 VPLS interface is looking good:

There is definitely a lot of congestion showing from the LAN side, but that might be due to spikes over what the WAN can deliver, though only some of those errors look related to that.

You could try clearing the counters if the router hasn't been rebooted recently, in case some are old. Also you *could* take the LAN down to 100/Full so the router isn't doing the buffering.

@cmr @KarstenI - Turns out that our last-mile circuit provider had their end of the circuit at the demarc configured wrong. As soon as they fixed it, speeds became normal.

So, at this point, our first VPLS connection is up and running successfully.

I'm going to go home and get some sleep now.

Cheers!

Twitch

Pointing a route to the interface is almost always a bad idea if the link is not point-to-point. The router needs to ARP for every address that needs to flow through the link. It's always better to point the route to the real next hop. If you lose your connection when you do that, something else must be going wrong. I would typically run a routing protocol through the VPLS. That also makes it easier to deal with backup links if you have them.
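To illustrate the ARP point, here is a toy Python model, not router code. It only counts which addresses the router would have to resolve under each kind of route. The destination addresses are hypothetical, and `10.0.0.2` is the SVI next hop from the original post.

```python
from typing import Iterable, Optional, Set


def arp_lookups(destinations: Iterable[str], next_hop: Optional[str] = None) -> Set[str]:
    """Addresses the router has to resolve with ARP to forward the given traffic.

    With a next-hop route, only the next hop is resolved.
    With an interface-only route on a multi-access link, each destination
    is treated as directly attached and resolved on its own.
    """
    if next_hop is not None:
        return {next_hop}
    return set(destinations)


# Hypothetical destinations reached through the default route
dests = ["8.8.8.8", "1.1.1.1", "9.9.9.9"]

print(len(arp_lookups(dests, next_hop="10.0.0.2")))  # route via the real next hop: 1
print(len(arp_lookups(dests)))                       # route via the exit interface only: 3
```

With a next-hop route the ARP table stays at one entry no matter how many destinations are in use. With an interface-only route it grows with every new destination, and forwarding also depends on something on the segment answering those ARP requests.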

For the interface settings with 100/Full: Did the ISP tell you to do that? And what are the interface statistics?

Next question: What is the role of the 2901? Is there a reason that you don't connect the switch directly?

Thanks @KarstenI - we have OSPF running on the VPLS links. That is the reason for putting the 2901s at the edge of each remote site.

We tried plugging directly to the stack way back when the circuits were first put in. Our thought was to run VLAN tags over the VPLS between each site, but we have basic circuits that can't pass VLAN tags.

The 100/Full requirement was set by the circuit provider. If it is set to anything else, including autonegotiate, the circuit won't connect correctly.

What is your setup to have the circuit connected to the Meraki directly?