Site-to-site VPN links resetting/failing on MX85 hub.

We have just swapped out infrastructure, from a VMX100 in Azure to a VMX-M and from an MX100 in our HQ to an MX85 (both VPN hubs), and now we are seeing some rather strange issues. We have in-house software running ODBC connections to an Azure database, so it's very sensitive to connection changes, and this is where the problem was discovered. In the VPN "graph" everything looks just fine:

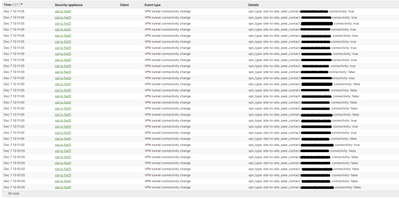

There are some outages related to an ISP issue and so on, but nothing too dramatic. However, the logs on the MX85s in this hub tell a different story:

This goes on and on, at random intervals, but always with 5 seconds of "reset time".

Firmware is up to date, and the ISP claims no errors are detected on the WAN side.

What device is connected to the MX85 WAN port? You appear to be having random interface flaps that only last a second or two from what I see.

Our ISP's CPE is connected there, and the link flaps correspond with what they are seeing on the LAN side of it. What is causing these link flaps is the mystery. When we fail over to our hot spare, which is connected to another port on the CPE, the flaps continue. I am going to try removing the hot spare and creating a test network on our backup ISP's CPE (same type of CPE) to at least identify whether this is a Meraki or an ISP/CPE issue.

If you don't already have a Support case open, please open one so engineering can look at this.

@Volcomer how many users and VPN tunnels are there? The recommended maximum for an MX85 is only 100 tunnels and 250 users, whereas I think the MX100 supported about double of each. Remember that if you have dual ISPs at a site, that counts as two tunnels.
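To make the counting rule concrete, here is a rough sketch of the arithmetic. The limits of 100 tunnels and 250 users are simply the figures quoted above, not values checked against a sizing guide, and the site names and function names are made up for illustration.

```python
def tunnel_count(uplinks_per_site):
    """Count hub tunnels, treating each spoke uplink as one tunnel.

    uplinks_per_site maps a (hypothetical) site name to its number of
    active WAN uplinks, so a dual-ISP site contributes two tunnels.
    """
    return sum(uplinks_per_site.values())


def within_recommendation(tunnels, users, max_tunnels=100, max_users=250):
    """Compare against the limits quoted in this thread (assumed, unverified)."""
    return tunnels <= max_tunnels and users <= max_users


# Illustrative inventory only: two single-ISP sites and one dual-ISP site.
sites = {"branch-a": 1, "branch-b": 1, "branch-c": 2}
total = tunnel_count(sites)
print(total, within_recommendation(total, users=100))
```

The point is only that the tunnel total is driven by uplinks rather than by sites, so a hub with many dual-ISP spokes reaches the limit sooner than the site count suggests.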

52 site-to-site tunnels and about 100 users, so well within the range.

I will know more tomorrow. I have support cases going with both Meraki and our primary ISP, as well as setting up a test network now which will go into effect tomorrow when I can physically change the uplinks.

If you leave a long-running ping to your cloud environment, and also to 8.8.8.8 what does it look like?
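If you want timestamps for each lost reply rather than just a summary, something like the following could be left running. It is only a sketch: it assumes a Linux host where the system `ping` accepts `-c` (count) and `-W` (timeout in seconds), and `10.0.0.4` is a placeholder for a VM in your cloud environment.

```python
import subprocess
import time
from datetime import datetime


def ping_once(host, timeout_s=1):
    """Send one echo request via the system ping (Linux flags assumed)."""
    result = subprocess.run(
        ["ping", "-c", "1", "-W", str(timeout_s), host],
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return result.returncode == 0


def loss_percent(results):
    """Percentage of failed probes in a list of True/False results."""
    if not results:
        return 0.0
    return 100.0 * results.count(False) / len(results)


def monitor(hosts, interval_s=1.0, count=3600, probe=ping_once):
    """Probe every host once per interval and return loss percent per host."""
    history = {host: [] for host in hosts}
    for _ in range(count):
        for host in hosts:
            ok = probe(host)
            history[host].append(ok)
            if not ok:
                # Timestamp each loss so it can be lined up with the MX event log.
                stamp = datetime.now().isoformat(timespec="seconds")
                print(f"{stamp} no reply from {host}")
        time.sleep(interval_s)
    return {host: loss_percent(r) for host, r in history.items()}


# Example call (roughly one hour at one probe per second):
# print(monitor(["8.8.8.8", "10.0.0.4"]))
```

Comparing the loss timestamps for the two targets shows whether drops toward the cloud environment coincide with drops toward the internet in general.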

Check the security event log. Make sure some security event is not being triggered.

In Azure, the VMX and your virtual servers need to be in different VNETS otherwise you often get low levels of intermittent packet loss (like 5%). Are they in different VNETS?

"In Azure, the VMX and your virtual servers need to be in different VNETS otherwise you often get low levels of intermittent packet loss (like 5%). Are they in different VNETS?"

Wait, what? Is this documented or a known issue? We just put up a new VMX in a different availability zone, so that one is on a different VNET, but I did not know that this needed to be the case for same-zone setups.

I don't have a long-running ping to a VM in Azure, but I just set one up, so we'll see. The long-running ping to 8.8.8.8 is just fine, with only low-level (< 0.6%) loss on rare spikes.

OK, so this doesn't seem to happen in my test network hub, so I need to change gears and look elsewhere for the source of the issues.

Do you have any updates? I am currently experiencing the same problem, between an MX250 and an MX84.

Ping between the two is around 120-150 ms.

If I ping 8.8.8.8:

From the MX250: 5-10 ms

From the MX84: 5-10 ms

This tells me it is not my ISP but something between the two MXs.

In my case this seems to have been caused by a bad batch of network cables(!). When swapping gear I also bought new cables, and one of the bags seems to have contained some bad ones. First time for everything, I guess; it also teaches me a lesson about being cheap and buying "off brand" cables. When I removed the hot spare and set it up as a test network, I also changed cable color to help with cable management, which is why it worked. I swapped out the original cables and, for now at least, everything is quiet.
