MX Circuit Failover timing

Hey techies.

We're proposing an SD-WAN package for a potential customer, and their requirements doc includes a question I haven't come across before and can't find an answer to in the Meraki docs.

Device failover based on VRRP is three missed heartbeats... 3 seconds.

A circuit failing DNS/Ping tests is 300 seconds / 5min.

But if WAN1 physically goes down (i.e., the ISP CPE device dies, or I physically pull the cable), is failover to WAN2 instantaneous? I hope so. Is that documented anywhere?

Much appreciated,

Mike

Yes, it does fail over immediately, though I'm not seeing that in any docs.

Thank you for confirming.

It definitely does fail over instantly. If you use load balancing, it fails all new streams over pretty much instantly too. Even on HA failover there is little, if any, downtime.

Hi @Mike_Robo_SK, the earlier answers are correct: in your scenario, if WAN1/primary goes down, the failover is immediate and not based on VRRP heartbeats.

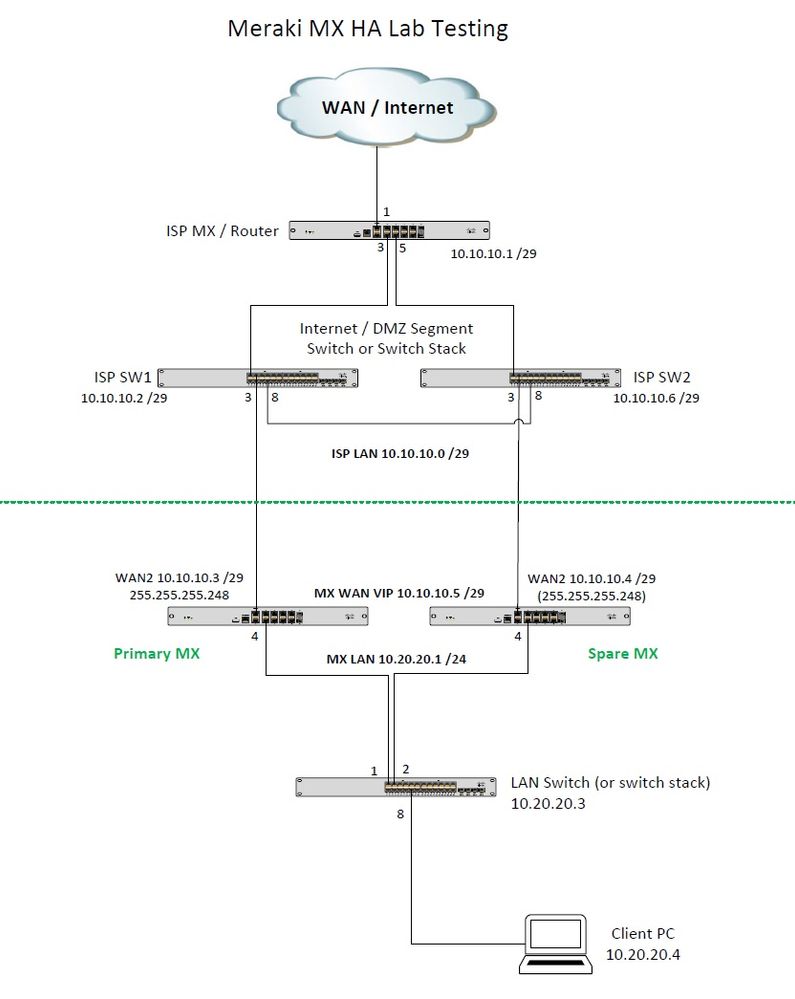

Don't consider this official Meraki documentation, but I have documented some of this for some of my own customers. Here's an image attached and the set of notes to go along with it. I used a separate set of switches and MX appliance to simulate the "ISP" above the green line, and the "customer" site below the green line. I will PM you the pcap file referenced in the notes.

The HA failover is based on 3 missed VRRP heartbeats (3 seconds), and when the primary MX is back to normal the spare (now the master) will relinquish its master role back to the primary.

The diagram shows what I had in my home lab with the equipment I had available, which very closely approximates the actual deployment and was sufficient for testing the different failure scenarios. The tests are listed below.

On the diagram, the top half above the green line simulates the ISP/Internet connectivity, whatever that might be. I used a Meraki MX connected to my home LAN behind my ISP cable modem, and created a /29 VLAN on the LAN side of the MX. The switches I'm using for ISP SW1 and SW2 are just MS120 switches, not physically stackable, so I have them connected port 8 to port 8, so just think of that as a switch stack or your Catalyst core chassis. Port 3 of each ISP switch connects down to WAN1 on the primary MX and spare MX. Then LAN port 3 of each MX connects down to a LAN switch with a client PC on it. I didn't have any extra switches on the LAN side, but that single physical LAN switch could be a switch stack as well and it would all work and behave the same.

Had the client PC running continuous pings to 8.8.8.8 while testing different failure scenarios as follows:

A: Power loss on spare MX: No impact, no effect.

B: Power loss on primary MX: Hard failure, HA failover time < 5 seconds (PC dropped 1 ping)

C: ISP SW1 power loss: Hard failure for primary MX (MX up & running but ISP down), HA failover time < 5 seconds (PC dropped 1 ping)

D: ISP SW1 to Primary MX cable cut: Same behavior as test C

E: Spare MX LAN to ISP SW2 cable cut: Dual master situation but with no impact, no effect.

F: Primary MX LAN to ISP SW1 cable cut: Dual master situation with HA failover time < 5 seconds (PC dropped 1 ping)

G: ISP SW1 primary link to ISP router cable cut: Soft failure north of primary MX, STP convergence Port 1 root to designated and Port 8 alternate to root, 10 second outage (PC dropped 2 pings)

H: Simulate soft failure where primary MX unable to get out. This was the tricky one. Configured rules on the ISP MX FW to purposely interrupt multiple Internet connection monitoring tests for the primary MX but not the spare. Blocked ports 53, 80, and 443 and all ICMP traffic from 10.10.10.3, the physical IP on WAN1 of the primary MX. There was a failover from primary to spare MX, and the spare MX became the master within about 2 minutes, but there was no 5 minute outage, only 5 seconds during the HA failover; the PC dropped a single ping. Sure enough, the connectivity status for the primary shows a period of time when it was alerting with "bad connectivity". Just blocking DNS wasn't enough to force this; all connection monitor tests had to fail, including pings and HTTP GETs, not just DNS alone. I repeated this test a few times to be sure.
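The ping-based outage figures in the tests above can be reproduced with a small helper. This is a minimal sketch, not the tooling used in the lab: `find_outages` is a hypothetical function, and the default of 5 seconds per lost ping is an assumption inferred from the numbers in this thread (1 dropped ping reported as under 5 seconds, 2 dropped pings as a 10 second outage). The result is an upper bound, since the real outage can be shorter than the ping timeout.

```python
from typing import List, Tuple


def find_outages(results: List[bool],
                 seconds_per_lost_ping: float = 5.0) -> List[Tuple[int, float]]:
    """Return (start_index, approx_max_duration_s) for each run of lost pings.

    results: one entry per ping, True if a reply was received.
    seconds_per_lost_ping: assumed time budget consumed by one lost ping
    (timeout plus interval); 5.0 is an assumption, not a Meraki value.
    """
    outages = []
    run_start = None
    for i, ok in enumerate(results):
        if not ok and run_start is None:
            run_start = i  # first lost ping of a new outage
        elif ok and run_start is not None:
            outages.append((run_start, (i - run_start) * seconds_per_lost_ping))
            run_start = None
    if run_start is not None:
        # Capture ended while pings were still failing
        outages.append((run_start, (len(results) - run_start) * seconds_per_lost_ping))
    return outages


if __name__ == "__main__":
    # One lost ping, then later two consecutive lost pings
    sample = [True, False, True, True, False, False, True]
    for start, duration in find_outages(sample):
        print(f"outage starting at ping #{start}: up to ~{duration:.0f} s")
```

Feeding it the reply/timeout sequence from a continuous ping log gives one entry per failure event, which makes it easier to compare scenarios such as tests B and G side by side.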

I had one of my customers on a Webex call and reproduced these tests while screen sharing so they could see it happening in Dashboard in real time.

It's regular VRRPv3 on the LAN side of the MX, so the 2 MX appliances in the HA pair will share a single Virtual IP address and Virtual MAC address and those are the ARP entries that the downstream devices will see. So whichever MX is the active primary, the VIP and VMAC will float to that physical MX and send 3 sets of gratuitous ARPs one second apart which will get interspersed with the VRRP advertisements (also 1 second apart) prompting the HA failover to spare (or failback to primary).
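The "3 missed heartbeats, about 3 seconds" figure lines up with the standard VRRPv3 timers. The sketch below computes the Master_Down_Interval as defined in RFC 5798; it assumes the MX follows the RFC timers exactly, which this thread does not confirm, and the function names are my own. The priority of 235 is the backup priority seen in the capture discussed further down in this post.

```python
def skew_time(priority: int, adver_interval_s: float = 1.0) -> float:
    """RFC 5798 Skew_Time: ((256 - priority) * Master_Adver_Interval) / 256."""
    if not 1 <= priority <= 255:
        raise ValueError("priority must be in 1..255")
    return ((256 - priority) * adver_interval_s) / 256


def master_down_interval(priority: int, adver_interval_s: float = 1.0) -> float:
    """RFC 5798 Master_Down_Interval: 3 * Master_Adver_Interval + Skew_Time.

    This is how long a backup waits without hearing an advertisement
    before it takes over as master.
    """
    return 3 * adver_interval_s + skew_time(priority, adver_interval_s)


if __name__ == "__main__":
    # Backup priority 235 with 1-second advertisements (values from this thread)
    print(f"{master_down_interval(235):.3f} s")
```

With a high backup priority the skew is small, so the takeover delay stays very close to three advertisement intervals.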

See the .pcap: I pulled the power on my primary MX and then ran this capture while it was rebooting. It came back online around the 50-second mark of the capture.

Look at frame 335, which is the spare MX sending a VRRP advertisement. It's from the VIP 10.20.20.1 and VMAC cc:03:d9:af:bc:e1 going to multicast address 224.0.0.18 (01:00:5e:00:00:12), and the key thing is to look in the VRRP header and notice the priority field is only 235, indicating it's a non-default backup priority.
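For anyone wondering where the destination MAC comes from: an IPv4 multicast address maps to an Ethernet MAC by placing its low 23 bits under the fixed prefix 01:00:5e. A minimal sketch of that mapping follows; `multicast_mac` is a name I made up for illustration.

```python
import ipaddress


def multicast_mac(ip: str) -> str:
    """Map an IPv4 multicast address to its Ethernet multicast MAC."""
    addr = ipaddress.IPv4Address(ip)
    if not addr.is_multicast:
        raise ValueError(f"{ip} is not an IPv4 multicast address")
    low23 = int(addr) & 0x7FFFFF          # keep the low 23 bits of the IP
    mac = (0x01005E << 24) | low23        # prepend the 01:00:5e prefix
    # Emit the 48-bit value as six colon-separated bytes, most significant first
    return ":".join(f"{(mac >> shift) & 0xFF:02x}" for shift in range(40, -8, -8))


if __name__ == "__main__":
    print(multicast_mac("224.0.0.18"))  # the VRRP group address
```

Running it on 224.0.0.18 yields the same 01:00:5e:00:00:12 destination seen in the capture.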

It was about 500 milliseconds later when the primary MX came back online, and immediately sent a gratuitous ARP for the VIP (frames 338 and 339). The very next VRRP advertisement (frame 340) shows a Priority field of 255 (primary MX now owns the VIP).
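If you want to pull the priority field out of a capture programmatically rather than eyeballing it in Wireshark, the fixed part of a VRRPv3 advertisement is only 8 bytes. The sketch below decodes it per the RFC 5798 header layout. The sample bytes are hand-built for illustration, not taken from the pcap, and the VRID of 1 and zero checksum in them are placeholders.

```python
import struct
from dataclasses import dataclass


@dataclass
class VrrpHeader:
    version: int
    type: int
    vrid: int
    priority: int
    addr_count: int
    max_adver_int_cs: int  # advertisement interval in centiseconds
    checksum: int


def parse_vrrp_v3(payload: bytes) -> VrrpHeader:
    """Decode the 8-byte fixed VRRPv3 header (RFC 5798) from an IP payload."""
    if len(payload) < 8:
        raise ValueError("VRRP payload too short")
    ver_type, vrid, priority, count, rsvd_int, checksum = struct.unpack(
        "!BBBBHH", payload[:8]
    )
    return VrrpHeader(
        version=ver_type >> 4,             # high nibble
        type=ver_type & 0x0F,              # low nibble, 1 = advertisement
        vrid=vrid,
        priority=priority,
        addr_count=count,
        max_adver_int_cs=rsvd_int & 0x0FFF,  # low 12 bits; top 4 are reserved
        checksum=checksum,
    )


if __name__ == "__main__":
    # Hypothetical advertisement: v3, type 1, VRID 1, priority 235,
    # one address (10.20.20.1), 100 cs interval, checksum left as zero.
    sample = bytes.fromhex("31 01 eb 01 00 64 00 00 0a 14 14 01")
    hdr = parse_vrrp_v3(sample)
    print(hdr.priority, hdr.max_adver_int_cs / 100, "s")
```

Comparing `priority` across consecutive advertisements is a quick way to spot the moment the primary reclaims the VIP, such as the jump from 235 to 255 described above.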

Additional gratuitous ARPs were sent (frames 343/344 and 346/347) and were likely redundant. The MX sends them 3 times, one second apart, so that they are purposely interleaved with at least two of the 1-second VRRP advertisements, allowing for at least one dropped or corrupted frame, just in case. You can also see the primary MX ARP for the laptop PC (10.20.20.4) in frame 341, because it had just rebooted and needed that entry.

Hope that helps!

I'll PM you the .pcap

Regards,

Dave

I suggest that @MerakiDave's post be copied directly over to documentation.meraki.com
