
Rate limit of 10 is not enough when using multiple integrations


I am starting to have customers using multiple Meraki integrations. Once they get to about three "full-time" integrations, it becomes hard to run operational scripts (scripts you only run from time to time, rather than 24x7), or integrations that collect data become unable to keep up with the data flow.

It is getting to the point where a customer might see an integration on sites like https://apps.meraki.io/ (especially because Meraki has been marketing them), and I have to tell them not to get it because the extra strain on the rate limit might break or harm integrations they already have.

@John_on_API , I know the limit was only "recently" increased, but we need a more fundamental change.


It should scale per network or something like that.

It doesn't make any sense that a large org like ours (2K+ networks) has the same rate limit as a small enterprise with 1-2 networks.


Scaling per licensed device might be safer. The business case is simple then. Internally they could say 1% of device licensing (a made-up number) is to cover the cost of API calls against that device. Then there is a direct revenue/cost business case. Hell - the API could even be run as a profit centre using this business model. Now there is a way to more directly fund API concurrent call capacity.

@John_on_API , can you remind me who the API product manager is please.

Otherwise, people like me would just create dummy networks until we got the rate limit we wanted. I have in the past split an organisation into multiple organisations to get a higher limit - but you can't do that very often.


Thanks @PhilipDAth for this feedback. I like what you've written about "more fundamental change" which we're excited to speak more about in the future, with a specific focus on enabling business outcomes and reducing the burden on the customer to manage API call budgets regardless of the apps they integrate. There are lots of options available to us, so stay tuned.

For the apps they're integrating, do you happen to know if they are leveraging ecosystem partners? We want customers to be successful with their integrations regardless of the number adopted, but are more equipped to support those from official ecosystem partners.

Re: the existing budget, which leads the industry by a fair margin (and keep me honest here), it's not usually obvious that increasing this number is the best area of investment. I'm happy to report that we see very few instances of customers consistently hitting their call budgets regardless of the number of integrations they deploy in a single organization, even with huge deployments. The data shows that the concerns about not realizing business outcomes are usually theoretical.

Whenever we hear "10 calls/second isn't enough" we want to make sure we understand the concern, and that usually means getting right down to specific scenarios where this pops up. We have worked closely with customers, partners and ecosystem candidates when the concern has been more acute, and we have observed that the current budgets are usually more than adequate to deliver the desired business outcomes. For example, at 10 calls/second an org has a budget of 864,000 API calls per day. Even if that org uses four apps, each app still gets 216K calls per day, and that is the worst case, where every app is trying to fully consume the budget all of the time or at the same time.
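The figures in that example follow directly from the per-second budget. A quick sketch of the arithmetic, assuming a steady 10 calls/second per organization and, as the worst case, four apps that all try to use the whole budget at the same time:

```python
CALLS_PER_SECOND = 10             # per-organization budget discussed in this thread
SECONDS_PER_DAY = 24 * 60 * 60    # 86,400

def daily_budget(calls_per_second: int = CALLS_PER_SECOND) -> int:
    """Total calls an organization can make in one day at a steady rate."""
    return calls_per_second * SECONDS_PER_DAY

def per_app_share(num_apps: int, calls_per_second: int = CALLS_PER_SECOND) -> int:
    """Daily calls per app if every app competes for the full budget at once."""
    return daily_budget(calls_per_second) // num_apps

print(daily_budget())     # 864000
print(per_app_share(4))   # 216000
```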

For what it's worth, sometimes the app simply isn't managing its budget effectively. This is a more common problem with apps from developers who are not in our ecosystem, but we've seen it even with other Cisco applications. It might not leverage the most efficient API calls for a given purpose (e.g. getDevice once per device rather than getOrganizationDevices once per org), or it might not cache any data locally, or it might call some operations far more often than necessary (e.g. running getOrganizationNetworks or getOrganizationPolicyObjects 10,000 times in a day for a single org). Some of these resources simply do not change that frequently even during active deployment operations. Or an application might not be leveraging the right API for the job (dashboard API is popular but we also have webhooks, scanning API, MQTT, etc.).
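One of the fixes mentioned here, caching resources that rarely change instead of re-requesting them, can be sketched as a small time-to-live cache. This is a minimal illustration, not part of any Meraki library: `TTLCache` and `fetch_networks` are hypothetical names, `fetch_networks` merely stands in for a real getOrganizationNetworks request, and the one-hour TTL is an assumed value.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple

class TTLCache:
    """Keep results of slow-changing API reads for `ttl_seconds` before re-fetching."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get(self, key: Hashable, fetch: Callable[[], Any]) -> Any:
        """Return the cached value for `key`; call `fetch()` only if missing or stale."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]
        value = fetch()
        self._store[key] = (now, value)
        return value

calls = [0]  # counts how many "real" requests were made

def fetch_networks() -> list:
    """Hypothetical stand-in for one getOrganizationNetworks request."""
    calls[0] += 1
    return [{"id": "N_1"}, {"id": "N_2"}]

cache = TTLCache(ttl_seconds=3600)  # assumed TTL: networks rarely change within an hour
for _ in range(10_000):
    cache.get(("networks", "org-123"), fetch_networks)
print(calls[0])  # 1
```

With a one-hour TTL, 10,000 lookups within the hour trigger a single real request instead of 10,000.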

It's also important for ecosystem partners to be transparent with their customers about their budget consumption and provide built-in controls for the apps to rate limit themselves. Some of our partners even expose to the customer a configurable rate limit for their application to ensure that it plays nicely with the shared budget. But again, we want to reduce the burden on the customer to even care about API call budgets, so stay tuned.
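A configurable self-imposed rate limit of the kind described here can be sketched with the generic cell rate algorithm (GCRA), which behaves like a token bucket but tracks a single "theoretical arrival time" instead of a token count. The class name and parameters are illustrative assumptions, not any partner's actual implementation; `rate` would be the customer-configured share of the organization budget (for example 3 of the 10 calls/second).

```python
import threading
import time
from typing import Callable

class RateLimiter:
    """Client-side limiter (GCRA): `rate` calls/second on average, bursts of up to `burst`."""

    def __init__(self, rate: float, burst: int = 1,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self._interval = 1.0 / rate        # seconds between calls at the steady rate
        self._burst = burst
        self._clock = clock
        self._sleep = sleep
        self._tat = clock()                # "theoretical arrival time" of the next call
        self._lock = threading.Lock()

    def acquire(self) -> None:
        """Block until the caller may make one more API call."""
        with self._lock:
            now = self._clock()
            tat = max(self._tat, now)
            # The call may proceed once we are within `burst` intervals of the TAT.
            allowed_at = tat - (self._burst - 1) * self._interval
            self._tat = tat + self._interval   # reserve this slot before sleeping
        delay = allowed_at - now
        if delay > 0:
            self._sleep(delay)

# Hypothetical example: this app limits itself to 3 of the org's 10 calls/second.
limiter = RateLimiter(rate=3, burst=3)
```

Each API call would be preceded by `limiter.acquire()`. Reserving the slot under the lock and sleeping outside it keeps concurrent threads from all waking at once and overshooting the configured rate.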


I just checked https://apps.meraki.io/. For the customer where I most recently ran into the issue, two of the apps are listed (so they must be from ecosystem partners) and one is not.

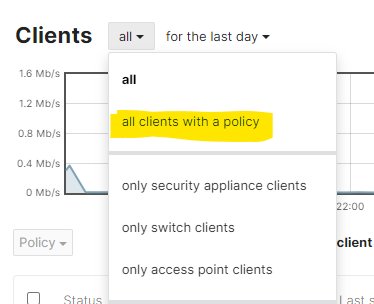

This customer has around 250k clients in their organisation. Most recently, they wanted a list of all clients with a particular group policy applied. That requires an API call for every one of those clients. I was using asyncio and parallelising as much as possible, but it took my script three days to run. Three days!
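Parallelising per-client lookups with asyncio usually means bounding how many requests are in flight. A minimal sketch using `asyncio.Semaphore`, where `lookup_policy` is a hypothetical coroutine standing in for one per-client group policy API call; the cap keeps a script from flooding the shared budget, but it cannot make 250k lookups cost fewer than 250k calls.

```python
import asyncio
from typing import Awaitable, Callable, Iterable, List

async def clients_with_policy(
    client_ids: Iterable[str],
    lookup_policy: Callable[[str], Awaitable[str]],
    policy_name: str,
    max_in_flight: int = 10,
) -> List[str]:
    """Return the IDs of clients whose group policy equals `policy_name`.

    `lookup_policy` is hypothetical: one API call per client. The semaphore caps
    how many of those calls are in flight at the same time.
    """
    sem = asyncio.Semaphore(max_in_flight)

    async def check(client_id: str) -> bool:
        async with sem:
            return await lookup_policy(client_id) == policy_name

    ids = list(client_ids)
    results = await asyncio.gather(*(check(c) for c in ids))  # preserves input order
    return [c for c, matched in zip(ids, results) if matched]

async def fake_lookup(client_id: str) -> str:
    """Made-up stand-in for the real per-client policy request."""
    await asyncio.sleep(0)  # pretend network I/O
    return "Guests" if client_id.endswith("7") else "Normal"

print(asyncio.run(clients_with_policy([f"k{i}" for i in range(20)], fake_lookup, "Guests")))
# ['k7', 'k17']
```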

The rest of the API capacity must have been used by the other integrations.
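A back-of-envelope check is consistent with this, assuming one call per client and the 10 calls/second organization budget: with the whole budget to itself, the script would need about 7 hours, while three days works out to under one call per second, roughly a tenth of the budget.

```python
CLIENTS = 250_000          # clients in the organisation, one policy lookup each
BUDGET_PER_SECOND = 10     # organization-wide call budget
SECONDS_PER_DAY = 86_400

# Best case: this script alone gets the whole budget.
best_case_hours = CLIENTS / BUDGET_PER_SECOND / 3600
# What three days of runtime implies the script actually averaged.
observed_rate = CLIENTS / (3 * SECONDS_PER_DAY)

print(f"best case: {best_case_hours:.1f} h")      # best case: 6.9 h
print(f"observed: {observed_rate:.2f} calls/s")   # observed: 0.96 calls/s
```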

The only other APIs I use are Amazon AWS and Azure AD, and I have never hit a rate limit with them.

My experience is narrow, but the Meraki API is the only one where I have to struggle to get an API call to execute.


Thanks for that detail, @PhilipDAth ! Does that actually require a single call per client?


That does, unfortunately. There are no APIs to say "give me everyone in the network/org with group policy x" (the Dashboard has this option to display only clients with a group policy). There is only an API to return the group polic(ies) attached to a client.

To match the Dashboard, an API to return every client that has any kind of group policy attached would be handy.

I'm starting to lean towards the view that we need a graph/query-based API. Then you don't have to imagine all the kinds of things users might want to do.


Yes, for "API v2" I agree moving to a query-based approach would be good.

Over time the v0/v1 approach results in a lot of endpoints, with overlap/inconsistency, and the need to sometimes use multiple endpoints to make what really could be a single query drives up the call rate.


If you only need the clients that have group policies applied, doesn't https://developer.cisco.com/meraki/api-v1/#!get-network-policies-by-client cover this?


I tried that API initially. I got back an error that the network was not a "Systems Manager" network.


Hm, that's definitely not intentional. Did you happen to report this to Meraki Support?

There's also a newer version of this endpoint in beta, with details in the Early Access community.

Either way, once working, this sounds like it would get the job done in a fraction of the API calls!


Negative. I just assumed the API was designed to only work with Systems Manager clients and the policies that can be applied to them.


I think the action batch feature in the Meraki API is a valuable option to consider when developing third-party integrations. It can be used to work around rate limits and improve overall efficiency. By grouping multiple write requests (creates, updates and deletes) into a single batch, developers can optimize their interactions with the Meraki API and reduce the chances of hitting rate limits.
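A minimal sketch of building action batch request bodies. It assumes the documented body shape (`confirmed`, `synchronous`, and a list of `actions`, each with `resource`, `operation` and `body`) and a limit of 100 actions per batch; both should be checked against the current API documentation. The device serial and port names are placeholders. Action batches carry write operations, so they help with bulk configuration changes rather than bulk reads such as the per-client policy lookups discussed earlier.

```python
from typing import Any, Dict, List

MAX_ACTIONS_PER_BATCH = 100  # assumed per-batch limit; verify in the current docs

def build_action_batches(actions: List[Dict[str, Any]],
                         max_actions: int = MAX_ACTIONS_PER_BATCH,
                         synchronous: bool = False) -> List[Dict[str, Any]]:
    """Split `actions` into action batch request bodies of at most `max_actions` each."""
    return [
        {"confirmed": True, "synchronous": synchronous,
         "actions": actions[i:i + max_actions]}
        for i in range(0, len(actions), max_actions)
    ]

# Hypothetical example: rename 250 switch ports on one (placeholder) device.
actions = [
    {"resource": f"/devices/Q2XX-XXXX-XXXX/switch/ports/{port}",
     "operation": "update",
     "body": {"name": f"port-{port}"}}
    for port in range(1, 251)
]
batches = build_action_batches(actions)
print([len(b["actions"]) for b in batches])  # [100, 100, 50]
```

Each of the three bodies would then be sent as one request to the organization's action batch endpoint, in place of 250 individual port updates.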

However, it would be beneficial if Meraki offered a dedicated solution to monitor API calls, including the ability to track the history of calls and errors. Such a monitoring feature would greatly assist developers in identifying and resolving errors during the development process. Having visibility into the API call history and error logs would enhance troubleshooting capabilities and contribute to smoother integration experiences.

By implementing comprehensive monitoring and error tracking, Meraki can empower operators to identify issues more efficiently, ultimately improving the integration process and facilitating faster resolution of any problems that may arise.


FYI, there's already an endpoint for tracking API usage; we use it for a few purposes.

https://developer.cisco.com/meraki/api-v1/#!get-organization-api-requests
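As an illustration of one such use, the records returned by that endpoint can be tallied to see which integration is being throttled. This sketch assumes each record carries `responseCode` and `userAgent` fields, which matches our reading of the endpoint's documented response but should be verified; the sample records are made up. Attributing calls this way works best when each integration sets a distinctive user agent.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Tuple

def summarize_requests(records: Iterable[Dict[str, Any]]) -> Tuple[Counter, Counter]:
    """Tally response codes, and count 429 (rate-limited) responses per user agent."""
    codes: Counter = Counter()
    throttled: Counter = Counter()
    for record in records:
        code = record.get("responseCode")
        codes[code] += 1
        if code == 429:
            throttled[record.get("userAgent", "unknown")] += 1
    return codes, throttled

# Made-up sample records; real ones would come from the API requests endpoint.
sample = [
    {"responseCode": 200, "userAgent": "AppA"},
    {"responseCode": 429, "userAgent": "AppA"},
    {"responseCode": 429, "userAgent": "AppB"},
    {"responseCode": 429, "userAgent": "AppA"},
]
codes, throttled = summarize_requests(sample)
print(dict(codes))               # {200: 1, 429: 3}
print(throttled.most_common(1))  # [('AppA', 2)]
```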


This approach has a limitation, though. If we are already exceeding the API rate limit, this call will fail as well, so we cannot monitor what is happening at that moment.


You don't use this call in real time. Called once a day, or once an hour, its impact on the call rate is negligible.

The correct way to make any API call is with a wait-and-retry mechanism for rate limiting. This is built into the Python library; just enable it, and it works very well. If you are coding the API calls directly, see the API documentation on how to correctly handle 429 responses.

Even if I issue a thousand API calls 'at once' using async, massively exceeding the rate limit, the 429 errors are transparently caught and the calls retried as necessary. As far as my Python script is concerned, all the calls succeed; it never got rate limited 😀
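For code that calls the API directly rather than through the Python library (whose `DashboardAPI` client has a `wait_on_rate_limit` option for this), a minimal retry loop can look like this. It assumes the 429 response's `Retry-After` header holds a number of seconds and falls back to exponential backoff with jitter when the header is absent; `send` is a hypothetical zero-argument function that performs one HTTP request and returns `(status, headers, body)`.

```python
import random
import time
from typing import Callable, Dict, Tuple

Response = Tuple[int, Dict[str, str], object]  # (status, headers, body)

def call_with_retry(send: Callable[[], Response],
                    max_retries: int = 5,
                    sleep: Callable[[float], None] = time.sleep) -> Response:
    """Call send(); on HTTP 429, wait as instructed (or back off) and try again."""
    for attempt in range(max_retries + 1):
        status, headers, body = send()
        if status != 429 or attempt == max_retries:
            return status, headers, body   # success, other error, or out of retries
        retry_after = headers.get("Retry-After")
        # Prefer the server's hint; otherwise exponential backoff with jitter.
        delay = float(retry_after) if retry_after else min(60.0, 2 ** attempt) + random.random()
        sleep(delay)
    raise AssertionError("unreachable")

# Demo with a fake transport that is throttled once, then succeeds.
_replies = iter([(429, {"Retry-After": "0.1"}, None), (200, {}, {"ok": True})])
print(call_with_retry(lambda: next(_replies)))  # (200, {}, {'ok': True})
```

Because `send` and `sleep` are passed in, the same loop can wrap `requests`, `httpx` or a test double.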

If you want real time details of API calls/responses, I would say the correct approach is to log them from the calling process.
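A minimal sketch of that client-side logging, using a decorator built from the standard library's `logging` and `functools` modules. `get_organization_networks` is a hypothetical stand-in; in practice the decorator (or an equivalent wrapper) would go around whatever function makes the HTTP request, so every call and any exception it raises end up in your own logs.

```python
import functools
import logging
import time
from typing import Any, Callable

log = logging.getLogger("api_calls")

def log_api_call(func: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator: log each call's name, arguments, duration and outcome."""
    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
        except Exception:
            # Logged at ERROR level with the traceback, then re-raised.
            log.exception("%s args=%r kwargs=%r failed after %.3fs",
                          func.__name__, args, kwargs, time.perf_counter() - start)
            raise
        log.info("%s args=%r kwargs=%r succeeded in %.3fs",
                 func.__name__, args, kwargs, time.perf_counter() - start)
        return result
    return wrapper

@log_api_call
def get_organization_networks(org_id: str) -> list:
    """Hypothetical stand-in for a real Dashboard API call."""
    return [{"id": "N_1"}]

if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    get_organization_networks("123456")
```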
