
vMX and Client VPN on Microsoft Azure


So I'm having some issues with enabling Client VPN on a vMX.

I have enabled Client VPN on the vMX, like I've done many times before, and double-checked the users and shared secret, but I just cannot get the Client VPN connected.

My vMX is deployed, online, and all green. However, I'm not able to ping the Public IP, but then again I'm not sure if I'm supposed to be able to ping a Public IP in Azure.

I'm testing from my own Mac, and it seems like it can't reach my vMX or the Public IP, which I find odd. I've tried from Home WiFi and Mobile Hotspot.

Log from my Mac:

standard 08:26:41.543802+0100 racoon plogsetfile: about to add racoon log file: /var/log/racoon.log

standard 08:26:41.548326+0100 racoon accepted connection on vpn control socket.

standard 08:26:41.548367+0100 racoon received bind command on vpn control socket.

standard 08:26:41.549439+0100 racoon New Phase 2

standard 08:26:41.549691+0100 racoon state changed to: IKEv1 quick I start

standard 08:26:41.551206+0100 racoon Connecting.

standard 08:26:41.551662+0100 racoon IPsec-SA request for 52.146.154.95 queued due to no Phase 1 found.

standard 08:26:41.551690+0100 racoon New Phase 1

standard 08:26:41.551717+0100 racoon state changed to: IKEv1 ident I start

standard 08:26:41.552022+0100 racoon initiate new phase 1 negotiation: 192.168.1.53[500]<=>52.146.154.95[500]

standard 08:26:41.552161+0100 racoon begin Identity Protection mode.

standard 08:26:41.552201+0100 racoon IPSec Phase 1 started (Initiated by me).

standard 08:26:41.554889+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000

standard 08:26:41.554918+0100 racoon state changed to: IKEv1 ident I msg1 sent

standard 08:26:41.554953+0100 racoon IKE Packet: transmit success. (Initiator, Main-Mode message 1).

standard 08:26:41.554996+0100 racoon >>>>> phase change status = Phase 1 started by us

standard 08:26:42.551901+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:43.651998+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:44.750392+0100 racoon IKE Packet: transmit success. (Phase 1 Retransmit).

standard 08:26:44.750534+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000

standard 08:26:44.750612+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:45.849827+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:46.949911+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:47.826864+0100 racoon IKE Packet: transmit success. (Phase 1 Retransmit).

standard 08:26:47.826919+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000

standard 08:26:48.049956+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:49.149791+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:50.249824+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:51.116931+0100 racoon IKE Packet: transmit success. (Phase 1 Retransmit).

standard 08:26:51.116967+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000

standard 08:26:51.349793+0100 racoon CHKPH1THERE: no established ph1 handler found

standard 08:26:51.561520+0100 racoon vpn_control socket closed by peer.

standard 08:26:51.561544+0100 racoon received disconnect all command.

standard 08:26:51.561578+0100 racoon IPSec disconnecting from server 52.146.154.95

standard 08:26:51.561608+0100 racoon in ike_session_purgephXbydstaddrwop... purging Phase 2 structures

standard 08:26:51.561635+0100 racoon Phase 2 sa expired 192.168.1.53-52.146.154.95

standard 08:26:51.561657+0100 racoon state changed to: Phase 2 expired

standard 08:26:51.561697+0100 racoon in ike_session_purgephXbydstaddrwop... purging Phase 1 and related Phase 2 structures

standard 08:26:51.562447+0100 racoon IPsec-SA needs to be purged: ESP 192.168.1.53[0]->52.146.154.95[0] spi=1224736768(0x49000000)

standard 08:26:51.562503+0100 racoon ISAKMP-SA expired 192.168.1.53[500]-52.146.154.95[500] spi:f465dd260cf2a981:0000000000000000

standard 08:26:51.562527+0100 racoon state changed to: Phase 1 expired

standard 08:26:51.562550+0100 racoon no ph1bind replacement found. NULL ph1.

standard 08:26:51.563059+0100 racoon vpncontrol_close_comm.

From what I can see, the vMX is not even responding. This is also evident from the fact that there is nothing in the Event Log about Client VPN establishment.
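The "not even responding" reading of the log can be checked mechanically. The following is a minimal sketch, assuming that the two 16-digit hex fields racoon prints after "Phase 1 packet" are the initiator and responder cookies, and that an all-zero responder cookie means no reply has been received yet. The function name `phase1_unanswered` is made up for illustration.

```python
import re

# Matches racoon lines such as:
#   "... racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000"
# Assumption: the two fields are initiator cookie : responder cookie.
COOKIE_RE = re.compile(r"Phase 1 packet ([0-9a-f]{16}):([0-9a-f]{16})")


def phase1_unanswered(log_text: str) -> bool:
    """Return True if Phase 1 packets were sent and every one of them
    still carries an all-zero responder cookie."""
    cookies = COOKIE_RE.findall(log_text)
    if not cookies:
        return False  # no Phase 1 packets logged, nothing to conclude
    return all(responder == "0" * 16 for _, responder in cookies)


sample = """\
standard 08:26:41.554889+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000
standard 08:26:44.750534+0100 racoon Resend Phase 1 packet f465dd260cf2a981:0000000000000000
"""

print(phase1_unanswered(sample))  # True for the two lines above
```

A `True` result only says that the client never logged a reply. It does not say where along the path the packets were dropped.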

So I think(!) that there is a route missing from Azure into my vMX, but I have no idea what that should be. My vMX can easily ping out. I can even ping my own public IP address at home.

I have created a Route Table, which only contains 1 route: the ClientVPN subnet pointing to the IP address of the vMX.

I also have a VNet with the subnet of the vMX and ClientVPN, although I'm not certain this should be necessary yet, not until I need to access services in Azure.

Both the VNet and Route Table are in the same Resource Group as the vMX Managed App.

I did not allow the vMX Managed App to create VNets and Subnets. I did that on my own as well.

I've tried setting the ClientVPN on my Mac to both Full Tunnel and Split Tunnel, to no avail.

Looking at the NIC for the vMX VM, there are no NSGs applied, so that should be OK.

Any ideas on what I'm doing wrong?

Like what you see? - Give a Kudo ## Did it answer your question? - Mark it as a Solution 🙂

All code examples are provided as is. Responsibility for Code execution lies solely your own.


I've also done a traceroute from my own Mac. It seems that it ends somewhere in Microsoft Azure.

rbnielsen@The-Gibson ~ % traceroute 52.146.154.95

traceroute to 52.146.154.95 (52.146.154.95), 64 hops max, 52 byte packets

1 192.168.2.1 (192.168.2.1) 4.767 ms 2.304 ms 2.222 ms

2 192.168.1.1 (192.168.1.1) 3.875 ms 2.720 ms 3.169 ms

3 lo0-0.vsk2nqe30.dk.ip.tdc.net (2.106.92.193) 8.201 ms 8.336 ms 8.652 ms

4 ae2-0.fhnqe11.dk.ip.tdc.net (83.88.16.207) 9.542 ms 8.354 ms 9.115 ms

5 ae1-0.alb2nqp8.dk.ip.tdc.net (83.88.19.119) 22.806 ms 16.014 ms *

6 104.44.37.83 (104.44.37.83) 18.571 ms 16.465 ms 25.737 ms

7 ae24-0.ear01.ham30.ntwk.msn.net (104.44.24.21) 21.265 ms 21.480 ms 22.874 ms

8 be-21-0.ibr02.ham30.ntwk.msn.net (104.44.21.17) 44.815 ms

be-20-0.ibr01.ham30.ntwk.msn.net (104.44.21.14) 45.915 ms

be-21-0.ibr02.ham30.ntwk.msn.net (104.44.21.17) 44.947 ms

9 be-4-0.ibr06.ams06.ntwk.msn.net (104.44.16.131) 45.002 ms 45.383 ms 44.945 ms

10 be-6-0.ibr02.ams21.ntwk.msn.net (104.44.18.190) 45.524 ms 46.605 ms 45.491 ms

11 be-9-0.ibr02.dub08.ntwk.msn.net (104.44.19.214) 45.360 ms

be-11-0.ibr02.lon24.ntwk.msn.net (104.44.16.2) 45.643 ms

be-9-0.ibr01.lon24.ntwk.msn.net (104.44.18.144) 174.930 ms

12 ae122-0.icr02.dub08.ntwk.msn.net (104.44.11.82) 45.882 ms

be-8-0.ibr02.dub08.ntwk.msn.net (104.44.17.90) 45.684 ms

ae122-0.icr02.dub08.ntwk.msn.net (104.44.11.82) 45.110 ms

13 ae120-0.icr01.dub08.ntwk.msn.net (104.44.11.86) 59.649 ms * *

14 * * *

^C


You should be able to ping the public IP address of the vMX.

I'm guessing the Azure firewall rules are not allowing the traffic.


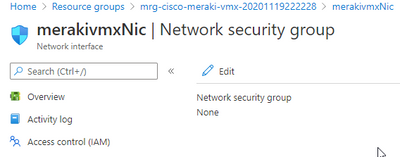

Network security group.


And in the NSG, there are no rules, whatsoever.


Do I need to create a Firewall in Azure, in the same resource group as the vMX?

My vMX came online and connected to the Dashboard without a Firewall in the first place.


No. There will be an existing NSG (network security group) controlling inbound access. You'll want to add ICMP, UDP/500 and UDP/4500.
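To make the suggested rule set concrete, here is a small sketch that models the three inbound allow rules and the "lowest priority number wins, otherwise deny" evaluation. It is a plain Python model, not the Azure SDK or CLI. The rule names and priority values are hypothetical, and whether such rules can actually be attached depends on the deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    name: str       # hypothetical rule name
    priority: int   # lower number is evaluated first
    protocol: str   # "Udp", "Icmp", "Tcp" or "*"
    port: str       # destination port, or "*" for any
    allow: bool


# The three inbound rules suggested above (names and priorities are made up).
RULES = [
    InboundRule("allow-icmp", 100, "Icmp", "*", True),
    InboundRule("allow-ike", 110, "Udp", "500", True),
    InboundRule("allow-ipsec-natt", 120, "Udp", "4500", True),
]


def is_allowed(rules, protocol, port):
    """Return the verdict of the first matching rule in priority order.
    Traffic that matches no rule is treated as denied."""
    for rule in sorted(rules, key=lambda r: r.priority):
        proto_match = rule.protocol in ("*", protocol)
        port_match = rule.port in ("*", str(port))
        if proto_match and port_match:
            return rule.allow
    return False


print(is_allowed(RULES, "Udp", 500))   # True
print(is_allowed(RULES, "Udp", 4500))  # True
print(is_allowed(RULES, "Tcp", 22))    # False
```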


I can't find any NSGs.

This is the only place I have, where I can choose Network Security Groups.


What happens if you edit it?


Not much.

Now, this is a test setup on a "Free Trial Version".

Could it be that I'm not being allowed the full feature set?


You probably need to define the network security group somewhere else, then.

There are no restrictions on the trial version.


There really isn't any other place I can define the network security groups.

There's the Resource Group shown above with the VM, NIC, Public IP and Disk, and then there is an RG with the Managed App, VNet and a Route Table. Finally, there is a NetworkWatcherRG in which I can't really make any changes.

The 'mrg-cisco-meraki-vmx-XX' is the one that gets created when deploying the Meraki Managed App in Azure, and it seems to be Read Only, so I can't really modify its settings.


So, I dug a bit deeper into this, and had the opportunity to have a conference call with both Meraki Support and Microsoft Azure Support.

It seems that the main reason why I couldn't get ClientVPN to work was that ports 500 and 4500 were being blocked. I was not able to open those ports by applying an NSG, due to a vendor policy from Meraki on the vMX RG.

Also, it seems that the Public IP SKU deployed by the managed app was randomly being chosen as a "Standard" IP SKU, which apparently has some ports blocked by default. If the deployed IP SKU is "Basic", ClientVPN will work.

The only way around this is a redeployment of the vMX.

I'll probably add some more on this, after I've done some more testing, and sharing these results with Meraki Support.


Hi rbnielsen, your post is interesting. I'm facing the same issue with vMX and VPN Client.

Is the Basic IP SKU the only way to make it work, or is there any other alternative?

Please let me know.

Have a great day.

Regards.

David


Hey,

As far as I know, there is no alternative. My ticket is still open with the Meraki development team, and there is no update yet.

You'll need to deploy the vMX in Availability Zone "None" to get a Basic IP SKU, in order to get ClientVPN to work on a vMX.


@rhbirkelund, did you manage to redeploy the vMX? I faced the same problem, and since the only workaround is redeployment, I'd like to know if there is a recommended way to redeploy. I noticed that some redeployments ran into problems and ended up with the Meraki support team. In my case the vMX is in a production environment, and there is not much tolerance for support ticket response times.


@UmutYasar, redeploying the vMX isn't that big of a deal. It's just deleting the managed app from Azure and (if you feel like it) deleting the Resource Group.

I have yet to find out if there is anything that can be done once the vMX has been deployed and is in production. Like I said, my ticket with Meraki Support is still open, waiting for them to determine a solution that doesn't necessarily require a redeployment.

Unfortunately, I suspect that is the only solution.


I deployed a vMX today and the default was the "Basic" public IP SKU so I didn't run into the UDP 500 and 4500 issue.

Client VPN was failing to connect to my vMX. I was getting a Windows Error 809. I fixed it following the instructions in this document:

https://documentation.meraki.com/MX/Client_VPN/Guided_Client_VPN_Troubleshooting#Windows_Error_809

- For Windows Vista, 7, 8, 10, and 2008 server:

HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\PolicyAgent

RegValue: AssumeUDPEncapsulationContextOnSendRule

Type: DWORD

Value data: 2

Base: Decimal
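For anyone who prefers importing the value over editing it by hand, the sketch below builds the text of a `.reg` file for the key and value listed above. It only produces the text and does not touch the registry. The function name `build_reg_file` is made up for illustration, and the result should be reviewed before importing it on a real machine.

```python
# Key, value name and data are taken from the post above.
KEY = r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\PolicyAgent"
VALUE_NAME = "AssumeUDPEncapsulationContextOnSendRule"
VALUE_DATA = 2  # decimal 2, written as an 8-digit hex DWORD below


def build_reg_file(key: str, name: str, data: int) -> str:
    """Return .reg file text that sets one DWORD value under `key`."""
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{key}]",
        f'"{name}"=dword:{data:08x}',
        "",
    ]
    return "\r\n".join(lines)


print(build_reg_file(KEY, VALUE_NAME, VALUE_DATA))
```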

I hope this helps someone.


Same issue here, tried it 2 weeks ago, ran a packet capture and vMX was getting the traffic but couldn't connect.


Make sure you reboot after the registry change.

If it still doesn't work I suggest connecting to a phone hotspot too just to eliminate any packet issues from your local firewall or ISP.


Hello,

Is there any alternative to redeploying the vMX, please?

I'm in the same situation. I have a Basic SKU on my public address.

Thank you!


There is no other alternative. It has to be re-deployed.


I contacted support last month.

I inquired whether the following matter is a bug or a specification.

[Topic Summary]

* If AZ is set to None, the Public IP SKU is deployed as Basic. Client VPN works when SKU is Basic.

* If AZ is set to 1 - 3, the Public IP SKU is deployed as Standard. Client VPN does not work when SKU is Standard.
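The two observations in this summary can be written down as a small lookup. This is only a restatement of the behavior reported in this thread, not documented Meraki or Azure behavior, and the function names are made up for illustration.

```python
def reported_public_ip_sku(availability_zone: str) -> str:
    """Map the Availability Zone chosen at deployment to the Public IP SKU
    that posters in this thread reported getting."""
    if availability_zone == "None":
        return "Basic"
    if availability_zone in ("1", "2", "3"):
        return "Standard"
    raise ValueError(f"unexpected availability zone: {availability_zone!r}")


def client_vpn_reported_working(availability_zone: str) -> bool:
    """Per the thread, Client VPN worked only with the Basic SKU."""
    return reported_public_ip_sku(availability_zone) == "Basic"


for az in ("None", "1", "2", "3"):
    print(az, reported_public_ip_sku(az), client_vpn_reported_working(az))
```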

This matter appears to be undetermined / undefined.

Therefore,...

It is not a bug, as it is not yet determined / undefined.

It is not a specification, as it is not yet determined / undefined.

Since it is neither a bug nor a specification, it does not appear to be documented.

I have provided feedback to be documented, but I don't know what will happen.


They need to update the documentation to only say to use an AZ of "none".
