vMX 100 in Azure working as a hub but failing to pass traffic between other peered subscriptions

Hi all, apologies for the rather large post, but it's a complicated beast.

We have a vMX up and running in Azure (following the Meraki Azure vMX guide), and it's happily passing traffic from our physical SD-WAN boxes into and out of a number of Azure subscriptions peered to it. But as we're migrating more services into Azure, I've run across an issue that's frankly driving me bonkers. Our setup looks a bit like this:

Sub A <-- VNET Peering --> Sub B (The Hub, vMX lives here) <> SD-WAN to our on-premise locations

Sub C <-- VNET Peering --> Sub B

Sub D <-- VNET Peering --> Sub B

etc...

Our issue came to light when we started trying to access a service by passing through Sub B, for example a DNS server in Sub C. Traffic to or from the SD-WAN is no issue at all, but from Sub A or D the DNS traffic (or HTTP, as a second simple test) goes to ground. Frustratingly, our trusted test tools ping and traceroute "just work"... Grrrr

If I spin up a temporary Linux box with HTTP and DNS in Sub B, then Sub A, C and D can all reach it, as can any location connected over SD-WAN. Looking at the Effective Route Table for a server in Sub A, it seems traffic that's using the "Default" peer route out of Sub A to Sub B is happy, but traffic that's using the "User" route table to get to the vMX in Sub B seems to fail. The vMX sees the traffic arrive in a local packet capture, but the traffic never reaches the destination and the vMX never sees any response. That makes me think the vMX is getting in the way, but for the life of me I can't see how.
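To make the "Default" versus "User" distinction concrete, here is a minimal sketch of how Azure picks a next hop from an effective route table: longest prefix match first, and on a tie a user-defined route wins over a BGP route, which wins over a system ("Default") route. The address ranges, the vMX LAN IP and the `select_route` helper are all made up for illustration; they are not our real values or any Azure API.

```python
import ipaddress

# Tie-break order when two routes have the same prefix length:
# user-defined routes beat BGP routes, which beat system ("Default") routes.
SOURCE_PRIORITY = {"User": 0, "BGP": 1, "Default": 2}


def select_route(routes, destination):
    """Return the route a packet to `destination` would use, or None."""
    dest = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dest in ipaddress.ip_network(r["prefix"])]
    if not candidates:
        return None
    # Longest prefix wins; the route source only breaks ties.
    return min(
        candidates,
        key=lambda r: (
            -ipaddress.ip_network(r["prefix"]).prefixlen,
            SOURCE_PRIORITY[r["source"]],
        ),
    )


# Hypothetical address plan: Sub A = 10.0.0.0/16, Sub B = 10.1.0.0/16, Sub C = 10.2.0.0/16.
VMX_LAN_IP = "10.1.0.4"  # assumed LAN IP of the vMX in Sub B

sub_a_routes = [
    {"prefix": "10.0.0.0/16", "source": "Default", "next_hop": "VNet"},
    {"prefix": "10.1.0.0/16", "source": "Default", "next_hop": "VNetPeering"},
    {"prefix": "10.2.0.0/16", "source": "User", "next_hop": VMX_LAN_IP},
    {"prefix": "0.0.0.0/0", "source": "Default", "next_hop": "Internet"},
]

to_hub = select_route(sub_a_routes, "10.1.0.10")    # test box in Sub B
to_sub_c = select_route(sub_a_routes, "10.2.0.53")  # DNS server in Sub C

print(to_hub["next_hop"], to_sub_c["next_hop"])
```

In this made-up plan, the test box in Sub B is reached over the peering route directly, while the Sub C DNS server is only reachable via the user route that hands the packet to the vMX, which matches the split between what works and what fails.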

Of course, the simple answer is to move the servers we need into Sub B. But rebuilding our multi-layered ADFS setup in a new subscription fills me with dread (it's currently in a Sub that's connected to our WAN using a very expensive ExpressRoute link we would like to kill), so I'd rather just peer it in. The catch is that the DNS servers that also sit in that Sub are relied on by the other Azure-hosted subscriptions, so everything dies when we do. "It's always DNS" after all.

I spent an hour and a half on a call with MS support this morning getting packet captures which seem to have failed to catch the traffic in question so while I try to work out why, I thought I'd ask the awesome Meraki community to see if anything jumps out at anyone.

>but traffic that's using the "User" route table to get it to the vMX in Sub B seems to fail. The vMX see the traffic arrive in a local packet capture but then it never reaches the destination or sees any response which makes me think that maybe the vMX is getting in the way but for the life of me I can't see how.

To be clear, on a packet capture on the VMX you see the traffic arriving from Sub B, but the VMX fails to forward it over AutoVPN to the final remote destination?

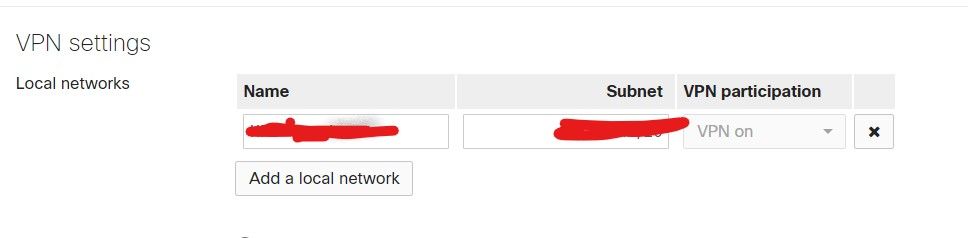

Does the VMX have routes in its local route table for the remote Azure subnets (like SubB)? This is under:

Security & SD-WAN/Site-to-Site-VPN.

Hi Philip, thanks for the quick reply.

We have indeed. Each of the Azure subnets we're making available over SD-WAN has an entry on the vMX and for traffic to/from any of our SD-WAN office locations, to/from any of the Azure subscriptions, we have end to end connectivity passing through the vMX and onto the relevant peered subscriptions. Where we seem to be getting stuck is passing traffic between 2 subs that are peered with the vMX Sub B.

Each sub has a local route table attached to the relevant server subnet, and these point to the LAN IP of the vMX as the next hop. The peering connection between the subs seems to make sure that connectivity is working. If I send traffic from Sub A to Sub C, the next hop leaving Sub A will be the vMX LAN IP, and the vMX sees that traffic arrive in a packet capture but never sees a response. The destination machine in Sub C (a Linux box with tcpdump, for simplicity) never sees the packets arrive if they come from another Azure peered sub, but has no issues if the source of the traffic is an SD-WAN connected location.

But you may have just sent me in a different direction... The Meraki Azure vMX setup guide has you creating a route table to attach to the vMX subnet, but it doesn't mention having to do that anywhere else. I've assumed, up to this point, that I would have to add a route table individually to each peered subscription subnet, but I now wonder if all I need is to activate the gateway transit option and not try to force traffic across the connection with an attached route table. OK, that's at least something I can test, I think, without breaking too many things. But I need another coffee first.

Will report back as soon as I've updated things.

Having done a little more testing this morning, I can confirm that if I remove the user-assigned route table from one of the peers, it's then only able to reach the internet or the hub subscription, thanks to the route put in place by Azure when the peering connection is brought up. Ticking the "Configure gateway transit settings" check box makes no difference to those routes. The last remaining peering option on the list is "Configure Remote Gateway settings", but that's greyed out because Azure doesn't believe the remote virtual network has a gateway. It seems the vMX isn't classified as a gateway. I'm still not sure whether that would make a difference, but I will continue to dig and Google.

I've had this on the back burner for a couple of weeks for one reason or another but am back at it today.

Something I've noticed, and I don't remember seeing before, is that the vMX seems to be performing NAT on the traffic that's passing between the subscriptions.

A quick recap, our network in Azure looks a little like this - Sub A > Sub B < Sub C. A and C are VNET peered to B and each have a local route table for our SD-WAN subnets associated pointing to the LAN IP of the vMX in Sub B.

Sub B hosts the vMX and is happily talking to our SD-WAN. It's also passing traffic from any client across the SD-WAN through Sub B and on to servers in Sub A or Sub C with no issues at all. Our problem lies in getting traffic between Sub A and Sub C.

What I noticed today is that although I can ping from A to C, the packet capture on the vMX as well as on the destination server (tcpdump) shows the traffic being NAT'd, with the source showing as the vMX LAN IP instead of the source server. The source server gets a reply, so on the face of it connectivity seems to be working. But if I hop up the stack with an HTTP connection, the same NAT appears to happen on the vMX, and I even see a reply to the vMX LAN IP, but then the vMX seems to have no idea what to do with it and the traffic never reaches the initial source machine.
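For anyone wanting to check the same thing in their own captures, this is roughly the comparison I did by eye, written as a small sketch. It takes a list of packets sent from the source server and a list seen at the destination, and flags any packet that only shows up at the far end with its source swapped for a suspected NAT address. The `Packet` type, the `find_source_rewrites` helper and all the IP addresses are invented for illustration; real captures would need parsing out of tcpdump or pcap files first.

```python
from typing import List, NamedTuple


class Packet(NamedTuple):
    src: str
    dst: str
    proto: str
    dport: int


def find_source_rewrites(
    sent: List[Packet], received: List[Packet], suspect_ip: str
) -> List[Packet]:
    """Return sent packets that arrived only with `suspect_ip` as their source."""
    rewritten = []
    for pkt in sent:
        seen_unchanged = pkt in received
        seen_translated = pkt._replace(src=suspect_ip) in received
        if seen_translated and not seen_unchanged:
            rewritten.append(pkt)
    return rewritten


# Hypothetical addresses, not our real ones.
SERVER_A = "10.0.0.10"   # source server in Sub A
SERVER_C = "10.2.0.53"   # destination server in Sub C
VMX_LAN_IP = "10.1.0.4"  # assumed LAN IP of the vMX in Sub B

sent_from_a = [Packet(SERVER_A, SERVER_C, "tcp", 80)]
seen_at_c = [Packet(VMX_LAN_IP, SERVER_C, "tcp", 80)]

rewrites = find_source_rewrites(sent_from_a, seen_at_c, VMX_LAN_IP)
for pkt in rewrites:
    print(f"{pkt.src} -> {pkt.dst}:{pkt.dport} arrived with source {VMX_LAN_IP}")
```

This only matches on source, destination, protocol and destination port, so it is a rough indicator rather than proof of NAT.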

I didn't think a vMX in one-armed concentrator mode (the only choice you get with a vMX in Azure) was supposed to perform NAT at all. It's really confusing.
