Switches/clients offline after MX250 failover (NAT HA setup)

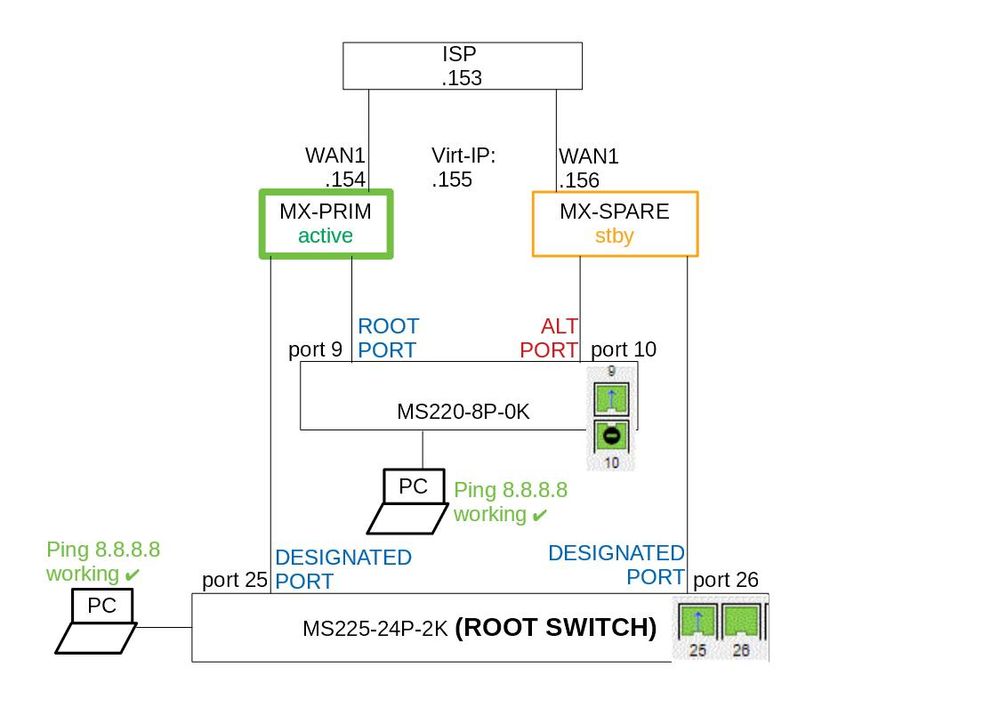

Hello. I have two MX250 firewalls set up in a NAT HA failover pair, using the network-connected design for VRRP heartbeats.

Both MX250s have one link connected to WAN1 in the same subnet, and I'm using the virtual IP for client traffic headed to the internet.

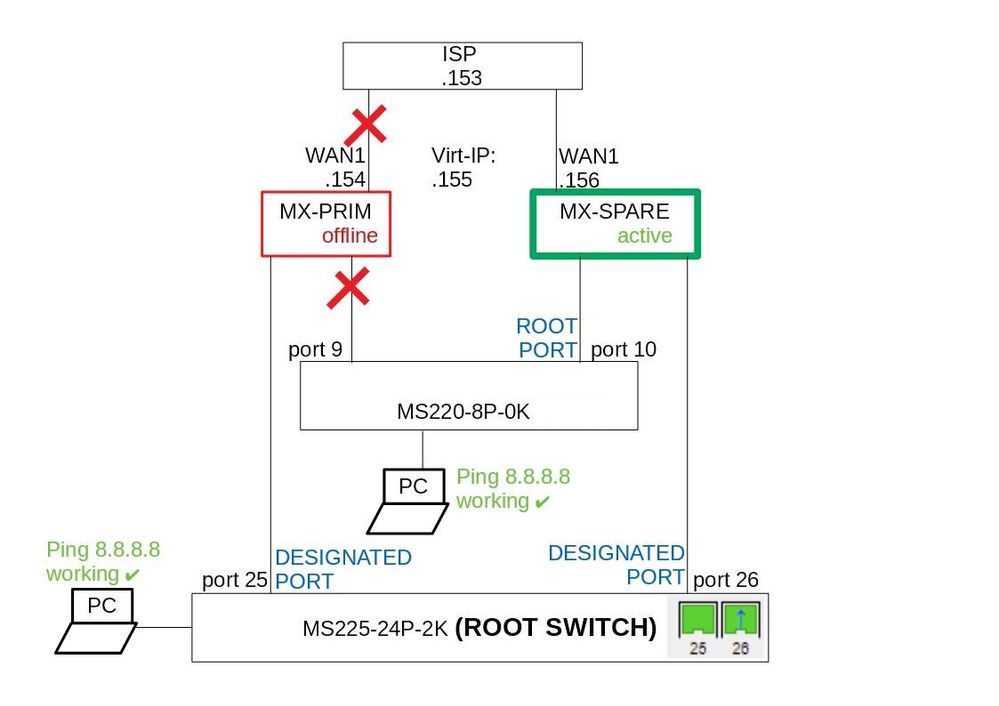

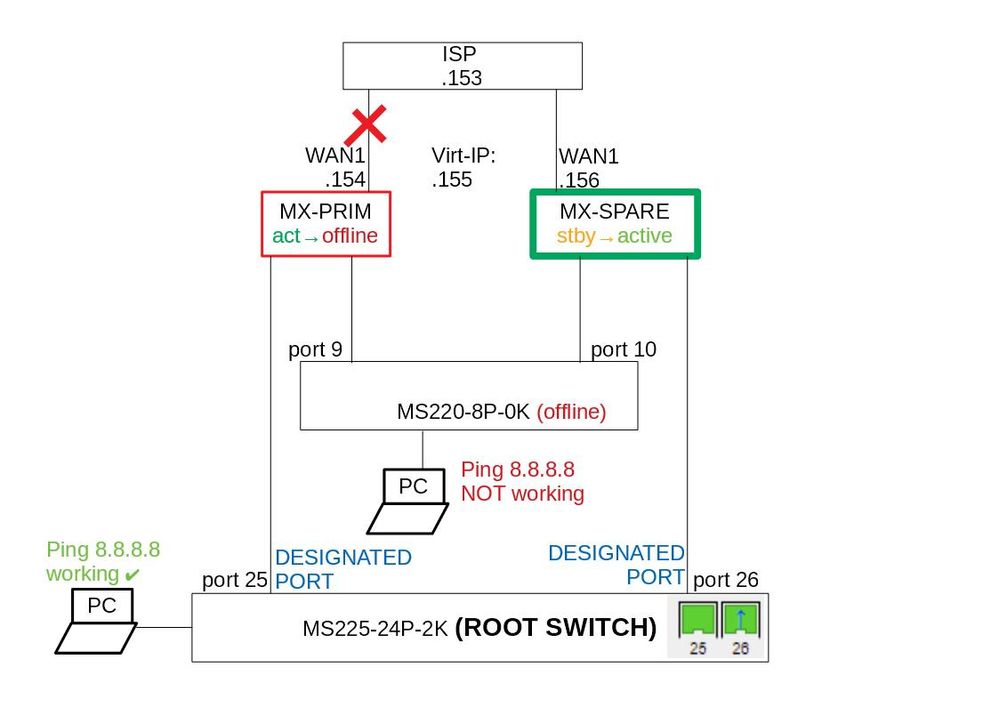

The problems start when I disconnect WAN1 on MX250-Primary-Master: MX250-Spare takes over the master role within seconds. However, most clients and switches do not regain internet connectivity. The switches go offline and the clients connected to them have no internet, with the exception of the root switch, MS225-24P-2K. The root switch regains internet connectivity, and clients behind the root switch can also access the internet. But the rest of the switches and clients stay offline and cannot even ping the gateway (the gateways are on the MX250s). I have included two illustrations: the working setup and the non-working setup after MX250 failover. I also have an open case with Meraki, but no solution yet.

Any ideas what is wrong? Thank you.

Best regards

Heiki

---

What IP is set as the gateway for the MS220 and the MS225? I wonder if the MS220's gateway points to an interface that exists only on the primary?

---

Based on that diagram, you kind of have two root switches, since they both uplink directly to the MX cluster. Bummer we can't see what ports 9/10 look like on the MS220 in the failed state. Maybe check what the ports on the MXs look like in the failed vs. working state. It truly feels like STP isn't doing its job. It may be worth going to Switch > Switch Settings and manually specifying a root bridge.

---

I like where Adam is going with this, because that's where my mind went at first too. But if you think about it, the LAN doesn't change when you disconnect the WAN link, so by all rights the STP topology should not change either. On the 8-port switch, port 9 should still be forwarding and port 10 should still be blocking. This makes the path to the gateway kinda wonky, but it _should_ still work. So I suspect something else is at play here (maybe the now-standby MX isn't forwarding ARP replies from the now-active MX to the 8-port switch???).

I have a couple of very similar topologies built, but we use the Meraki-recommended practice of connecting the MXs directly to each other.

I am not really a fan of this, as the MXs don't participate in STP, but it does seem to work and fails over correctly. It doesn't explain what you are seeing, but it should be a simple way to stabilize things so you actually have a viable failover.

---

@jdsilva I personally find that the published Meraki design (reproduced below) does not take enough factors into account.

For example, if you are not using a virtual IP, the extra VRRP cable adds nothing. But it does introduce several problems.

By having the VRRP cable in place, you create a spanning tree loop, forcing spanning tree to block one of the forwarding paths. You often end up in a situation where the forwarding path is to the standby MX, which then has to forward everything again via the VRRP link. If the standby MX is rebooted (that should be safe, right? It's a standby MX), you get an outage, because spanning tree is blocking the other forwarding path. You then have to wait for spanning tree to recognise this and enable the forwarding path.

So those are the first two issues: traffic being forwarded via the standby for no good reason, and reduced uptime because you can't safely reboot the standby. Cisco Meraki could substantially mitigate these issues by having the MX speak Rapid Spanning Tree, but it doesn't.

Let's consider the case where there is no VRRP cable (and no virtual IP configured):

Now there is no spanning tree loop. A connected switch will forward on both uplinks to the MXs. Yay, the spanning tree issues are gone.
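One quick way to see the loop argument is to count independent cycles in the layer-2 graph: links minus nodes plus connected components (E - V + C). This is a minimal sketch under one stated assumption: because the MXs don't run STP, each MX is treated as a plain layer-2 node that forwards between its LAN ports. All node names are hypothetical.

```python
def independent_loops(nodes, links):
    """Count independent cycles (links - nodes + connected components) in an undirected graph."""
    parent = {n: n for n in nodes}

    def find(n):
        # Follow parent pointers to the component's representative (with path halving).
        while parent[n] != n:
            parent[n] = parent[parent[n]]
            n = parent[n]
        return n

    for a, b in links:
        parent[find(a)] = find(b)  # merge the two endpoints' components

    components = len({find(n) for n in nodes})
    return len(links) - len(nodes) + components


# Assumption: each MX behaves like a plain L2 node, since it does not run STP.
nodes = ["MX-Primary", "MX-Spare", "Switch"]
uplinks = [("MX-Primary", "Switch"), ("MX-Spare", "Switch")]
vrrp_cable = [("MX-Primary", "MX-Spare")]

print("with VRRP cable:", independent_loops(nodes, uplinks + vrrp_cable))  # 1
print("without VRRP cable:", independent_loops(nodes, uplinks))           # 0

# Hypothetical larger layout: five switches, each dual-homed to both MXs, no MX-to-MX cable.
switches = [f"SW{i}" for i in range(1, 6)]
big_nodes = ["MX-Primary", "MX-Spare"] + switches
big_links = [(mx, sw) for sw in switches for mx in ("MX-Primary", "MX-Spare")]
print("five dual-homed switches:", independent_loops(big_nodes, big_links))  # 4
```

A single switch dual-homed to two MXs has no loop until the MX-to-MX cable is added. Once several switches are each dual-homed, loops exist even without that cable, so spanning tree still has to block something.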

Failing over between the MX's now happens as fast as a VRRP transition.

Your fail over cases are:

- Switch fails: you're screwed, nothing can talk to anything.

- Primary MX fails: the second MX takes over VRRP and users barely notice anything.

- Standby MX fails: users don't notice anything.

- Primary MX WAN links fail: the primary MX stops talking VRRP and the standby takes over. Users barely notice.

- Standby MX WAN links fail: no one notices.

- LAN port on the primary MX fails: both MXs go master/master. If you are using a virtual IP, you now have an outage. Otherwise, users retain connectivity via the standby MX. LAN port failures are rare compared to other failures.

- LAN port on the standby MX fails: both MXs go master/master. If you are using a virtual IP, you now have an outage. Otherwise, users retain connectivity via the primary MX. LAN port failures are rare compared to other failures.
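The failover cases above can be captured in a small truth table. This is a simplified model built only from the rules stated in this post, not from Meraki documentation. It assumes the primary is master, and sends VRRP advertisements, while it is alive and its WAN is up. It also assumes the spare becomes master whenever it is alive but cannot hear those advertisements over the LAN. The switch-failure case is left out, since nothing works there.

```python
from dataclasses import dataclass


@dataclass
class MXState:
    alive: bool = True
    lan_up: bool = True
    wan_up: bool = True


def vrrp_roles(primary: MXState, spare: MXState) -> tuple[bool, bool]:
    """Return (primary_is_master, spare_is_master) under the simplified rules above."""
    # Assumption: the primary keeps the master role (and advertises) only with a working WAN.
    primary_master = primary.alive and primary.wan_up
    # Advertisements reach the spare only if both LAN ports are up.
    adverts_heard = primary_master and primary.lan_up and spare.lan_up
    spare_master = spare.alive and not adverts_heard
    return primary_master, spare_master


cases = {
    "primary MX fails": (MXState(alive=False), MXState()),
    "standby MX fails": (MXState(), MXState(alive=False)),
    "primary WAN links fail": (MXState(wan_up=False), MXState()),
    "standby WAN links fail": (MXState(), MXState(wan_up=False)),
    "primary LAN port fails": (MXState(lan_up=False), MXState()),
    "standby LAN port fails": (MXState(), MXState(lan_up=False)),
}

for name, (primary, spare) in cases.items():
    p, s = vrrp_roles(primary, spare)
    note = "  <- master/master (outage if a virtual IP is used)" if p and s else ""
    print(f"{name:24} primary={'M' if p else '-'} spare={'M' if s else '-'}{note}")
```

Only the two LAN-port cases end up master/master, which is why they are the ones that hurt when a virtual IP is configured.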

---

Yes, previously I had the MXs directly connected, as the Meraki documentation presents that as best practice.

However, then I had other problems: when disconnecting any switch's primary uplink (port 25), the switch and the clients behind it lost internet connectivity. What I found weird was that the root switch had one port in STP blocking state, which should not happen on a root switch (all its ports should be designated-forwarding unless a loop exists). Googling around, I found out that MXs do not participate in spanning tree, so the directly connected MXs created a spanning tree loop, which caused the root switch to block one of its ports. I see that as bad design and suspected it could cause problems (although spanning tree was working and loops were blocked). After removing the direct link between the MXs, the problem was solved: the root switch had both its ports designated-forwarding, and there were no more problems when removing a switch's primary uplink.

The problem when removing MX250-Primary's WAN1 uplink existed in both cases: the directly connected and the network-connected design.

Actually, I have 5 switches connected to the MX firewalls via 10 Gbit links (all trunks with the same VLANs), so the direct link achieves nothing: most likely at least one of the five switches has both uplinks up, to the Primary and the Spare MX, and carries the VRRP heartbeats in each VLAN. The direct link would also only be 1 Gbit, which would be a bottleneck if a switch's uplink to the primary (active) MX goes down (solvable with a 10G twinax cable, at extra cost).

All switches have manually configured STP bridge priorities (no two are equal; each switch has a unique priority).

For some reason, traffic does not flow along the path non-root switch -> MX-Prim (offline) -> root switch -> MX-Spare-master.

One more diagram: if I disconnect a switch's primary uplink, port 9 (the root port), then port 10 goes from ALT to root port and the switch regains connectivity to the cloud/internet. This does not explain much.

I am thinking of powering down the entire Meraki network of switches and MXs and then booting everything back up. Maybe that will resolve some quirks. I did recently upgrade the switch and MX firmware, but I believe the upgrade process rebooted each device.

Thank you all for input.

---

I did some packet captures, and I can see that broadcast/multicast traffic from the client successfully reaches the new active MX firewall (ARP requests are answered by the new active MX). However, unicast traffic does not even reach the root switch. The traffic path is the following:

ClientPC -> AP -> accessswitch -> MX250-Prim-offline -> RootSwitch -> MX250-Spare-ACTIVE

I will wait and see what the support thinks about this.

---

@heiki I'd forgotten about this thread! Oddly enough, I've also been revisiting the topic of MS-MX Internet Edge this week and I have a discovery that I think might be related here.

I have observed that when an MX is in standby mode it WILL NOT forward frames whose destination MAC address is the address of the VRRP virtual router. This effectively black-holes all traffic sent to the default gateway.

All other traffic seems to be forwarded normally. From the client that cannot reach the Internet, you should still be able to ping both switches and the other clients. At least I could in my testing; if you can't, I'd be interested to know.
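This observation fits the packet captures earlier in the thread: ARP from the client reaches the new master, but unicast to the gateway never reaches the root switch. The whole pattern can be reproduced with a very small forwarding model in which a standby MX discards frames addressed to the virtual router MAC and forwards everything else. The sketch below encodes only that reported behaviour, not how an MX actually works internally. The MAC value is a hypothetical placeholder, and the hop names follow heiki's traffic path.

```python
BROADCAST_MAC = "ff:ff:ff:ff:ff:ff"
GATEWAY_VMAC = "02:00:00:00:00:01"  # hypothetical virtual-router MAC, for illustration only

# heiki's failed-state path; each hop is (name, role).
PATH = [
    ("ClientPC", "host"),
    ("AP", "bridge"),
    ("AccessSwitch", "bridge"),
    ("MX250-Prim", "standby-mx"),  # lost WAN1, no longer master
    ("RootSwitch", "bridge"),
    ("MX250-Spare", "master-mx"),  # new master, owns the gateway address
]

# Clients behind the root switch never cross the standby MX.
ROOT_SWITCH_PATH = [("ClientPC", "host"), ("RootSwitch", "bridge"), ("MX250-Spare", "master-mx")]


def dropped_at(path, dst_mac):
    """Return the transit hop that discards the frame, or None if it reaches the master MX.

    Models only the reported behaviour: a standby MX does not forward frames whose
    destination MAC is the virtual router MAC; everything else is forwarded.
    """
    for name, role in path[1:-1]:  # transit hops only
        if role == "standby-mx" and dst_mac == GATEWAY_VMAC:
            return name
    return None


print("ARP request (broadcast):", dropped_at(PATH, BROADCAST_MAC))
print("unicast to gateway:     ", dropped_at(PATH, GATEWAY_VMAC))
print("root-switch client:     ", dropped_at(ROOT_SWITCH_PATH, GATEWAY_VMAC))
```

The broadcast gets through, the unicast dies at MX250-Prim before it reaches the root switch, and root-switch clients are unaffected. That matches the symptoms in the original post.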

Side Note: Meraki does not follow the RFC and uses the physical MAC address of the Primary MX as the MAC address of the virtual router. This means that when the Standby MX becomes Master it responds to the physical MAC of the Primary MX. You can observe this on the clients in the ARP table as the MAC for the GW will be the MAC of the Primary MX, and will not change when you initiate a failover.

**Edit:** Meraki actually uses a virtual MAC based on a Meraki OUI, shared by both MXs.
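For comparison with the note above, this is the address RFC 5798 (section 7.3) defines for an IPv4 virtual router: 00-00-5E-00-01-{VRID}. The helper names are hypothetical. Checking the gateway entry in a client's ARP table against this prefix is one way to confirm that an MX pair is not using the RFC address.

```python
def vrrp_ipv4_virtual_mac(vrid: int) -> str:
    """IPv4 virtual router MAC per RFC 5798, section 7.3: 00-00-5E-00-01-{VRID}."""
    if not 1 <= vrid <= 255:
        raise ValueError("VRID must be in the range 1-255")
    return f"00:00:5e:00:01:{vrid:02x}"


def looks_like_rfc_vrrp_mac(mac: str) -> bool:
    """True if mac uses the IANA VRRP prefix (IPv4 ...:01:xx or IPv6 ...:02:xx variant)."""
    normalized = mac.lower().replace("-", ":")
    return normalized.startswith(("00:00:5e:00:01:", "00:00:5e:00:02:"))


print(vrrp_ipv4_virtual_mac(1))    # 00:00:5e:00:01:01
print(vrrp_ipv4_virtual_mac(200))  # 00:00:5e:00:01:c8
```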

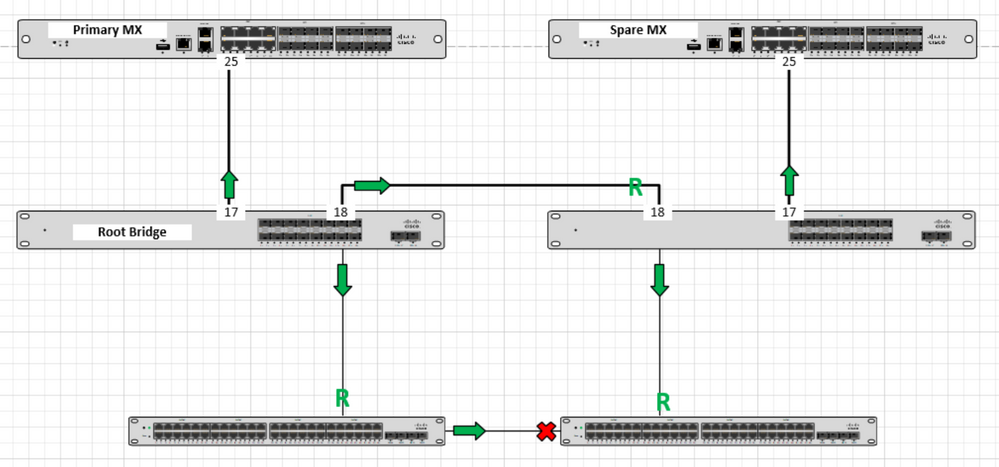

So, where does this leave us? Well, I'm going to change the way I connect this stuff so that there is never a path to the active MX that goes through the standby MX. This will eliminate the black-holing of traffic that occurs when traffic has to pass through the standby MX. I think this topology is our best bet going forward:

I will likely open a case on this too since this is incorrect behavior. I'm less concerned about the improper VRRP operation than I am about the Standby MX not forwarding traffic for one specific MAC address. You can't run VRRP with another device anyway so it's not that big a deal that Meraki has played fast and loose with the RFC (there's other things they didn't follow as well).

---

For us it is not easy to change the connections: all the optical patches from the switches run straight to the firewalls. There is no way to connect the switches to each other 🙂

I will let you know if I get an answer back from Meraki support.

---

@heiki There's another way you can manipulate this. When there's a failure, if you swap ports 25 and 26 on the root bridge, things start working again. By swapping the ports you force the disconnected switch to break the tie for its root port in favour of the path through the secondary, now active, MX.
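The cable-swap trick works because of the last tie-breakers in 802.1D root port selection. For each port, a non-root switch compares the received root bridge ID, then the total root path cost, then the sender's bridge ID, then the sender's port ID, and finally its own port ID. The lowest result wins. Assuming the MXs pass BPDUs through unchanged (they don't run STP), ports 9 and 10 on the access switch see identical BPDUs from the root switch except for the sender port (25 vs 26), so that field decides. The bridge ID, the equal port costs and the use of plain port numbers as port IDs are illustrative assumptions.

```python
from typing import NamedTuple


class Bpdu(NamedTuple):
    """Fields of a received configuration BPDU, in the order 802.1D compares them."""
    root_id: int          # root bridge ID (priority + MAC collapsed into one int)
    root_path_cost: int   # cost to the root advertised by the sender
    sender_bridge_id: int
    sender_port_id: int


def choose_root_port(received: dict[int, Bpdu], port_costs: dict[int, int]) -> int:
    """Return the local port with the lowest priority vector (lower is better)."""
    def vector(local_port: int) -> tuple:
        b = received[local_port]
        return (b.root_id,
                b.root_path_cost + port_costs[local_port],  # total cost via this port
                b.sender_bridge_id,
                b.sender_port_id,
                local_port)                                  # final tie-breaker: own port ID
    return min(received, key=vector)


ROOT_SWITCH_ID = 0x8000_0000_0000_0001  # hypothetical bridge ID of the root switch


def access_switch_root_port(root_port_via_prim: int, root_port_via_spare: int) -> int:
    """Root port on a non-root switch whose port 9 goes to MX-Prim and port 10 to MX-Spare.

    Assumes the MXs forward BPDUs unchanged and both 10G uplinks have equal cost.
    """
    received = {
        9: Bpdu(ROOT_SWITCH_ID, 0, ROOT_SWITCH_ID, root_port_via_prim),
        10: Bpdu(ROOT_SWITCH_ID, 0, ROOT_SWITCH_ID, root_port_via_spare),
    }
    return choose_root_port(received, {9: 2000, 10: 2000})


print(access_switch_root_port(25, 26))  # root switch port 25 faces MX-Prim: port 9 wins
print(access_switch_root_port(26, 25))  # after swapping 25/26: port 10 (via MX-Spare) wins
```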

I realize this is likely not useful at all... But I noticed this in my testing so I thought I'd mention it to you as a last resort to get things working if there was an outage.

@PhilipDAth I am not a fan of a topology where multiple switches uplink to both MXs. I think that introduces the potential for STP to become unstable with a topology that has a mesh of neighbours connected over PtP interfaces. I get the sense that's one of those things that would work fine... until it doesn't. Just my thoughts though.

---

The problem is that you need a loop. So you need either a link between the two MXs, or both MXs dual-connected to each switch.

Consider the case with a WAN VIP configured (as in this case).

If you lose the link between the primary MX and the switch, the primary will remain active on the outside, because it can still talk to the cloud. You are now dead, because all return traffic will go to the primary MX, which has no way to forward it to the internal network.

It also breaks HA NAT completely.

---

@PhilipDAth Crap. Good point.

I see the easiest way out here (in the topology I put forward) as simply stacking the distribution switches. However, between here and Reddit I've read too many stacking horror stories to comfortably move forward with that option...

Unfortunately, there are real problems with the dual-attached option as well, as illustrated by @heiki in this thread. If you ever have a failure such that traffic must pass through a standby MX to reach the master MX, that traffic is dead in the water.

---

@jdsilva If you use my method, where each MX is dual-connected to each switch, you will never get the case where traffic flows through the standby MX to get to the primary.

It solves that case as well.

---

P.S. Stacking more than four switches still makes me nervous. However, the 10.x software train (marked as beta) seems to have sorted these issues out. I'm finding the 10.x beta software better than the 9.x software.

---

@PhilipDAth That's not true at all. heiki's example above shows exactly how that can happen.

---

Meraki support was not of much help. Basically they require a distribution switch, directly connected to both the active and spare MX, to be the root switch, with all other switches connected to that distribution (root) switch, as in the Meraki documentation: https://documentation.meraki.com/MX-Z/Deployment_Guides/NAT_Mode_Warm_Spare_(NAT_HA)

I did not get a clear answer from Meraki on whether my design will be supported in the future.

I guess the only way to get this working is to redesign the current setup. I know I won't be buying a couple of MS425-32 switches to support all the 10Gbps links from the other switches 😄

---

@heiki wrote: Meraki support was not of much help. Basically they require a distribution switch, directly connected to both the active and spare MX, to be the root switch, with all other switches connected to that distribution (root) switch, as in the Meraki documentation: https://documentation.meraki.com/MX-Z/Deployment_Guides/NAT_Mode_Warm_Spare_(NAT_HA)

I did not get a clear answer from Meraki on whether my design will be supported in the future.

I guess the only way to get this working is to redesign the current setup. I know I won't be buying a couple of MS425-32 switches to support all the 10Gbps links from the other switches 😄

You're right, buying MS425-32 switches to support all the 10Gbps links from the other switches isn't the smartest idea. I think you should read through https://www.willette.works/mx-warm-spare/ if you're considering redesigning the setup.

---

@paulholloway201 wrote:

You're right, buying MS425-32 switches to support all the 10Gbps links from the other switches isn't the smartest idea. I think you should read through https://www.willette.works/mx-warm-spare/ if you're considering redesigning the setup.

Nope. That design is broken and should not be used.

---

Hi @jdsilva. I typically connect each MX to each switch. If you want to do HA NAT you need some kind of loop in this area.

---

Hi

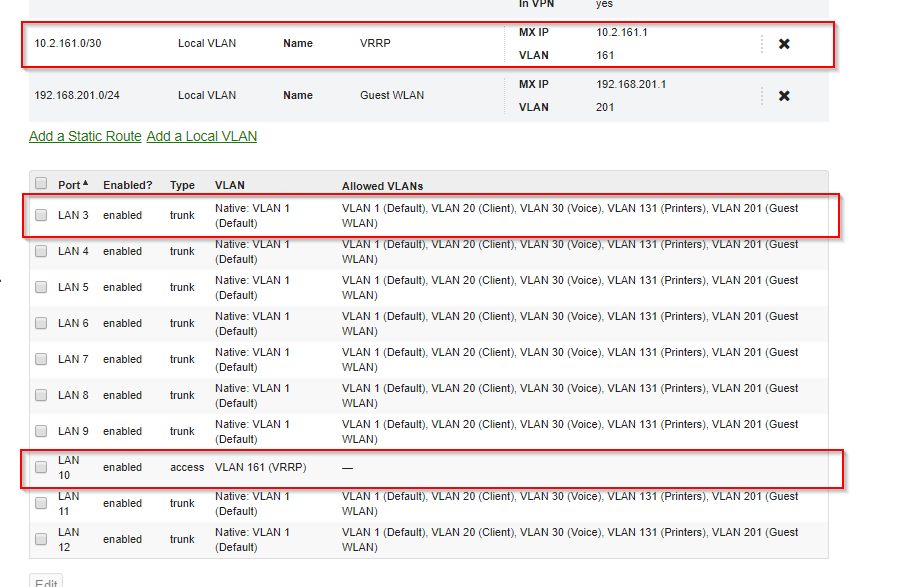

We (as an MSP) went through the same questions you are describing here. Basically, what bothered us was the loop you create if you follow the Meraki documentation on NAT Warm Spare.

Then we followed this: https://willette.works/mx-warm-spare/

It's basically the missing piece in the documentation. After reading the blog, this is what we did:

* Shut down the link between the MXs (it was a trunk allowing all VLANs, and since the MXs don't do STP, it was basically creating a loop).

* Then we created a VLAN on the MX with /30 addressing (e.g. 10.1.1.0/30) and configured the link between the MXes as a pure access port (not a trunk) in that VLAN (let's say VLAN 161).

Now this VLAN is purely for VRRP communication between the MXes, nothing else. It serves as a way to avoid dual-active scenarios.

* Then we pruned VLAN 161 from all other ports, especially those connecting to the switches.

* We also pruned this VLAN on the switch ports connecting to the MXs (just to be sure).

* Then we re-enabled the access port connecting both MXs directly (port 10).
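The /30 and pruning steps above can be sanity-checked with Python's standard `ipaddress` module. This is a sketch: VLAN 161 and 10.1.1.0/30 come from the post. The port inventory and the allowed-VLAN string format ("all" or comma-separated IDs and ranges) are hypothetical examples, not output from the Meraki dashboard or API.

```python
import ipaddress

VRRP_VLAN = 161                                    # VLAN ID from the example above
VRRP_SUBNET = ipaddress.ip_network("10.1.1.0/30")  # /30 from the example above

# A /30 has exactly two usable host addresses: one per MX (which MX gets which is arbitrary).
mx_primary_ip, mx_spare_ip = VRRP_SUBNET.hosts()


def allows_vlan(allowed: str, vlan: int) -> bool:
    """Parse an allowed-VLAN string such as 'all' or '1,10,100-200' (assumed format)."""
    if allowed.strip().lower() == "all":
        return True
    for part in allowed.split(","):
        lo, _, hi = part.strip().partition("-")
        if int(lo) <= vlan <= int(hi or lo):
            return True
    return False


def leaking_ports(ports: dict[str, str], vlan: int, expected: set[str]) -> list[str]:
    """Ports that still carry the VRRP VLAN but should not (everything except `expected`)."""
    return sorted(p for p, allowed in ports.items()
                  if p not in expected and allows_vlan(allowed, vlan))


# Hypothetical port inventory for illustration (not taken from the thread).
ports = {
    "MX-Primary port 10": "161",     # access port between the MXs
    "MX-Primary port 3": "all",      # trunk to the switch stack, not yet pruned
    "Stack port 47": "1,10,20-30",   # already pruned
    "Stack port 48": "1-200",        # range still includes 161
}

print(mx_primary_ip, mx_spare_ip)
print(leaking_ports(ports, VRRP_VLAN, {"MX-Primary port 10"}))
```

Anything the audit reports besides the MX-to-MX access port is a place where VRRP traffic could still leak onto the switches.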

Downstream switches are usually a stack in our setups, unless there's only a single switch, of course. We experienced a few problems with stacks in earlier firmware (9.32, for example), but with the current stable version (9.36) everything works as expected; 10+, not so much. That switch stack is usually the STP root, unless the design warrants another switch being root.

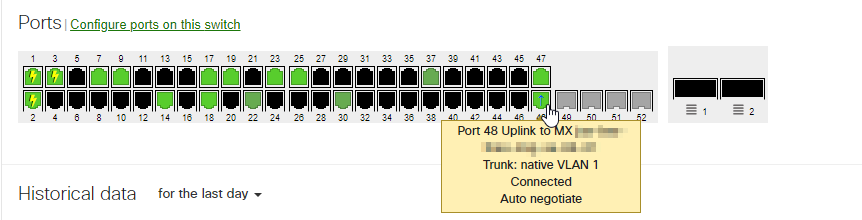

If configured that way, you see no blocked ports on the switches (ports 47 and 48 are the uplinks to each MX):

Important note:

To avoid outages if a single ISP fails, always connect both ISPs to both MXs. This requires at least two IP addresses in the same subnet from each ISP. Even better, use virtual IPs, but you would need at least a /29 per ISP to do that.
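A rough address budget for the virtual-IP case is sketched below. It assumes that each ISP subnet must hold the ISP gateway, one WAN address per MX, and the virtual IP itself. That role list is an assumption, not something stated in the post. A /30 only has two usable addresses, while a /29 has six. The addresses use the RFC 5737 documentation prefix, and the order in which roles receive addresses is arbitrary.

```python
import ipaddress

# Roles assumed to need an address in each ISP's WAN subnet when a virtual IP is used.
ROLES = ["ISP gateway", "MX-Primary WAN", "MX-Spare WAN", "Virtual IP"]


def plan(subnet: str) -> dict[str, ipaddress.IPv4Address]:
    """Assign one usable address per role, in order, or raise if the subnet is too small."""
    net = ipaddress.ip_network(subnet)
    hosts = list(net.hosts())
    if len(hosts) < len(ROLES):
        raise ValueError(f"{net} has {len(hosts)} usable addresses, need {len(ROLES)}")
    return dict(zip(ROLES, hosts))


for subnet in ("203.0.113.0/30", "203.0.113.0/29"):  # RFC 5737 documentation prefix
    try:
        print(subnet, {role: str(ip) for role, ip in plan(subnet).items()})
    except ValueError as err:
        print("too small:", err)
```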

The only issue with this design arises when the link from the master MX to the downstream switch fails, or when a single MX loses its ISP link. I think this is very rare, but I would like to know more if you have concerns about this design.

IMHO the Meraki documentation should mention this (prune the VRRP VLAN, don't create a loop). In theory a loop should work too, since STP would block one of the ports, but in our experience with Meraki such a design creates all sorts of quirky issues.

This setup (without a loop, but a dedicated VRRP VLAN between the MXes) works best for us currently.

HTH 🙂

---

@hoempf With this design, would the switch ports on the stack be configured as an aggregate?

---

@MoBrad If you mean the switchports connected to the MX LAN interfaces, then no 🙂