A possible typo in MV sense MQTT documentation

Hello,

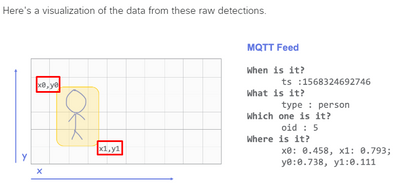

We are working on an upcoming integration using MQTT and came across a possible typo in the MV Sense MQTT documentation. We aren't sure whether it really is a typo or whether we misunderstand how Meraki handles coordinates: the response parameters and the diagram contradict each other. If anyone has worked with this, please clarify.

Link: https://developer.cisco.com/meraki/mv-sense/#!mqtt/raw-detections-a-list-containing-object-identifie...

Under the response parameters, the documentation states the following:

| Parameter | Description |
| --- | --- |
| x0, y0 | The x, y coordinates corresponding to the lower-right corner of the person detection box. They are proportional distances from the upper-left of the image. |
| x1, y1 | The x, y coordinates corresponding to the upper-left corner of the person detection box. They are proportional distances from the upper-left of the image. |

The diagram, however, shows x0, y0 as the top-left corner and x1, y1 as the bottom-right corner.
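To make "proportional distances from the upper-left of the image" concrete, here is a minimal sketch that converts the proportional values into pixel points. The helper name `to_pixels` and the 1920x1080 image size are assumptions for illustration only and are not part of the Meraki documentation. The sketch deliberately does not decide which point is which corner, since that is the open question.

```python
def to_pixels(box, width, height):
    """Convert proportional coordinates to pixel points.

    Assumes the origin (0, 0) is the upper-left of the image, so x grows
    to the right and y grows downward.
    """
    p0 = (round(box['x0'] * width), round(box['y0'] * height))
    p1 = (round(box['x1'] * width), round(box['y1'] * height))
    return p0, p1


# Hypothetical box on a hypothetical 1920x1080 frame
p0, p1 = to_pixels({'x0': 0.25, 'y0': 0.5, 'x1': 0.75, 'y1': 1.0}, 1920, 1080)
print(p0, p1)  # (480, 540) (1440, 1080)
```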

Agreed, they can't both be right. Using the "Where is it" example as the arbiter, I think the diagram is right and the text in the table is wrong. I'm looking into confirming this with the product team.
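Until that is confirmed, one way to see which description a real payload follows is to compare the two points directly. This is only a sketch: `corner_of_first_point` is a hypothetical helper, and it assumes the box arrives as a dict with the keys `x0`, `y0`, `x1`, `y1` and that the origin is the upper-left of the image.

```python
def corner_of_first_point(box):
    """Return which corner of the box the point (x0, y0) occupies.

    With the origin at the upper-left, a smaller x is further left and a
    smaller y is further up. Degenerate boxes (equal values) are not
    handled specially in this sketch.
    """
    horizontal = 'left' if box['x0'] < box['x1'] else 'right'
    vertical = 'upper' if box['y0'] < box['y1'] else 'lower'
    return f'{vertical}-{horizontal}'


# Box laid out the way the diagram describes it
print(corner_of_first_point({'x0': 0.2, 'y0': 0.1, 'x1': 0.6, 'y1': 0.9}))  # upper-left
# Box laid out the way the table text describes it
print(corner_of_first_point({'x0': 0.6, 'y0': 0.9, 'x1': 0.2, 'y1': 0.1}))  # lower-right
```

Running this over a handful of captured detections should show which convention the camera actually uses.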

Posting an update on the approach we chose so we can move forward until the Meraki product team clarifies this. We have to crop an image returned by the Snapshot API endpoint based on the MQTT person detection coordinates. Below is the sample function we are using. Rather than relying on an assumption about which point is which corner, it takes the min and max of each coordinate pair, so the crop is the same under either interpretation.

    from PIL import Image


    def img_crop(img_path, box):
        img = Image.open(img_path)
        # note: img.size in PIL returns width first
        w, h = img.size

        # take the min/max of each coordinate pair so the result does not
        # depend on which corner (x0, y0) refers to
        xmin = min(box['x0'], box['x1'])
        xmax = max(box['x0'], box['x1'])
        ymin = min(box['y0'], box['y1'])
        ymax = max(box['y0'], box['y1'])

        # scale the proportional coordinates to pixels;
        # crop expects (left, upper, right, lower)
        crop_region = (round(xmin * w), round(ymin * h),
                       round(xmax * w), round(ymax * h))

        # we found MV detects the body to identify a person, but in our case
        # it is crucial to have a face, so we scale the region as below;
        # adjust this to your requirements
        # crop_region = (xmin*w*0.75, ymin*h*0.75, xmax*w*1.25, ymax*h*1.25)

        return img.crop(crop_region)


    if __name__ == '__main__':
        box = {
            'x0': 1.0,
            'x1': 0.89,
            'y0': 0.861,
            'y1': 0.546
        }
        cropped = img_crop(img_path='test_img12.jpg', box=box)
        cropped.save('cropped.jpg')
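One caveat on the commented-out scaling line: multiplying the absolute coordinates by 0.75 and 1.25 can produce values outside the image (for example, xmax = 1.0 gives 1.25 * w). A possible alternative, sketched here under our own assumptions, is to pad the box by a fraction of its own size and clamp the result to the image bounds. The name `expand_box` and the default `margin=0.25` are illustrative choices, not anything Meraki or PIL prescribes.

```python
def expand_box(region, img_w, img_h, margin=0.25):
    """Pad a (left, upper, right, lower) pixel box on every side.

    The padding is `margin` times the box width/height, and the result is
    clamped so it never extends past the image edges.
    """
    left, upper, right, lower = region
    pad_x = (right - left) * margin
    pad_y = (lower - upper) * margin
    return (
        max(0, round(left - pad_x)),
        max(0, round(upper - pad_y)),
        min(img_w, round(right + pad_x)),
        min(img_h, round(lower + pad_y)),
    )


# Hypothetical 100x200 px box inside a 400x400 px image
print(expand_box((100, 100, 200, 300), 400, 400))  # (75, 50, 225, 350)
```

The returned tuple can be passed to `img.crop` in place of `crop_region`.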